AI in Vehicle Safety Systems - Transforming for Safer Roads

Over one million traffic deaths and between 20 and 50 million non‑fatal injuries are recorded globally per year. Safer driving is a must!

Accidents represent a considerable societal cost in terms of human lives, disability, and economic impact, according to a 2023 World Health Organization Report on Traffic Injuries. Advanced Driver Assistance Systems (ADAS) are crucial in addressing human error. Such errors contribute to nearly 94% of all automobile accidents, and ADAS features proactively mitigate these risks through advanced technologies.

The table below summarizes the most recent global figures from the World Health Organization (WHO).

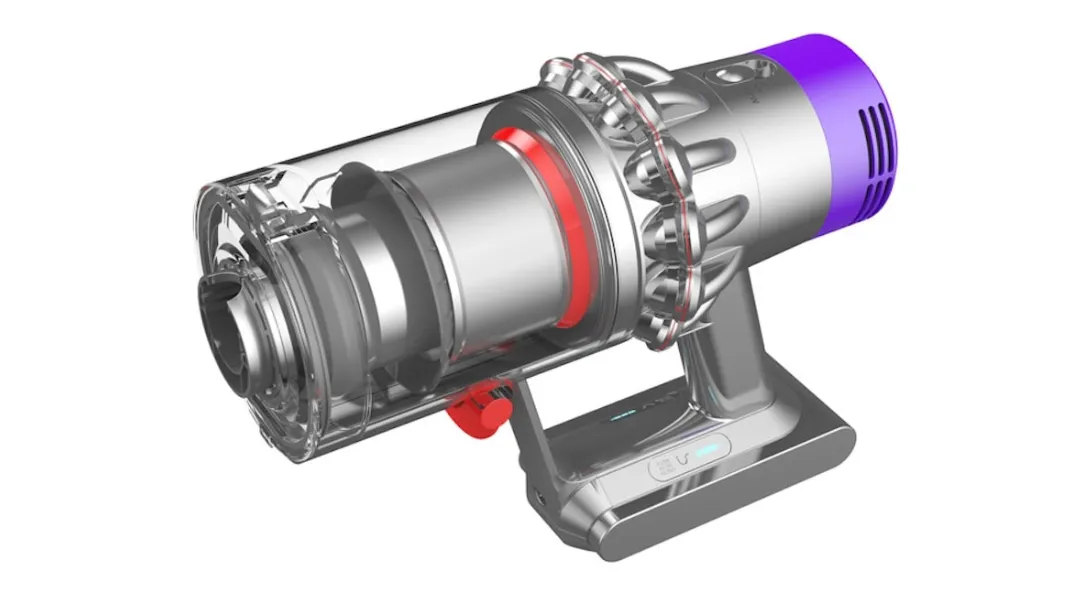

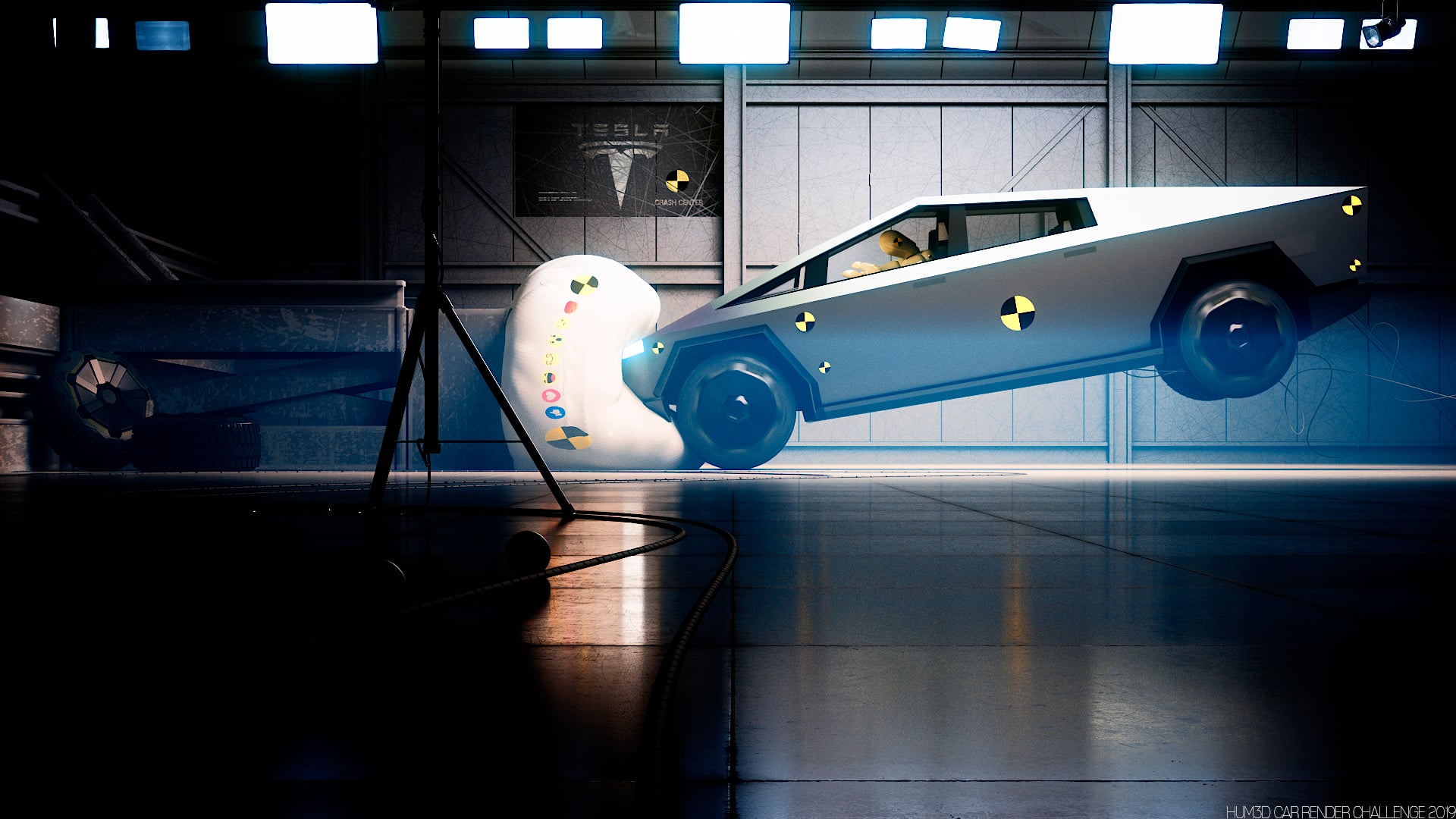

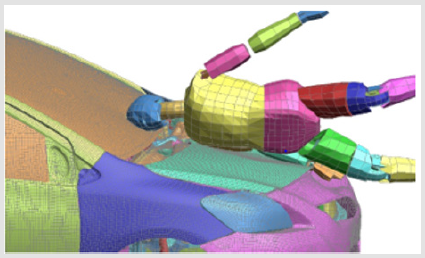

Saving lives is not optional. It is a high-impact engineering problem that calls for solutions such as crash test simulation in automotive design.

Today, AI is used to design vehicles by simulating crash scenarios, analyzing vehicle responses, and optimizing safety features, transforming road safety and leading to safer and more adaptive cars.

Thus, there is a systemic failure in mobility design that engineers can now address with the help of Artificial Intelligence (AI). However, deploying AI in vehicle safety systems also raises ethical considerations.

What’s new with AI?

- Instead of relying solely on rule-based logic, AI processes uncertain, high-dimensional data from sensors in real-time, enabling adaptive responses to scenarios that were not explicitly programmed. Most AI-powered safety systems are proactive approaches, helping prevent accidents through warnings or automatic interventions, such as braking or steering assistance.

- Since approximately 2012, AI has been driven by breakthroughs in deep learning, advances in sensor data fusion, and improvements in computing power. New architectures accelerated capabilities in perception and control, making real-time inference in complex environments possible on board self-driving cars.

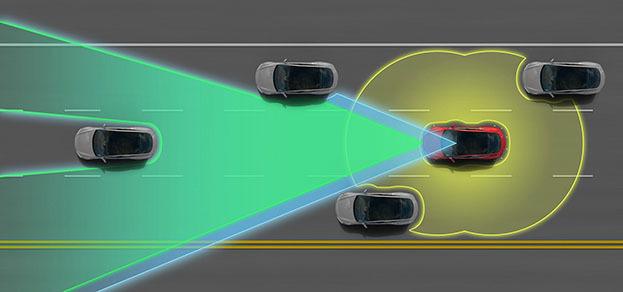

- The most advanced examples of AI transforming road safety can be found in Advanced Driver Assistance Systems (ADAS). Traditional control logic (fixed thresholds for braking or gap‑keeping) is replaced or complemented by adaptive models trained on large datasets of driving scenarios. These models generalize to conditions beyond those explicitly foreseen in the design phase. Traditionally, vehicle safety assessments relied heavily on manual inspections, which were time-consuming and prone to human error. AI-powered systems are now replacing manual inspections to improve accuracy and efficiency in vehicle maintenance and safety checks.

Examples:

- Automatic emergency braking: decisions for car safety are based on the integration of multiple sensors (camera, radar, LiDAR), which combine asynchronous data streams with varying latencies and error models.

- Adaptive cruise control: uses predictive estimation of the lead vehicle’s trajectory.
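
The decision step that sits downstream of sensor fusion in the two examples above can be illustrated with a minimal sketch. It assumes a fused track of the lead vehicle is already available; the `Track` fields, the constant-closing-speed prediction, and the two time-to-collision thresholds are illustrative assumptions, not values from any production system.

```python
from dataclasses import dataclass


@dataclass
class Track:
    """Hypothetical fused estimate of the lead vehicle, relative to the ego car."""
    gap_m: float              # measured distance to the lead vehicle (m)
    closing_speed_mps: float  # positive when the gap is shrinking (m/s)
    latency_s: float          # age of the measurement when it is used (s)


def compensate_latency(track: Track) -> float:
    """Project the gap forward to 'now', assuming constant closing speed."""
    return track.gap_m - track.closing_speed_mps * track.latency_s


def time_to_collision(track: Track) -> float:
    """Time-to-collision in seconds; infinity if the gap is not closing."""
    if track.closing_speed_mps <= 0.0:
        return float("inf")
    gap_now = max(compensate_latency(track), 0.0)
    return gap_now / track.closing_speed_mps


def aeb_action(track: Track, warn_ttc_s: float = 2.5, brake_ttc_s: float = 1.2) -> str:
    """Map TTC to an action. Both thresholds are assumed, not calibrated."""
    ttc = time_to_collision(track)
    if ttc <= brake_ttc_s:
        return "brake"
    if ttc <= warn_ttc_s:
        return "warn"
    return "none"


if __name__ == "__main__":
    print(aeb_action(Track(gap_m=20.0, closing_speed_mps=10.0, latency_s=0.0)))
```

In a learned system, the fixed thresholds would typically be replaced or complemented by a model that also accounts for uncertainty in the track.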

Understanding how AI differs from human perception and reflexes is essential when designing safety systems. Humans and AI operate with fundamentally different learning mechanisms and limitations.

The following table summarizes these human vs. AI differences related to car safety and various traffic conditions:

The AI-driven automotive safety sector is booming, driven by the adoption of ADAS and regulatory initiatives.

The automotive AI market is expected to reach USD 5.22 billion this year, growing at a 23.4% annual rate to USD 14.92 billion by 2030. The global market for advanced driver assistance systems (ADAS) is projected to reach USD 91.83 billion by 2025, highlighting the growing importance of these technologies.

This guide covers the complete engineering spectrum of car safety, including regulatory deadlines you must meet, crash test ratings, and the technical architectures that help vehicles survive accidents or better prevent them:

- Deep Learning Concepts for Automotive Safety

- The ABC of Car Safety: Foundational Concepts for Engineers

- Distracted Driving: Human Error and AI

- The Role of Computer Vision

- Other AI-Driven Car Safety Aspects

- Data Privacy in the Automotive Industry

- Business Perspective: AI Safety Systems Economics

- Future Directions in AI-Driven Vehicle Safety (Advanced Technical)

Deep Learning Concepts for Automotive Safety

Car safety is increasingly relying on Artificial Intelligence (AI) and deep learning to process data from sensors and make control decisions. Advanced automated technologies analyze real-time data from sensors and machine learning algorithms to optimize vehicle safety.

Application of AI

AI uses models to convert data from cameras, radar, LiDAR, and sensors into actions, surpassing rule-based systems. It learns from past data to detect hazards and adapt responses, helping prevent injuries. AI also predicts vehicle failures by analyzing data early, reducing accidents and breakdowns. AI systems continuously assess the vehicle’s state by analyzing real-time sensor data to evaluate operational status and detect potential issues, thereby improving safety and maintenance practices.

What is Deep Learning?

Deep learning refers to artificial neural networks with many processing layers that learn hierarchical representations of data.

This means:

- Detecting and classifying objects such as pedestrians, vehicles, or obstacles from raw sensor data.

- Predicting dynamic behavior, for example, estimating whether a pedestrian will enter the road.

- Recognizing driving patterns and driver states to detect distraction, fatigue, or drowsy driving, which is a key factor of risk addressed by advanced features in the vehicle.

How Does Learning Occur?

Learning occurs through training on large datasets of driving scenarios.

The process adjusts millions of parameters so that the mapping from inputs (sensor signals, environmental conditions) to outputs (warnings, braking, steering) minimizes prediction error.

This training typically uses supervised learning and requires large-scale computation on GPUs or specialized hardware before a model can, for example, detect pedestrians reliably.
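
The idea of adjusting parameters to minimize prediction error can be shown on a toy scale. The sketch below fits a two-parameter logistic model by batch gradient descent; real perception networks have millions of parameters and use dedicated frameworks, and the feature names and toy data here are purely hypothetical.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(w, b, x) -> float:
    """Predicted probability that the sample belongs to class 1."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def train_logistic(samples, labels, lr=0.5, epochs=2000):
    """Fit weights and bias by batch gradient descent on the log loss."""
    n_features = len(samples[0])
    w = [0.0] * n_features
    b = 0.0
    n = len(samples)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(samples, labels):
            err = predict(w, b, x) - y  # gradient of log loss w.r.t. the logit
            for j in range(n_features):
                grad_w[j] += err * x[j]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


# Hypothetical, normalized features: (closing speed, gap). Label 1 = "should brake".
SAMPLES = [(0.9, 0.1), (0.8, 0.2), (0.7, 0.3), (0.2, 0.9), (0.1, 0.8), (0.3, 0.7)]
LABELS = [1, 1, 1, 0, 0, 0]

if __name__ == "__main__":
    w, b = train_logistic(SAMPLES, LABELS)
    print(predict(w, b, (0.85, 0.15)), predict(w, b, (0.15, 0.85)))
```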

AI-driven vehicle inspections further enhance accuracy and operational efficiency by quickly identifying issues that may be overlooked in traditional manual inspection processes.

Which AI algorithms are Best for Vehicle Pedestrian Detection?

Convolutional Neural Networks (CNNs) are the standard for pedestrian detection due to their performance in image classification and object localization.

Fusion methods combine CNN vision with LiDAR/radar data processed by probabilistic filters, thereby improving robustness in the presence of occlusions, poor lighting, and adverse weather conditions. Ensemble models are also employed for critical applications.
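
As one possible instance of the probabilistic filters mentioned above, the sketch below uses a scalar Kalman filter to fuse range estimates from two sensors. The choice of a Kalman filter, the constant-velocity model, and all variances are assumptions made for illustration.

```python
def kalman_predict(mean, var, velocity, dt, process_var):
    """Propagate the range estimate forward under a constant-velocity model."""
    return mean + velocity * dt, var + process_var


def kalman_update(mean, var, measurement, meas_var):
    """Fuse a Gaussian prior with one Gaussian measurement (scalar case)."""
    gain = var / (var + meas_var)
    new_mean = mean + gain * (measurement - mean)
    new_var = (1.0 - gain) * var
    return new_mean, new_var


if __name__ == "__main__":
    # Prior range to a pedestrian: 20 m with variance 4 m^2 (assumed values).
    mean, var = 20.0, 4.0
    # Camera-derived range: noisier (variance 4). Radar range: more precise (variance 1).
    mean, var = kalman_update(mean, var, measurement=22.0, meas_var=4.0)
    mean, var = kalman_update(mean, var, measurement=21.0, meas_var=1.0)
    print(mean, var)
```

Each update shrinks the variance, which is why combining a noisy camera estimate with a more precise radar estimate is more robust than relying on either alone.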

More on CNNs

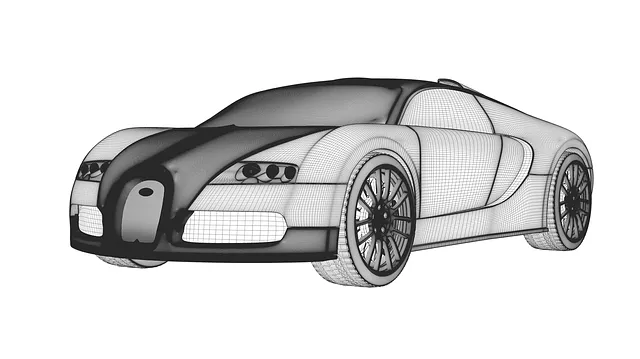

Convolutional Neural Networks (CNNs) process sensor inputs, such as raw camera feeds from vehicles, and identify key objects step by step.

- First, CNNs apply learned filters across image regions to detect features hierarchically: edges, shapes, and objects.

- Then, they combine these features into more complex ones, such as recognizing a car or a pedestrian.

- Finally, they use this layered information to find and classify objects in the raw feed, for instance to alert drivers to obstacles or to unsafe conditions such as fatigue or distraction.
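
The first step above, applying a filter across image regions, can be sketched in plain Python. The vertical-edge kernel here is hand-written for illustration; in a trained CNN the kernel values are learned from data, and the tiny image is a made-up example.

```python
def conv2d(image, kernel):
    """Valid-mode 2D cross-correlation (no padding, stride 1), as in a CNN layer."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0.0
            for di in range(kh):
                for dj in range(kw):
                    acc += image[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        out.append(row)
    return out


def relu(feature_map):
    """Keep positive responses only, as a typical CNN activation does."""
    return [[max(0.0, v) for v in row] for row in feature_map]


# Sobel-like vertical-edge filter (hand-written stand-in for a learned filter).
VERTICAL_EDGE = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]

# Toy 4x6 grayscale image: dark on the left, bright on the right.
IMAGE = [[0, 0, 0, 1, 1, 1] for _ in range(4)]

if __name__ == "__main__":
    for row in relu(conv2d(IMAGE, VERTICAL_EDGE)):
        print(row)
```

The filter responds only where the dark-to-bright transition falls inside its window, which is the sense in which early layers detect edges.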

Discover how 3D convolutional neural networks (3D CNNs) enable AI to learn 3D CAD shapes and transform product design in engineering, ultimately focusing on improving safety.

What is the New Paradigm in AI & Vehicle Safety?

The new paradigm is shifting from rule-based control to data-driven control, which adapts to novel situations and improves with the addition of new data.

AI-driven safety features, such as automatic braking, collision avoidance systems, adaptive cruise control (ACC), and driver monitoring systems, are enhancing road safety compared to traditional methods by combining sensor data and predictive models to detect risks, warn drivers, and prevent collisions. Collision avoidance systems can reduce collision risk by 25% or more.

For example, a meta-analysis found a 38% reduction in rear-end crashes for vehicles equipped with low-speed automatic emergency braking.

Read how, with AI-driven crash box optimization, engineers achieved 10% better energy absorption and exponentially faster design iterations.

The ABC of Car Safety: Foundational Concepts for Engineers

Before exploring advanced technologies, let’s review the core principles!

Braking Distance and Stopping Dynamics

Maintaining a safe distance between vehicles is crucial for road safety, as it provides sufficient space to react and stop promptly. Stopping distance includes reaction and braking distances. At 100 km/h on dry asphalt, you will travel over 60 meters before stopping, increasing to over 95 meters in wet conditions. Adaptive Cruise Control (ACC) systems help drivers keep a safe distance automatically, reducing the risk of collisions.
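
The split into reaction and braking distance can be made concrete with a short calculation. The reaction time (1.0 s) and friction coefficients (0.8 dry, 0.5 wet) below are assumed textbook-style values, so the results will not match the figures quoted above exactly; those depend on the assumptions behind them.

```python
G = 9.81  # gravitational acceleration, m/s^2


def stopping_distance_m(speed_kmh: float, reaction_time_s: float, friction_coeff: float) -> float:
    """Reaction distance plus braking distance on a flat road."""
    v = speed_kmh / 3.6                              # convert km/h to m/s
    reaction = v * reaction_time_s                   # distance covered before braking starts
    braking = v ** 2 / (2.0 * friction_coeff * G)    # from v^2 = 2 * a * d with a = mu * g
    return reaction + braking


if __name__ == "__main__":
    dry = stopping_distance_m(100.0, reaction_time_s=1.0, friction_coeff=0.8)
    wet = stopping_distance_m(100.0, reaction_time_s=1.0, friction_coeff=0.5)
    print(f"dry: {dry:.1f} m, wet: {wet:.1f} m")
```

Because braking distance grows with the square of speed, small speed reductions before braking begins have a disproportionate effect on the total.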

Crash Mechanics and Energy Management

Crashworthiness is about managing kinetic energy, which increases with the square of speed. Modern cars use crumple zones to absorb impact energy and a rigid cell to shield occupants. Restraints and load paths further enhance safety. In survivable crashes, occupants may briefly experience decelerations of 40-50 g, depending on impact direction.
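
A rough calculation shows why speed and crush distance dominate crash energy management. The sketch assumes constant deceleration over the crush distance, which real crash pulses do not follow, and the vehicle mass and crush distance are hypothetical inputs.

```python
G = 9.81  # gravitational acceleration, m/s^2


def kinetic_energy_j(mass_kg: float, speed_kmh: float) -> float:
    """Kinetic energy E = 1/2 * m * v^2, in joules."""
    v = speed_kmh / 3.6
    return 0.5 * mass_kg * v ** 2


def mean_deceleration_g(speed_kmh: float, crush_distance_m: float) -> float:
    """Average deceleration, in g, when stopping over the given crush distance."""
    v = speed_kmh / 3.6
    return v ** 2 / (2.0 * crush_distance_m) / G


if __name__ == "__main__":
    # Hypothetical 1500 kg car with 0.6 m of usable crush distance.
    print(kinetic_energy_j(1500.0, 50.0), kinetic_energy_j(1500.0, 100.0))
    print(mean_deceleration_g(50.0, 0.6))
```

Doubling the speed quadruples the energy the structure has to absorb, while a longer crush distance lowers the average deceleration the occupants experience.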

Ratings and Testing Protocols

Independent organizations utilize standardized crash tests, giving consumers comparable ratings.

Simulation Systems

Computational tools accelerate crashworthiness development. The main approaches to simulation tools in the automotive industry are summarized in the table.

Read more on the design of innovative crash-resistant solutions.

What is the Safety Hierarchy?

A 5-layer strategy encompasses all stages of an accident, from prevention to recovery. The traditional three-tier model (passive, active, integrated) fits this framework.

- 0. Primary (Design & Prevention)

  - Prevents hazardous situations from developing through fundamental vehicle design: visibility optimization, ergonomic controls, intuitive handling characteristics, and driver training programs that promote safe behavior before any electronic systems engage.

- 1. Active (Crash Avoidance)

  - Prevents crashes through intervention with features such as ABS, ESC, traction control, and Advanced Driver Assistance Systems (ADAS), including lane-keeping assist and blind-spot monitoring, which actively help drivers avoid collisions.

- 2. Pre-Crash (Preparation)

  - Prepares the vehicle and occupants in the milliseconds before an unavoidable impact: pre-tensions seatbelts, adjusts headrests to optimal positions, closes windows, and positions seats to maximize protection when sensors detect an imminent collision.

- 3. Passive (Crash Protection)

  - Protects occupants during a crash through crumple zones, a rigid cell, airbags, and seatbelts that absorb and distribute crash forces, thereby reducing the risk of injury.

- 4. Post-Crash (Rescue & Recovery)

  - Facilitates rescue and prevents secondary injuries after a collision: automatic emergency calls, fuel system cutoff to avoid fires, automatic door unlocking for emergency access, and hazard light activation to warn other traffic.

Independent organizations conduct standardized crash tests. Notable examples from the past are summarized in the table:

Distracted Driving: Human Error and AI

Human distraction during driving remains a notable contributor to road accidents. Common distractions include mobile phones, eating, and interacting with in-car technologies.

In the United States alone, distraction was responsible for 3,275 fatalities and approximately 324,819 injuries in 2023.

- In 2022, Texas reported 4,408 traffic fatalities, with distraction as the second leading cause.

- In Colorado, distraction contributed to a notable portion of the 764 traffic fatalities.

AI-Driven Solutions in Driver Monitoring

Several automotive companies have integrated AI-powered driver monitoring systems (DMS) into their vehicles. DMS utilizes cameras and AI algorithms to detect signs of fatigue, distraction, or impairment by analyzing physiological cues and behavioral patterns. These systems detect distraction and other risky behaviors, such as mobile phone use, and alert drivers when fatigue is leading to drowsiness or a general lack of focus on the road. For instance, AI enables vehicles to detect prolonged eyelid closure and head swaying as signs of drowsiness, allowing timely interventions to prevent accidents.

- Magna offers a DMS that uses AI to assess driver attention and alert drivers to prevent accidents.

- Caredrive provides a system that monitors driver behavior and provides real-time alerts to enhance safety.
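
The eyelid-closure cue described above can be turned into a simple drowsiness check once a vision model provides a per-frame eye-openness score. The sketch below assumes such a score in the range 0 to 1 is already available; the PERCLOS-style metric is a common choice, while the thresholds and function names are illustrative assumptions rather than any vendor's method.

```python
def perclos(eye_openness, closed_threshold=0.2):
    """Fraction of frames in which the eye counts as closed."""
    if not eye_openness:
        return 0.0
    closed = sum(1 for v in eye_openness if v < closed_threshold)
    return closed / len(eye_openness)


def longest_closure_s(eye_openness, fps, closed_threshold=0.2):
    """Duration, in seconds, of the longest uninterrupted eye closure."""
    longest = current = 0
    for v in eye_openness:
        current = current + 1 if v < closed_threshold else 0
        longest = max(longest, current)
    return longest / fps


def is_drowsy(eye_openness, fps, perclos_limit=0.15, closure_limit_s=1.0):
    """Flag drowsiness if eyes are closed too often or for too long (assumed limits)."""
    return (perclos(eye_openness) > perclos_limit
            or longest_closure_s(eye_openness, fps) >= closure_limit_s)


if __name__ == "__main__":
    normal_blink = [0.8] * 28 + [0.1] * 2   # 0.2 s blink at 10 frames per second
    long_closure = [0.8] * 20 + [0.1] * 10  # 1.0 s closure at 10 frames per second
    print(is_drowsy(normal_blink, fps=10), is_drowsy(long_closure, fps=10))
```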

The Role of Computer Vision

Computer vision and AI enable vehicles to perceive their environment by detecting pedestrians, reading signs, and monitoring lanes, enhancing driver assistance and semi-autonomous functions.

Examples in Vehicle Safety

- Pedestrian detection: CV algorithms identify pedestrians in various conditions (day/night, different weather, partial occlusion), enabling automatic braking to prevent collisions from occurring.

- Lane detection: CV detects lane markings and road boundaries to enable lane-keeping assistance and adaptive cruise control.

- Vehicle recognition and tracking: Identifies other vehicles, predicts their trajectories, and supports collision avoidance.

- Traffic sign recognition: Reads speed limits, stop signs, and warnings to inform the driver or autonomous control system.

Beyond these uses, CV supports features like Vehicle-to-Everything (V2X) communication, where the vehicle’s vision system interprets traffic infrastructure and shares data with nearby cars and road systems. CV also integrates with other AI modalities to assist drivers. For example, Natural Language Processing enables voice controls, allowing drivers to issue commands without manual distraction.

Tesla Vision

For scenarios in which the driver’s attention lapses, Tesla’s AI monitors for drowsiness and has recently begun recommending activation of FSD mode to handle driving tasks when the driver is detected as sleepy or inattentive.

- Tesla’s official Full Self-Driving page describes FSD’s use of cameras for navigation, steering, obstacle avoidance (e.g., bikes, cars), and driver assistance.

- Tesla Support on Autopilot and Vision explains the transition to vision-based systems for occupancy networks in FSD, enabling obstacle detection and avoidance without ultrasonic sensors.

- The Tesla Autopilot Support Page details camera-equipped 360-degree visibility for better protection, including blind spot checks and AI-driven assistance to reduce the severity of accidents.

- A recent WIRED article discusses Tesla’s FSD handling road situations, such as braking and steering, to assist a drowsy driver.

Other AI-Driven Car Safety Aspects

AI contributes to vehicle safety in multiple areas beyond driver assistance, including maintenance, post-crash response, and virtual testing.

- Predictive Maintenance: AI analyzes data from sensors to identify potential component failures before they occur, preventing breakdowns and accidents. AI-driven diagnostic checks use real-time data on the vehicle’s state to confirm that components are operating within expected conditions. The integration of AI in predictive maintenance reduces operational costs by minimizing downtime and the maintenance expenses associated with human intervention.

- Post-Crash Assistance: AI-powered systems can automatically contact emergency services and provide crucial information after a collision. Additionally, AI-driven analysis of vehicle data after an accident offers detailed insights into incidents, aiding in swift claim processing and liability determination.

- Virtual Testing: AI is used to create simulations and virtual environments for testing autonomous vehicle safety, reducing the need for extensive real-world testing.
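
The predictive maintenance idea in the list above can be illustrated with a very simple anomaly detector. The sketch flags sensor readings that deviate strongly from a healthy baseline using a z-score; production systems typically use richer models, and the temperature values and the limit of 3 standard deviations are assumptions for illustration.

```python
from statistics import mean, stdev


def anomaly_indices(readings, baseline_size, z_limit=3.0):
    """Return indices of readings that deviate from the baseline by more than z_limit sigmas."""
    baseline = readings[:baseline_size]
    mu = mean(baseline)
    sigma = stdev(baseline)
    flagged = []
    for i in range(baseline_size, len(readings)):
        z = (readings[i] - mu) / sigma if sigma > 0 else 0.0
        if abs(z) > z_limit:
            flagged.append(i)
    return flagged


if __name__ == "__main__":
    # Hypothetical brake temperature samples (degrees C): 8 healthy readings, then new data.
    temps = [70, 71, 69, 70, 72, 68, 70, 71, 70, 71, 85, 90]
    print(anomaly_indices(temps, baseline_size=8))
```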

Data Privacy in the Automotive Industry

AI integration presents a dual challenge: enhancing performance while ensuring privacy, security, and ethics. AI-enabled vehicles act as mobile sensors, producing and processing terabytes of data daily.

What Are the Privacy and Security Risk Factors in AI Datasets?

Datasets are vital for AI tasks such as collision avoidance and driver monitoring, but they also pose significant privacy and security risks. Mishandling sensitive information (driver identity, location, behavior) can breach laws like GDPR and CCPA, which demand consent, data minimization, and erasure rights. Manufacturers and AI developers must put safeguards in place to ensure compliance and maintain trust.

Needed Integrations

From a technical perspective, the previously listed challenges require integration of:

- Secure data pipelines using encryption in transit and at rest, hardware‑rooted security modules, and authenticated access control.

- Privacy‑preserving machine learning (e.g., federated learning, differential privacy) to allow model training without transferring raw personal data.

- Explainable AI (XAI) methods to produce interpretable decisions, enabling validation by engineers and regulators.

- Risk‑aware system design integrating critical standards such as ISO 26262 (functional safety of road vehicles) and ISO/SAE 21434 (road vehicle cybersecurity engineering).
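
The federated learning approach named in the list above can be sketched in a few lines: each vehicle trains locally and shares only model parameters, which a server combines. The weighted averaging below follows the general federated averaging idea; the flat parameter lists and sample counts are hypothetical, and real deployments add secure aggregation and communication layers that are not shown.

```python
def federated_average(client_weights, client_sizes):
    """Average client parameter vectors, weighted by each client's sample count.

    Only parameters reach the server; the raw driving data stays on the vehicle.
    """
    if len(client_weights) != len(client_sizes) or not client_weights:
        raise ValueError("need one sample count per client")
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[k] * n for w, n in zip(client_weights, client_sizes)) / total
        for k in range(n_params)
    ]


if __name__ == "__main__":
    # Two hypothetical vehicles with locally trained parameters and sample counts.
    print(federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3]))
```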

Types of Data Collected

- Sensor data: Cameras, LiDAR, and radar capture detailed environmental information.

- Location data: GPS tracks vehicle movement in real time.

- Driver data: Inputs from monitoring systems, including eye tracking and fatigue indicators.

- Vehicle telemetry: Speed, braking patterns, steering inputs, and system diagnostics.

Regulatory Considerations

Regulations such as GDPR and CCPA impose strict data protection rules. Manufacturers must ensure compliance by minimizing data collection, anonymizing data, securing storage, and obtaining informed consent from drivers.

For engineers designing AI systems, the privacy of data is a design requirement. Data protection must be integrated into the architecture of AI systems.

This requires multidisciplinary collaboration between software engineers, data scientists, cybersecurity specialists, and compliance teams.

Business Perspective: AI Safety Systems Economics

AI-driven systems represent significant capital investment, but they’re reshaping automotive business models across the value chain, from manufacturing to insurance underwriting.

As data from these systems becomes part of the business ecosystem, AI enables dynamic pricing models for tolls, parking fees, and insurance costs, utilizing real-time behavioral insights to reduce congestion, increase safety, and personalize insurance policies.

Cost-Benefit Analysis

Implementation costs for AI safety features vary by sophistication:

ROI drivers by stakeholder:

- OEMs: Reduced liability, premium pricing (Top Safety Pick+ ratings), regulatory compliance

- Fleets: 20–30% collision cost reduction, 15–25% lower insurance premiums, 10% fuel savings

- Society: WHO estimates every 1% crash reduction saves $100B globally

Automatic emergency braking alone can prevent up to 50% of rear-end crashes. For a 500-vehicle commercial fleet, comprehensive AI safety systems achieve positive ROI within 18–24 months through reduced claims and downtime.
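
A payback estimate of this kind reduces to simple arithmetic. The sketch below shows the structure of the calculation only; every input in the example call is a placeholder chosen for illustration, not a figure from this article or from any study, and the function name is hypothetical.

```python
import math


def payback_months(fixed_cost, upfront_per_vehicle, monthly_cost_per_vehicle,
                   monthly_savings_per_vehicle, fleet_size):
    """Months until cumulative net savings cover the upfront investment.

    Returns None when monthly savings do not exceed monthly running costs.
    """
    net_monthly = (monthly_savings_per_vehicle - monthly_cost_per_vehicle) * fleet_size
    if net_monthly <= 0:
        return None
    total_upfront = fixed_cost + upfront_per_vehicle * fleet_size
    return math.ceil(total_upfront / net_monthly)


if __name__ == "__main__":
    # Placeholder inputs for a 500-vehicle fleet (all values are assumptions).
    print(payback_months(fixed_cost=40_000, upfront_per_vehicle=1_200,
                         monthly_cost_per_vehicle=26, monthly_savings_per_vehicle=90,
                         fleet_size=500))
```

The result is most sensitive to the per-vehicle monthly savings, which is why claims data and downtime records are the inputs worth measuring carefully.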

Dealership and Insurance Operations

- Dealership impact

  - ADAS-equipped vehicles result in 30% more service visits for sensor calibration following windshield replacements or collisions. Technicians need costly manufacturer training ($2,000–$5,000) and calibration tools ($15,000–$40,000). Sales staff must clearly explain system limits and OTA updates to support premium pricing.

- Insurance transformation

  - LexisNexis Risk Solutions, in its ADAS Impact on Insurance Claims, shows that vehicles with ADAS features demonstrate:

    - a 23% reduction in bodily injury costs,

    - a 14% reduction in property damage costs,

    - an 8% reduction in collision claims versus non-ADAS vehicles.

Fleet Management Applications

AI tools support fleet managers by helping them oversee vehicle maintenance, safety, and driver behavior, which optimizes overall fleet operations. AI enables fleet operators to continuously monitor vehicle performance, providing real-time insights into each vehicle’s status and ensuring timely interventions and efficient management.

Geotab’s analysis shows fleet management systems with AI-driven safety features typically achieve positive ROI within the first year.

Future Directions in AI-Driven Vehicle Safety (Advanced Technical)

- Research Focus: In this advanced section, we will explore the next wave of research and engineering trends that are shaping AI-driven safety systems for autonomous and semi-autonomous vehicles.

- Enabling technologies: As we look at the road ahead, innovations such as connected vehicles, 5G, and IoT are becoming crucial for enhancing safety and enabling smarter decision-making.

- Conceptual goals: These capabilities are paving the way for systems that are adaptive, formally verifiable, and capable of reasoning under uncertainty.

Robust Multi-Modal Sensor Fusion

Current fusion architectures treat sensor modalities independently before late integration. Next-generation systems will employ attention mechanisms and transformer-based architectures to learn cross-modal correlations directly from raw data streams, improving robustness when individual sensors fail or provide degraded information.

Research in neural radiance fields and implicit 3D scene representations may enable more comprehensive environmental modeling from sparse, noisy data.
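
The attention-based fusion described in this section can be sketched with scaled dot-product attention over per-sensor feature vectors, with a mask for sensors that have failed. In a real transformer the query, keys, and values come from learned projections; here they are passed in directly, and all names and numbers are illustrative assumptions.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_fuse(query, keys, values, available):
    """Fuse per-modality values with attention, skipping unavailable sensors.

    Returns the fused vector and the attention weight given to each modality.
    """
    active = [i for i, ok in enumerate(available) if ok]
    if not active:
        raise ValueError("no sensor modality available")
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, keys[i])) / scale for i in active]
    weights = [0.0] * len(available)
    for i, w in zip(active, softmax(scores)):
        weights[i] = w
    dim = len(values[0])
    fused = [sum(weights[i] * values[i][j] for i in active) for j in range(dim)]
    return fused, weights


if __name__ == "__main__":
    keys = [[1.0, 0.0], [1.0, 0.0], [1.0, 0.0]]  # camera, radar, LiDAR (hypothetical)
    values = [[1.0], [2.0], [6.0]]
    print(attention_fuse([1.0, 0.0], keys, values, [True, True, True]))
    print(attention_fuse([1.0, 0.0], keys, values, [True, False, True]))  # radar failed
```

Masking a failed sensor renormalizes the weights over the remaining modalities, which is one way such architectures degrade gracefully.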

Continual Learning and Domain Adaptation

Deployed safety systems often encounter unseen scenarios, such as new infrastructure, regional conventions, or extreme weather conditions. Continual learning models, utilizing techniques such as elastic weight consolidation and progressive neural networks, adapt without forgetting prior knowledge. Federated learning allows fleet-wide knowledge sharing without centralizing sensitive data.
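
Elastic weight consolidation, mentioned above, adds a quadratic penalty that discourages changes to parameters that were important for earlier tasks. The sketch below computes that penalty and its gradient for flat parameter lists; the importance values stand in for a diagonal Fisher information estimate, and all numbers are hypothetical.

```python
def ewc_penalty(params, old_params, fisher, lam):
    """EWC penalty: (lam / 2) * sum_i F_i * (theta_i - theta_old_i)^2."""
    return 0.5 * lam * sum(
        f * (p - p0) ** 2 for p, p0, f in zip(params, old_params, fisher)
    )


def ewc_penalty_grad(params, old_params, fisher, lam):
    """Gradient of the penalty with respect to each parameter."""
    return [lam * f * (p - p0) for p, p0, f in zip(params, old_params, fisher)]


if __name__ == "__main__":
    old = [0.0, 2.0]     # parameters after training on the earlier task
    new = [1.0, 2.0]     # candidate parameters while learning the new task
    fisher = [4.0, 1.0]  # assumed importance of each parameter for the earlier task
    print(ewc_penalty(new, old, fisher, lam=2.0))
    print(ewc_penalty_grad(new, old, fisher, lam=2.0))
```

During training, this penalty is added to the loss of the new task, so parameters with high importance stay close to their earlier values.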

Formal Verification and Assurance

Neural networks’ non-determinism complicates certification. Methods combine neural networks with verifiable runtime monitors, where the neural network offers high performance and a verified fallback controller ensures safety. Techniques such as abstract interpretation, SMT solvers, and reachability analysis are used to guarantee the behavior of the neural network within bounded domains.
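
The pairing of a high-performance neural controller with a simpler fallback can be sketched as a runtime monitor. The gap check below is a simplified braking-distance rule chosen for illustration; it is not a formally verified controller, and the function names, braking limit, reaction time, and margin are all assumptions.

```python
def required_gap_m(ego_speed, lead_speed, max_brake, reaction_s, margin_m):
    """Conservative gap: ego reacts late, then both vehicles brake at max_brake."""
    ego_stop = ego_speed * reaction_s + ego_speed ** 2 / (2.0 * max_brake)
    lead_stop = lead_speed ** 2 / (2.0 * max_brake)
    return max(ego_stop - lead_stop, 0.0) + margin_m


def monitored_accel(nn_accel, gap_m, ego_speed, lead_speed,
                    max_accel=2.0, max_brake=6.0, reaction_s=0.5, margin_m=2.0):
    """Pass the neural network's command through only if the gap check holds."""
    needed = required_gap_m(ego_speed, lead_speed, max_brake, reaction_s, margin_m)
    if gap_m >= needed:
        # Clip the learned command to the actuator limits.
        return max(min(nn_accel, max_accel), -max_brake)
    # Fallback controller: brake at the maximum rate.
    return -max_brake


if __name__ == "__main__":
    print(monitored_accel(nn_accel=1.0, gap_m=30.0, ego_speed=20.0, lead_speed=20.0))
    print(monitored_accel(nn_accel=1.0, gap_m=8.0, ego_speed=20.0, lead_speed=20.0))
```

The monitor is small enough to analyze exhaustively, which is the point of the architecture: the assurance argument rests on the simple check, not on the neural network.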

Spatio-Temporal Risk Modeling

ADAS systems operate within short prediction horizons (2-5 seconds). Advanced planning requires longer-term probabilistic forecasting that captures interaction effects, such as how surrounding vehicles respond to lane changes.

Graph neural networks, often combined with conditional variational autoencoders, model vehicle interactions and forecast trajectories, enabling more detailed risk assessments. However, their computational complexity limits real-time use.

Simulation and Synthetic Data Generation

Physical validation can’t cover the billions of miles needed to prove improvements for rare events. High-fidelity simulations with learned models and procedural generation enable extensive virtual validation. Techniques such as domain randomization and photorealistic rendering reduce the sim-to-real gap, although closing it fully remains an open research challenge.
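
Domain randomization, as mentioned above, amounts to sampling scenario parameters from broad ranges so that a model does not overfit to one simulated appearance. The sketch below generates such parameter sets; the parameter names and ranges are hypothetical and would be replaced by whatever a given simulator exposes.

```python
import random


def sample_scenario(rng: random.Random) -> dict:
    """Draw one randomized scenario description (all ranges are assumptions)."""
    return {
        "time_of_day_h": rng.uniform(0.0, 24.0),
        "rain_intensity": rng.uniform(0.0, 1.0),
        "friction_coeff": rng.uniform(0.3, 0.9),
        "pedestrian_count": rng.randint(0, 10),
        "camera_noise_std": rng.uniform(0.0, 0.05),
    }


def generate_scenarios(n: int, seed: int = 0) -> list:
    """Generate n scenarios reproducibly from a fixed seed."""
    rng = random.Random(seed)
    return [sample_scenario(rng) for _ in range(n)]


if __name__ == "__main__":
    for scenario in generate_scenarios(3, seed=42):
        print(scenario)
```

Fixing the seed keeps a validation run reproducible, which matters when a failure found in simulation has to be replayed and investigated.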

FAQs

How does AI improve vehicle collision avoidance systems?

AI utilizes deep learning to integrate data from LiDAR, radar, and cameras for object classification, trajectory estimation, and collision prediction. Unlike rule-based systems, it adapts to dynamic situations, allowing for earlier hazard detection and more accurate control actions, such as braking or steering, thereby reducing delays and enhancing robustness under uncertainty.

What distinguishes AI-based from traditional vehicle safety systems?

Traditional systems use fixed thresholds and hard-coded logic (e.g., braking within a set distance). AI-based systems learn patterns from multiple perception channels, adapting through probabilistic reasoning and neural networks. AI generalizes to unexpected scenarios and improves with new data, unlike traditional systems with fixed, condition-limited performance.

How is machine learning used in car safety systems?

ML trains models on driving data to detect hazards, classify objects, and predict trajectories (including predicting failures in the vehicle system). Supervised learning aids object detection and lane recognition, while reinforcement learning improves decision-making. It also monitors drivers by predicting distraction or fatigue to facilitate interventions, and automatically adapts to changing conditions.

What is the cost of integrating AI safety systems in vehicles?

Sensor arrays (such as cameras, LiDAR, and radar) may cost $300–$3,000 per vehicle, while AI hardware (including edge processors) costs $200–$1,500. Algorithm and dataset development add expense, but mass production reduces costs.

What is AI-enabled adaptive cruise control?

AI-enabled ACC enhances traditional cruise control by leveraging deep learning and sensor fusion, paving the way for future self-driving car technologies. Unlike rule-based ACC, it handles complex situations, such as congestion and merging lanes.

How does V2X enhance AI-driven safety systems?

V2X communication allows vehicles to exchange real-time data with infrastructure, other vehicles, pedestrians, and networks, overcoming sensor limitations in non-line-of-sight scenarios.

Are there infrastructure challenges for V2X?

V2X deployment faces infrastructure hurdles, needing roadside units and standardization. Early smart city and pilot programs have shown a 30-40% reduction in intersection conflicts when V2V and V2I technologies are utilized.