AI R&D: How Artificial Intelligence Is Transforming Research and Development

Imagine slashing R&D timelines by up to 50% and unlocking $600 billion in annual value as AI transforms the research and development process. Over the past decade, AI-driven methods have revolutionized product design, testing, and validation, reducing costs and accelerating breakthroughs, such as drug discovery, from years to months in the biopharmaceutical industry. This revolution extends beyond efficiency, offering C-suite leaders a strategic edge through data-driven decisions, mitigated risks (e.g., 30% fewer failed trials), and a clear roadmap for integrating AI into workflows. Addressing challenges such as data bias and transparency, this guide empowers executives to lead the charge in reshaping industries, from pharmaceuticals to aerospace.

Human welfare has improved gradually throughout history, often over the course of decades or centuries. Innovations such as the steam engine, lasers, and vaccines have driven progress and raised living standards. Today, innovation faces higher costs and greater obstacles, and the rate of return on R&D spending has slowed.

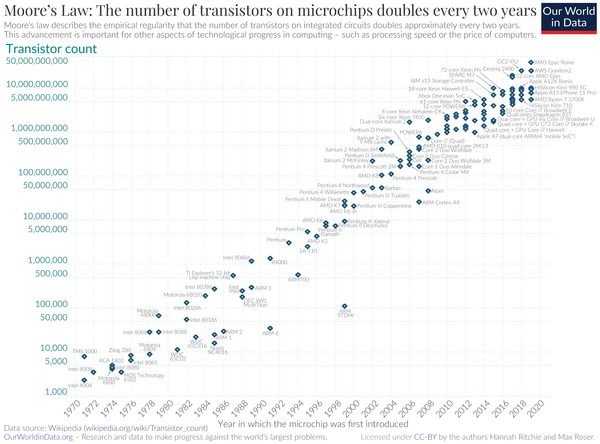

In the semiconductor industry, Moore’s Law (which predicted that the number of transistors would double approximately every two years) is increasingly difficult to sustain. The decline is not in the law itself but in the rate of improvement per dollar of R&D and overall productivity gains.

Artificial Intelligence offers the potential to reverse this slowdown, accelerate discovery, and support economic growth.

The most significant financial impacts of AI in R&D are observed in industries with high R&D intensity, such as pharmaceuticals, software, and semiconductors.

This article, updated with recent developments, highlights the immense potential of Artificial Intelligence in transforming the research process.

The strength of AI lies in its ability to connect development directly with real-world customer data, linking product design, consumer preferences, and performance feedback seamlessly. AI automatically extracts relevant data from texts, speeding up literature reviews for researchers. It replaces intuition and theoretical assumptions with evidence-based, data-driven decisions.

We examine how industries are integrating AI, not only in biopharma but also across materials science and the industrial design process, and why this evolution matters for long-term economic growth.

Recent industry partnerships and consortia confirm the shift from experimentation to full-scale deployment of AI-driven methods. Large pharmaceutical companies are signing multi-hundred-million-dollar agreements and joining data-sharing alliances to train discovery models that leverage AI.

A report by the Boston Consulting Group (BCG) finds that in research-intensive sectors, AI applied to R&D accounts for a sizable share of value creation: roughly 27% in biopharma and 29% in automotive.

This signals that AI in R&D has entered a phase of industrial maturity.

Measured effects have begun to appear:

- McKinsey estimates that AI could double the pace of R&D and unlock up to approximately $500 billion per year in additional value from accelerated innovation.⁽¹⁾

- At the same time, a 2025 BCG survey identified that only about 5% of companies achieve measurable value from their Artificial Intelligence initiatives, while around 60% report minimal results, indicating a significant performance gap.⁽²⁾

- PwC offers a more optimistic counterpoint, projecting that AI can reduce product development lifecycles by up to 50%, depending on the industry and data maturity.⁽³⁾

- Deloitte’s 2024 report, Measuring the Return from Pharmaceutical Innovation, highlights AI’s role in reducing R&D costs by optimizing drug candidate selection, estimating a 20–30% decrease in late-stage trial failures, which translates to savings of over $7.7 billion annually in the pharmaceutical sector.⁽⁴⁾

We focus in particular on applications that use AI to enhance R&D efficiency, from adopting AI technologies and integrating them into workflows to assessing their outcomes and their impact on human researchers.

The logic of AI integration in R&D follows a simple trajectory:

insight → mapping → acceleration

Artificial Intelligence has become a structural component of research and development, generating hypotheses, analyzing complex datasets, and optimizing design and testing models. In many laboratories and engineering departments, Artificial Intelligence now contributes directly to discovery and validation, rather than merely providing analytical support.

Explore the Structure of AI-Driven R&D! Discover how Artificial Intelligence reshapes Research and Development, from knowledge creation to industrial application.

This guide outlines foundational concepts, core technologies, and real-world integrations across industries that adopt AI technologies:

- Understanding AI in R&D

- What Does R&D Mean?

- R&D Case Histories

- What is Artificial Intelligence R&D?

- Is AI Part of R&D?

- What are the Four Core Components of AI in R&D?

- The Rise of Machine Learning in Research Pipelines

- What is Machine Learning?

- What is the Core of Machine Learning?

- What are the Three Types of Machine Learning Methods?

- What is Deep Learning?

- What is a Typical Deep Neural Network?

- What are the Most Common Deep Learning Architectures?

- What are 3D CNNs?

- How Do Machine Learning and Deep Learning Fit into Research Work?

- Role of Computer Vision and Foundation Models

- Drug Discovery Process

- Classical Drug Discovery: Canonical Workflow

- Systemic Problems of the Traditional Pipeline

- Drug Discovery with AI

- Predictive Modeling

- Application Examples

- Generative AI for Molecular Design

- Human–AI Synergy in the Research Pipeline

- Conceptual and Technical Constraints

- Material Science

- Simulation and Data-Driven Design

- Other Sectors

- Classical Product Development Process

- What is the Canonical Product Development Process?

- The Challenges

- Product Development and Artificial Intelligence

- Predictions with Deep Learning

- GenAI or Design Exploration

- GenAI Examples in Design

- Integration with PLM and Real-Time Feedback

- Obstacles and Critical Considerations

- Conceptual Shift in Engineering Design

- How to Implement AI in R&D Teams

- Data, Tooling, and Governance

- People, Skills, and Change Management

- Strategy and Measurement

- How to Measure ROI

- Common Pitfalls and the 30% Rule in AI

- Market Trends and Investment Notes

- Top AI Stocks to Monitor Now

- What Do Stakeholders Ask?

- Future Trends – Conclusion

Appendix – 2025 Nobel Prize in Economic Sciences: Innovation, Growth & Creative Destruction

Understanding AI in R&D

This section introduces the role of Artificial Intelligence within Research and Development for readers in academia, industry, and senior management who study or manage innovation processes.

What Does R&D Mean?

R&D is a structured way to transform uncertainty into knowledge and knowledge into utility. In advanced sectors, such as pharmaceuticals, it serves as the primary engine of competitive advantage. Far from being a cost center, R&D defines an organization’s ability to anticipate, shape, and respond to technological change.

- Research creates new knowledge by exploring mechanisms, structures, and principles across various fields. Its results are often abstract but valuable for explanation or prediction. Results can be presented in multiple forms, including models, data, proofs, or methods.

- Development turns the above knowledge into practical technologies. For instance, development refines laboratory results into reliable products or validates their performance. Thus, development transforms scientific knowledge into technical capabilities that benefit the innovation process.

- Research + Development. The above difference, however, is not so clear-cut. Modern R&D spans a spectrum from fundamental discovery (long-term, uncertain results) to applied engineering (short-term, targeted goals). R&D encompasses a range of activities, including theory development, experimentation, modeling, prototyping, and validation. Each step feeds into the next in an ongoing, iterative feedback loop. Our goal is to explore how we can leverage AI to make this process even more efficient across various fields, including the pharmaceutical industry and aerospace engineering.

R&D Case Histories

Great ideas fuel innovative products from various industries, and research and development is the engine behind them, combining creative exploration with focused progress.

Top R&D teams share a few key habits:

- They keep research and production in sync

- They encourage curiosity while staying aligned with real-world goals

- They give ideas sufficient time to mature and develop.

Whether developing a new drug, a more resilient alloy, or an enhanced mechanical design, the innovation process fundamentally involves posing insightful questions and transforming responses into practical applications.

- Pharmaceutical discovery shows this interaction. The rise of research-based pharmaceutical companies after World War II (e.g., Merck, Pfizer, Roche) combined biochemistry, medicinal chemistry, and clinical testing into organized pipelines. The discovery of penicillin derivatives, beta-blockers, and statins all started as research on biological mechanisms and grew through structured development into influential therapies. Today, the same R&D approach supports computational drug design and biologics manufacturing.

- In materials science, Corning’s investment in glass chemistry led to innovative products, from optical fibers to Gorilla Glass; DuPont’s materials research yielded Kevlar and Teflon. These breakthroughs resulted from long-term research programs linked to scalable engineering.

- In the semiconductor and electronics industry, Bell Labs and Intel demonstrated how fundamental physics could be transformed, with the transistor, laser, and information theory originating in exploratory yet engineering-focused environments. Their cross-disciplinary R&D model remains a benchmark.

- In aerospace and automotive engineering, R&D integrates aerodynamics, materials, and digital simulation to achieve performance beyond incremental improvement. NASA’s early research in computational fluid dynamics (CFD) was later adopted by leading companies such as Boeing and Airbus, demonstrating how public R&D can contribute to industrial capability.

What is Artificial Intelligence R&D?

Artificial Intelligence R&D applies AI techniques to accelerate innovation by assisting the research and development process. AI methods generate insights for teams by detecting patterns and correlations that are too subtle or multidimensional for humans to recognize directly.

A concrete example is the collaboration between AstraZeneca and BenevolentAI: using the Benevolent Platform™, the teams were able to leverage AI models to integrate and analyse large sets of literature, genetics, chemistry, and clinical data, resulting in the AI-driven selection of a novel target for chronic kidney disease (CKD) in 2021.

The value of AI lies in revealing functional links between molecular pathways and disease mechanisms that were previously not captured by conventional methods.

Artificial Intelligence R&D includes areas where significant gains can be achieved through the application of AI tools:

- creating new AI models and AI algorithms,

- integrating AI tools into research processes,

- evaluating AI systems in real projects.

Artificial Intelligence encompasses foundational research, applied projects, and product integration. Teams use it in various ways, such as developing deep learning models for image analysis, training foundation models for text generation, or guiding experimental priorities by highlighting the most promising directions.

Is AI Part of R&D?

AI becomes part of R&D when it is used to generate hypotheses, run simulations, or automate analysis that supports discovery, thanks to AI’s ability to recognize complex patterns and extract correlations from large datasets beyond human intuition.

It is also an R&D activity when teams design new Artificial Intelligence architectures, improve existing models, or develop methods that extend current capabilities.

In essence, Artificial Intelligence belongs to R&D whenever the usage or creation of AI algorithms expands knowledge, improves design efficiency, or delivers new technical functions ready for application.

What are the Four Core Components of AI in R&D?

The four core components are Data and Data Pipelines, AI Tools and Platforms, Human Expertise, and Governance and Measurement, as summarized in the table:

| Component | Description | Key Point |
| --- | --- | --- |
| Data and Data Pipelines | Clean, labeled data is mandatory. Data quality affects model results directly. | Reliable data pipelines ensure accuracy and consistency in AI-driven CAE workflows. |
| AI Tools and Platforms | Includes training infrastructure, model hosting, and experiment tracking. | Scalable platforms enable efficient model development, testing, and deployment. |
| Human Expertise | Teams must include domain experts, data scientists, and engineers. Collaboration is essential. | Cross-disciplinary collaboration ensures technical soundness and relevance to CAE. |
| Governance and Measurement | Policies, reproducibility, and metrics are needed to track performance improvements. | Transparent governance guarantees accountability and continuous improvement of AI models. |

The Four Core Components of AI in R&D

A note on scope:

A practical setup of AI in R&D requires clean data, effective tools, and skilled human expertise. While AI provides a competitive advantage to its adopters, it also involves governance and a method for measuring its impact to achieve better performance.

The concept of AI in R&D encompasses more than just code and AI models. It covers the whole product development process, from hypothesis generation to the testing of physical prototypes where applicable. It supports research processes and product design by automating repetitive tasks and generating insights from large datasets.

The Rise of Machine Learning in Research Pipelines

Machine learning (ML) has become a component of research pipelines. Within AI technologies, ML allows data-rich fields to surpass the limits of manual pattern recognition and empirical intuition. ML integration reshapes how data is analyzed, and also how hypotheses are generated and validated.

ML reduces repetitive human analysis by leveraging AI’s ability to identify patterns in large, complex datasets. This guides smarter experimental or computational choices, letting models predict which experiments will yield the most valuable insights before they’re even run.

What is Machine Learning?

Machine Learning algorithms infer patterns or functional relationships from empirical data, without explicit task-specific programming.

The central idea of Machine Learning is inductive generalization, which involves using a finite set of observations to approximate an underlying data-generating mechanism.

What is the Core of Machine Learning?

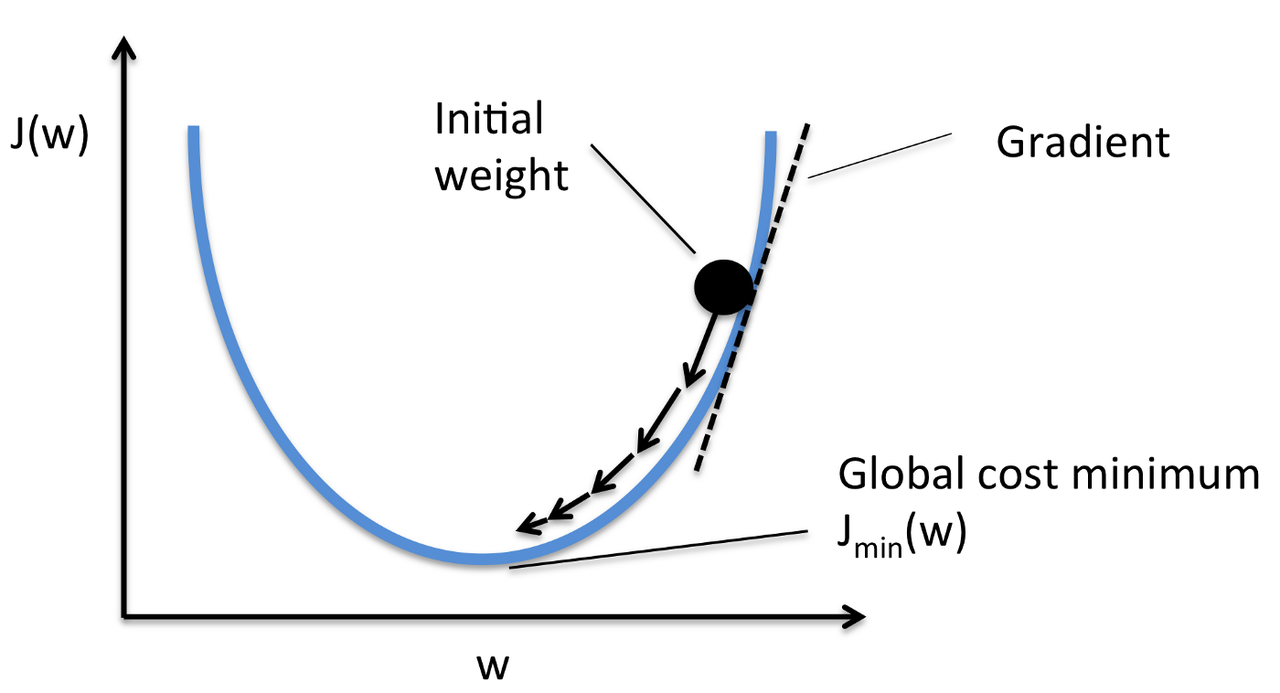

At its core, ML formalizes a process long familiar to empirical science: inferring a model from evidence. ML does so through optimization rather than deduction.

An ML model defines a function f(x; θ) parameterized by parameters θ, mapping input features x to outputs y.

The learning process consists of adjusting parameters θ to minimize a loss function J(y, f(x; θ)), which quantifies the discrepancy between predictions and observed data.

This is an optimization task that often proceeds through gradient-based methods such as stochastic gradient descent.
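As a minimal sketch of the idea above, the following Python snippet fits a linear model f(x; θ) by stochastic gradient descent on a squared-error loss. The toy data, learning rate, and function names are illustrative assumptions, not taken from any particular library or from the original article.

```python
import random


def predict(x, theta):
    """Linear model f(x; theta) = theta[0] + theta[1] * x."""
    return theta[0] + theta[1] * x


def loss(data, theta):
    """Mean squared error J between observed y and predictions f(x; theta)."""
    return sum((y - predict(x, theta)) ** 2 for x, y in data) / len(data)


def sgd(data, theta, lr=0.05, epochs=200, seed=0):
    """Stochastic gradient descent: update theta one observation at a time."""
    rng = random.Random(seed)
    theta = list(theta)
    data = list(data)
    for _ in range(epochs):
        rng.shuffle(data)
        for x, y in data:
            err = predict(x, theta) - y
            # Gradient of the per-sample squared error (f - y)^2 w.r.t. theta.
            theta[0] -= lr * 2 * err
            theta[1] -= lr * 2 * err * x
    return theta


# Hypothetical noise-free observations generated from y = 1 + 2x.
data = [(i / 10, 1 + 2 * (i / 10)) for i in range(11)]
theta_hat = sgd(data, [0.0, 0.0])
```

On this toy dataset the fitted parameters approach the generating values (intercept 1, slope 2). Real research data is noisy, so the loss would settle at a nonzero value rather than vanish.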

What are the Three Types of Machine Learning Methods?

ML methods are typically divided into three categories: Supervised, Unsupervised, and Reinforcement learning.

| Learning Type | Description | Examples | Key Goal |
| --- | --- | --- | --- |
| Supervised Learning | Uses labeled data, i.e., (x, y) pairs, to learn a mapping from inputs x to outputs y | Regression (predicting house prices); Classification (image recognition) | Predict labels or continuous outputs from inputs |
| Unsupervised Learning | Learns latent structure in unlabeled data, e.g., clusters or probability distributions | Clustering (customer segmentation); Dimensionality reduction (PCA) | Discover hidden patterns or structure |
| Reinforcement Learning | An agent learns through interaction and feedback via rewards or penalties | Robotics control; Game AI (chess, Go) | Learn a policy to maximize reward |

Types of Learning

ML does not seek “understanding” in the epistemological sense: its models are judged by predictive validity, not interpretability. However, in scientific contexts, these two goals can align, especially when interpretable models, such as decision trees or sparse regressions, reveal causal or mechanistic insights. The challenge for research is striking a balance between accuracy and clarity, particularly when models become complex and opaque due to their internal intricacies.

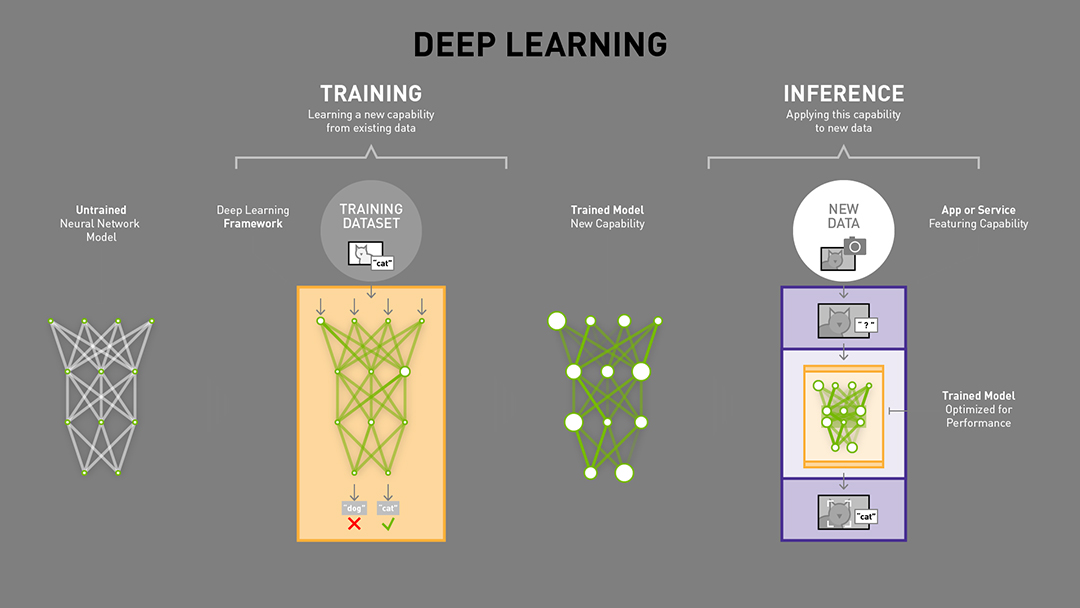

What is Deep Learning?

Deep learning (DL) is a subdomain of Machine Learning that emerged in the past decade. Deep Learning exploits hierarchical representations learned through artificial neural networks. The term “deep” refers to the multiple layers through which data are successively transformed. Specialized layers enable the system to learn increasingly abstract features. In deep learning models, each layer computes a nonlinear transformation of the previous one, allowing complex mappings between inputs and outputs to be modeled without manual feature engineering.

What is a Typical Deep Neural Network?

A typical deep neural network can be described as a composition of functions:

f(x) = fₙ( … f₂(f₁(x, θ₁), θ₂) … , θₙ)

Where:

- n = total number of layers

- fᵢ = function of layer i

- θᵢ = parameters of layer i

Training involves propagating the error between predicted and actual outputs backward through the network (backpropagation) and adjusting parameters to minimize the loss function.
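The composition of layer functions can be sketched in a few lines of plain Python. This is an illustrative forward pass only: the layer sizes, weights, and the choice of tanh as the nonlinearity are assumptions made for the example, and the backward pass is omitted.

```python
import math


def dense(x, weights, biases):
    """One layer f_i(x, theta_i): affine map followed by a tanh nonlinearity."""
    return [
        math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
        for row, b in zip(weights, biases)
    ]


def forward(x, layers):
    """Compose layers: f(x) = f_n(... f_2(f_1(x, theta_1), theta_2) ..., theta_n)."""
    for weights, biases in layers:
        x = dense(x, weights, biases)
    return x


# Hypothetical parameters theta_1, theta_2: 2 inputs -> 3 hidden units -> 1 output.
layers = [
    ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1]),
    ([[0.7, -0.5, 0.2]], [0.05]),
]
output = forward([1.0, 2.0], layers)
```

Each entry in `layers` plays the role of one θᵢ. In practice a framework with automatic differentiation would compute the gradients needed to adjust these parameters.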

With sufficient depth or width, nonlinear neural networks can approximate virtually any continuous function, a property formalized by the Universal Approximation Theorem.

However, depth also introduces challenges, namely:

- overfitting

- vanishing gradients

- need for large annotated datasets.

What are the Most Common Deep Learning Architectures?

Deep learning types reflect general ML categories (supervised, unsupervised, reinforcement), but the boundaries blur. Autoencoders and self-supervised systems learn without labels, while deep reinforcement learning combines neural approximators with policy optimization. Neural network architectures vary depending on data modality, as shown in the table.

| Network Type | Description | Examples | Key Strengths |
| --- | --- | --- | --- |
| Fully Connected (Dense) Networks | Each neuron connects to every neuron in the next layer, suitable for general-purpose tabular data. | Predictive modeling with structured datasets | Flexibility for diverse data types |
| Convolutional Neural Networks (CNNs) | Utilize local receptive fields to capture translational invariance in spatially structured inputs. | Image recognition, video analysis | Strong feature extraction for images |
| Recurrent Neural Networks (RNNs) / Transformers | Designed for temporal or sequential data, modeling dependencies across time or symbolic order. | Speech recognition, language modeling | Sequence modeling and context learning |
| Graph Neural Networks (GNNs) | Operate on data represented as graphs (nodes and edges), learning relationships between entities. | Molecular property prediction, social networks | Capturing complex relational structures |

Neural Network Architectures

What are 3D CNNs?

Among more specialized forms, 3D CNNs extend convolution operations into 3D data, enabling analysis of 3D medical imaging, molecular configurations, or spatiotemporal fluid dynamics.

They slide small, trainable filters across the three dimensions (x, y, z) to capture local spatial patterns and depth-related correlations. This capability is crucial for modeling physical and biological systems. Deep learning excels at representation learning, discovering features that make data linearly separable or predictable. This changes the research cycle: features that once required expert design now emerge automatically from the optimization process.
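To make the sliding-filter idea concrete, here is a minimal pure-Python sketch of a single 3D convolution (strictly, a cross-correlation, as most deep learning libraries implement it) with no padding and stride 1. The volume and filter values are toy assumptions; production code would rely on an optimized library instead of nested loops.

```python
def conv3d(volume, kernel):
    """Valid 3D cross-correlation (no padding, stride 1) on nested lists."""
    depth, height, width = len(volume), len(volume[0]), len(volume[0][0])
    kd, kh, kw = len(kernel), len(kernel[0]), len(kernel[0][0])
    out = []
    for z in range(depth - kd + 1):
        plane = []
        for y in range(height - kh + 1):
            row = []
            for x in range(width - kw + 1):
                # Weighted sum over the local 3D neighbourhood under the filter.
                acc = 0.0
                for dz in range(kd):
                    for dy in range(kh):
                        for dx in range(kw):
                            acc += volume[z + dz][y + dy][x + dx] * kernel[dz][dy][dx]
                row.append(acc)
            plane.append(row)
        out.append(plane)
    return out


# Toy inputs: a 4x4x4 volume of ones and a 2x2x2 summing filter.
volume = [[[1.0] * 4 for _ in range(4)] for _ in range(4)]
kernel = [[[1.0] * 2 for _ in range(2)] for _ in range(2)]
result = conv3d(volume, kernel)
```

With these inputs the output is a 3×3×3 grid in which every entry equals 8.0, the sum over the eight voxels covered by the filter. In a trained 3D CNN the filter weights are learned rather than fixed.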

How Do Machine Learning and Deep Learning Fit Into Research Work?

Machine Learning (ML) and Deep Learning are most effective in research stages where pattern recognition or prediction adds value.

Typical placements are:

- data cleaning and preprocessing,

- feature extraction and representation,

- predictive modeling and scoring,

- prioritization of experiments or tests.

ML models help human researchers by identifying signals hidden in complex data. They free up experts to focus on interpretation and more efficient decision-making processes.

Role of Computer Vision and Foundation Models

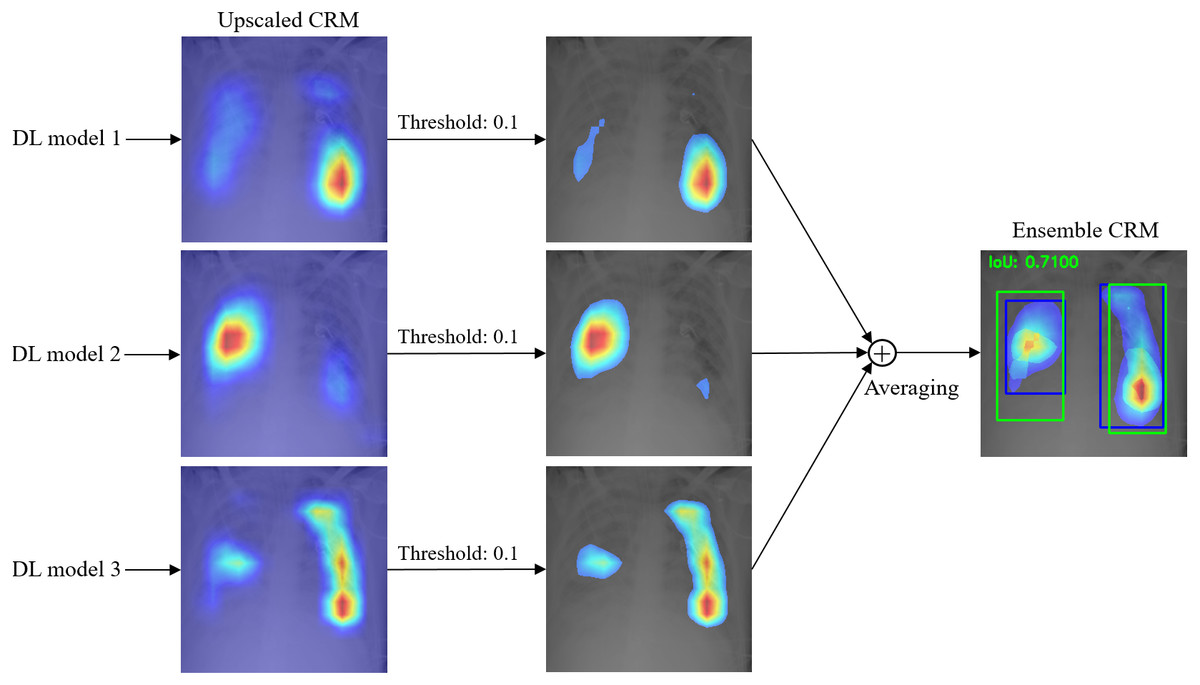

Computer vision and foundation models represent two key pillars of AI research and development. Both support the analysis of complex data and reduce manual workload across various research processes.

- Computer vision processes image and video data using Machine Learning techniques. The system converts pixels into numerical features, detects structures, and classifies patterns. These models learn to recognize defects, shapes, or behaviors after exposure to large annotated datasets.

- Computer vision accelerates quality control, microscope image analysis, and automated inspection. Computer vision helps researchers to simulate complex scenarios and identify patterns that would be invisible to manual review. In life sciences, computer vision supports clinical trials and the discovery of new drugs by analyzing cell structures and tissue responses.

- Foundation models are large-scale AI systems trained on diverse, unstructured data such as text, images, or molecular graphs. Once trained, they can adapt to specific domains through fine-tuning. This flexibility helps researchers to reuse the same model architecture across technical domains with limited data.

- Foundation models improve predictive analytics and generative design. They simplify integration across the product development process. These models extend human expertise by generating candidate ideas, summarizing scientific literature, or creating synthetic data for experiments.

- Foundation models are now a core part of AI R&D because they unify many AI tools under a single adaptable framework; they help organizations implement AI at scale and optimize resource allocation across multiple research pipelines.

Artificial Intelligence applied in Research and Development can bring early, concrete wins in sectors such as life sciences and materials science through data-driven decisions. Those wins come from predictive analytics, simulation, and automating routine tasks.

Areas covered later are:

- Drug discovery and AI-driven innovation

- Boosting discovery in the science of materials

- How product development processes can achieve significant gains through AI-driven tools

Drug Discovery Process

We will first summarize the classic process of drug discovery (and drug development), as follows:

- Classical Drug Discovery: Canonical Workflow

- Systemic Problems of the Traditional Pipeline

Classical Drug Discovery: Canonical Workflow

Drug discovery, in its classical form, is an experimental and iterative enterprise that bridges chemistry, biology, and pharmacology. Its purpose is to identify drug candidates, molecular entities that modulate a biological target associated with a disease, while being safe and efficacious in vivo. Despite its apparent linearity, the process is highly nonlinear in practice, characterized by feedback loops, attrition, and the need for multidisciplinary coordination.

The canonical workflow can be summarized in five interconnected stages:

| Stage | Description |
| --- | --- |
| Target Identification | Select and validate a biological target (protein, enzyme, receptor) with potential therapeutic impact. |
| Hit Discovery | Screen large compound libraries (10⁴–10⁶) via high-throughput or fragment-based methods to find active hits. |
| Hit-to-Lead / Optimization | Chemically refine hits for potency, selectivity, and pharmacokinetics through iterative synthesis and testing. |
| Preclinical Testing | Test leads in vitro and in vivo for ADMET properties; assess safety and dosage in animal models. |
| Clinical Trials | Test in humans (Phases I–III); <10% of drug candidates reach approval. |

Classical Drug Discovery: Canonical Workflow

Systemic Problems of the Traditional Pipeline

The systemic problems of this traditional pipeline are well known:

- Scale of combinatorial space. The theoretical number of drug-like molecules exceeds 10⁶⁰, far beyond any experimental reach.

- Empirical dependency. Each cycle of synthesis–test–redesign depends on physical experiments, making iteration slow and expensive.

- Data fragmentation. Biological, chemical, and clinical data reside in siloed databases, hindering cross-domain learning and knowledge integration.

- Late-stage failures. Toxicity or inefficacy often emerges only during clinical trials, when costs are already sunk.

The cumulative consequence is a high failure rate and rising costs per new molecular entity (NME) exceeding USD 1–2 billion, with timelines spanning 10–15 years. Much of the inefficiency arises from an incomplete understanding of predictive relationships, i.e., how molecular structure translates into biological function and human response. This gap is the space where Machine Learning and generative AI can intervene.

Drug Discovery with AI

In recent years, drug discovery has been reframed as a data-driven inference problem, where molecular behavior is predicted rather than empirically enumerated.

Predictive Modeling

Predictive modeling uses statistical and Machine Learning approaches to approximate complex mappings such as:

- Structure → activity (QSAR: quantitative structure–activity relationships)

- Structure → ADMET properties (ADMET: absorption, distribution, metabolism, excretion, and toxicity)

- Target–ligand interactions

- Genotype → phenotype correlations

The core concept is to derive insights from existing experimental data, such as molecular descriptors, bioassay outcomes, and protein–ligand binding measurements. Model types range from conventional methods, such as random forests and support vector machines (SVMs), to sophisticated deep learning architectures capable of directly examining molecular graphs or three-dimensional conformations.
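A structure-to-activity mapping can be illustrated with a deliberately simple stand-in for the models named above: a similarity-weighted nearest-neighbour predictor over fingerprint bit sets, using Tanimoto similarity. The fingerprints and activity values below are invented for illustration, and k-nearest neighbours is used here only because it fits in a few lines; it is not a method the article prescribes.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two fingerprint bit sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def knn_predict(query, training, k=2):
    """Predict activity as the similarity-weighted mean of the k most similar compounds."""
    scored = sorted(
        ((tanimoto(query, fp), activity) for fp, activity in training),
        reverse=True,
    )[:k]
    total = sum(sim for sim, _ in scored)
    if total == 0:
        # No structural overlap at all: fall back to a plain average.
        return sum(activity for _, activity in scored) / len(scored)
    return sum(sim * activity for sim, activity in scored) / total


# Hypothetical training set: (fingerprint bits, measured activity).
training = [
    (frozenset({1, 2, 3, 4}), 7.5),
    (frozenset({1, 2, 3, 9}), 7.0),
    (frozenset({10, 11, 12}), 4.0),
]
query = frozenset({1, 2, 3, 5})
predicted = knn_predict(query, training)
```

Here the query shares three of five bits with each of the first two compounds (similarity 0.6), so the prediction is their weighted mean, 7.25. Random forests, SVMs, or deep models replace this lookup with a learned function, but the input and output roles stay the same.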

Application Examples

These methods provide continuous, differentiable approximations of physical interactions, allowing for the efficient screening of millions of compounds in silico before experimental validation.

- Graph neural networks (GNNs) treat molecules as graphs of atoms and bonds, learning chemical context without handcrafted features

- 3D convolutional neural networks (3D-CNNs) can operate on voxelized representations of protein–ligand complexes, directly estimating binding affinity from spatial geometry.

Predictive models accelerate early-stage filtering, identifying likely active chemical compounds, toxic liabilities, or metabolic instabilities. They also assist in multi-parameter optimization, where chemists seek to balance potency against ADMET constraints. While no model is infallible, predictive modeling reorders the workflow: instead of “test everything,” researchers “test what the model deems worth testing.”
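The graph view of a molecule mentioned above can be sketched as one round of neighbour aggregation, the basic operation behind message-passing GNNs. This toy version only sums raw features; real GNN layers also apply learned weights and a nonlinearity, which are omitted here. The atom encoding is an assumption chosen for the example.

```python
def message_passing_step(features, bonds):
    """One aggregation round: each atom adds its bonded neighbours' features to its own."""
    neighbors = {i: [] for i in range(len(features))}
    for a, b in bonds:
        neighbors[a].append(b)
        neighbors[b].append(a)
    updated = []
    for i, feat in enumerate(features):
        agg = list(feat)
        for j in neighbors[i]:
            agg = [u + v for u, v in zip(agg, features[j])]
        updated.append(agg)
    return updated


def readout(features):
    """Sum atom vectors into one molecule-level vector (independent of atom order)."""
    return [sum(col) for col in zip(*features)]


# Heavy atoms of ethanol (C-C-O) with one-hot features [is_carbon, is_oxygen].
atoms = [[1, 0], [1, 0], [0, 1]]
bonds = [(0, 1), (1, 2)]
h1 = message_passing_step(atoms, bonds)
molecule_vector = readout(h1)
```

After one step, the central carbon's vector `[2, 1]` already encodes that it is bonded to one carbon and one oxygen, which is how chemical context emerges without handcrafted descriptors.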

Generative AI for Molecular Design

Generative AI extends this paradigm from prediction to creation. Instead of evaluating predefined compounds, it generates novel chemical structures that satisfy predefined constraints. Techniques include:

- Variational Autoencoders (VAEs): learn continuous latent spaces where molecular interpolation is possible.

- Generative Adversarial Networks (GANs): produce molecular graphs that mimic the distribution of known drugs.

- Transformer-based models: trained on SMILES strings (SMILES = Simplified Molecular Input Line Entry System) or 3D coordinates, capable of autoregressively generating syntactically valid molecules.

- Reinforcement Learning (RL): guides molecular generation toward specific objectives such as binding energy, solubility, or patent novelty.

These systems operate in the “chemical latent space,” where optimization is carried out using gradient ascent on predicted reward functions. This approach replaces random trial and error with targeted exploration. Additionally, conditional generative models can focus on specific proteins, disease pathways, or pharmacophores, combining structural bioinformatics and chemistry.

For example, a model can be conditioned on a protein binding pocket (represented as a 3D grid of electrostatic potentials) and tasked with generating ligands predicted to bind with high affinity while maintaining drug-like properties. These molecules are then validated through molecular docking and in silico free energy calculations before being synthesized in the laboratory.
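Optimization in a latent space by gradient ascent on a predicted reward can be sketched as follows. The two-dimensional latent vector and the quadratic reward are purely hypothetical stand-ins for a trained generative model and property predictor; the gradient is estimated numerically to keep the example dependency-free.

```python
def predicted_reward(z):
    """Hypothetical surrogate reward over a 2D latent space; peaks at z = (1.0, -0.5)."""
    return -((z[0] - 1.0) ** 2 + (z[1] + 0.5) ** 2)


def numerical_gradient(f, z, eps=1e-5):
    """Central-difference estimate of the gradient of f at z."""
    grad = []
    for i in range(len(z)):
        up = list(z)
        down = list(z)
        up[i] += eps
        down[i] -= eps
        grad.append((f(up) - f(down)) / (2 * eps))
    return grad


def gradient_ascent(f, z, lr=0.1, steps=100):
    """Move the latent point uphill on the predicted reward."""
    z = list(z)
    for _ in range(steps):
        grad = numerical_gradient(f, z)
        z = [zi + lr * gi for zi, gi in zip(z, grad)]
    return z


z_opt = gradient_ascent(predicted_reward, [0.0, 0.0])
```

In a real pipeline the optimized latent point would be passed through a decoder to obtain a candidate molecule, which then goes on to docking and laboratory validation as described above.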

Human–AI Synergy in the Research Pipeline

Predictive modeling and generative AI do not replace the need for lab experiments; they just narrow down the possibilities. This cuts the number of molecules researchers need to synthesize or test. Instead of relying on trial and error, scientists focus on guiding models: defining goals, checking for biases, and selecting the best candidates to move forward with.

The hybrid workflow can be represented as a feedback loop, as shown in the figure:

This adaptive loop, analogous to active learning, continuously refines the model as new experimental evidence accumulates. Over time, the predictive layer evolves into a reusable knowledge substrate, guiding data-driven decisions across multiple projects.
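The loop described above can be sketched as a simple active-learning routine: score untested candidates, run the most promising experiment, and fold the result back into the record. Everything here is an illustrative assumption, including the stand-in assay function, the nearest-neighbour surrogate model, and the exploration weight.

```python
def run_experiment(x):
    """Hypothetical stand-in for a lab assay: activity peaks at candidate 14."""
    return 100 - (x - 14) ** 2


def predict(x, tested):
    """Surrogate model: reuse the measured activity of the nearest tested candidate."""
    nearest = min(tested, key=lambda t: abs(t - x))
    return tested[nearest]


def uncertainty(x, tested):
    """Crude uncertainty proxy: distance to the nearest tested candidate."""
    return min(abs(t - x) for t in tested)


def active_learning(pool, seed_points, rounds, explore=10):
    """Repeat: score untested candidates, test the best one, update the record."""
    tested = {x: run_experiment(x) for x in seed_points}
    for _ in range(rounds):
        untested = [x for x in pool if x not in tested]
        if not untested:
            break
        # Acquisition score: predicted activity plus a bonus for unexplored regions.
        pick = max(
            untested,
            key=lambda x: predict(x, tested) + explore * uncertainty(x, tested),
        )
        tested[pick] = run_experiment(pick)
    return tested


pool = list(range(21))
tested = active_learning(pool, seed_points=[0], rounds=5)
best = max(tested, key=tested.get)
```

Only six of the 21 candidates are ever "synthesized" in this run, which mirrors the point that the model narrows the experimental search without replacing experiments.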

Conceptual and Technical Constraints

Despite progress, AI-based discovery faces its own conceptual and technical constraints:

- Data quality and bias. Models inherit noise and imbalance from the experimental record.

- Generalization. Predictive accuracy declines when extrapolating to novel chemical space or unseen targets.

- Explainability. The molecular rationale behind predictions is often opaque, which challenges regulatory acceptance.

- Integration with wet-lab practice. Actual acceleration requires seamless data exchange between computational and experimental domains.

The trajectory is clear: predictive modeling and generative AI are reconfiguring the economics and epistemology of drug discovery. They facilitate the exploration of chemical universes once inaccessible, transforming medicinal chemistry into a dialogue between algorithmic inference and empirical verification. The result is the extension of human reasoning into dimensions where intuition alone could not enter.

Material Science

Material science studies the relationship between the structure and properties of materials. It examines how atomic composition, bonding, and microstructure influence mechanical strength, conductivity, and durability. The discipline supports industries such as aerospace, electronics, and energy systems.

The classic approach depends on physical testing and empirical data. Researchers synthesize samples, perform experiments, and adjust compositions in a step-by-step process. This process is accurate but slow and resource-intensive. Each iteration requires laboratory work, specialized equipment, and time-consuming analysis. Rising costs and long validation cycles limit exploration. Many promising compounds remain untested because of limited experimental capacity.

Another obstacle is data fragmentation. Results are often stored in different formats or isolated databases. Reproducibility becomes difficult, and knowledge transfer across projects is weak. These issues create barriers to accelerating innovation in the development of new materials.

Simulation and Data-Driven Design

AI-driven R&D introduces simulation and data-driven design into materials research.

- ML can estimate material behavior before fabrication. GenAI proposes new compositions and microstructures by learning from existing research. This approach reduces the need for repeated physical prototypes, thereby optimizing resource allocation and efficiency.

- Simulation-based discovery improves research productivity, supports better decisions, and opens access to innovative products that were previously out of reach because of cost or time constraints.

- AI-driven simulation can predict performance under stress, heat, or corrosion. It helps teams to simulate complex scenarios and identify patterns in atomic interactions. Researchers can filter out low-potential candidates early, saving time and materials.

Other Sectors

AI-driven R&D is expanding into other high-complexity domains that rely on advanced simulation.

- In nuclear fusion, AI reduces computation cycles by predicting plasma behavior and optimizing magnetic confinement parameters. The same approach supports other resource-intensive fields such as climate modeling and advanced materials.

These projects show how integrating AI can accelerate experiments that were once constrained by laboratory testing.

Classical Product Development Process

Product development in mechanical and aerospace engineering has evolved significantly through digitalization, beginning in the 1980s with the introduction of CAD, CAE, and PLM systems. This transformation moved the process from drafting tables to digital ecosystems. However, it remains constrained by latency, sequential workflows, and local optimization habits.

What is the Canonical Product Development Process?

The canonical product development process follows a well-established logic:

- Conceptual Design. Engineers translate functional requirements into geometric concepts and designs. CAD tools support rapid 3D modeling of assemblies, parametric surfaces, and tolerances. The goal is feasibility and manufacturability, not yet performance optimization.

- Analysis and Simulation. The CAD model serves as the input for CAE analyses, including Finite Element Analysis (FEA) for structural behavior, Computational Fluid Dynamics (CFD) for aerodynamics or heat transfer, and Multibody Dynamics (MBD) for motion analysis. Each simulation discretizes the physical domain into millions of degrees of freedom, governed by PDEs that are solved numerically. This stage produces quantitative evidence for strength, deformation, fatigue life, and flow efficiency, among other properties.

- Iteration and Optimization. Engineers modify the geometry, materials, or boundary conditions based on simulation outcomes. This is an iterative process: each change triggers new meshing, solver runs, and post-processing.

- Integration and Validation. Once subsystems are optimized individually, they are integrated into a complete digital mock-up (DMU) and managed through PLM systems, which track configurations, version histories, and compliance data across the product lifecycle.

The Challenges

The digital chain underpins modern engineering and drives innovation, but it isn’t quick. High-fidelity simulations can take hours or days, needing expert setup, meshing, and monitoring. Engineers sample limited design regions, focusing on plausible variants rather than global optima. Design intuition guides simulations; the process produces data but limits exploration.

Several additional bottlenecks slow the process and stall innovation:

- Computational bottlenecks. High-fidelity CFD or nonlinear FEA models are computationally expensive, and their run times are often incompatible with tight design schedules.

- Sequential dependency. Downstream validation cannot begin until upstream models converge, creating temporal coupling between departments.

- Siloed tools and data formats. CAD, CAE, and PLM systems, although connected, remain distinct silos; feedback from manufacturing or operations is rarely integrated early in the process.

- Manual calibration. Engineers must constantly adjust models to match test data, consuming expert time and introducing subjectivity.

The result is a paradox: despite decades of automation aimed at eliminating time-consuming, repetitive tasks for human researchers, product development remains a slow, iterative process. Its cadence is dictated not by creativity or hardware fabrication speed, but by simulation turnaround time and data interoperability.

Product Development and Artificial Intelligence

Artificial Intelligence, specifically predictive modeling based on deep learning and generative design algorithms, is redefining engineering design. AI turns simulation from a solver into a learner that generalizes from prior analyses. Instead of running a new CFD case for each geometry, AI models learn the mapping between geometry and performance, which enables near-instant predictions and design-space exploration at scale. Read how Subaru leveraged AI for automotive development.

Predictions with Deep Learning

ML can approximate the outputs of high-fidelity simulations. The idea is to train a model on existing simulation databases so that it learns the mapping:

f(geometry, boundary conditions) → performance metrics

Once trained, this surrogate can replace or pre-screen traditional CAE runs.

In practice:

- Input representation. Geometries can be encoded as structured meshes (for CNNs), point clouds, signed distance fields, or graph-based representations of topology.

- Output prediction. Models predict pressure fields, stress distributions, temperature maps, or scalar quantities such as drag, lift, or displacement.

- Training data. Generated from prior simulation campaigns or parametric studies.

In aerodynamics, a 3D convolutional neural network learns to map wing geometries to lift–drag coefficients by training on thousands of CFD results, enabling predictions in milliseconds instead of hours. Similarly, graph neural networks model stress propagation in structures, acting as “instant solvers.”

Predictive models thus function as digital surrogates, orders of magnitude faster yet sufficiently accurate for preliminary optimization, reducing the resource-intensive nature of traditional methods. Engineers can evaluate thousands of design variants, visualize response surfaces, and identify regions worth detailed CAE analysis. The workflow becomes multi-fidelity: AI models screen the design space, while physics solvers refine the promising candidates.
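The multi-fidelity workflow described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production method: `high_fidelity_solver` is a hypothetical analytic stand-in for an expensive CAE run, the two normalized design parameters are invented for the example, and a k-nearest-neighbor average is swapped in for the deep-learning surrogate discussed in the text.

```python
import random

random.seed(0)  # fixed seed so the sketch is reproducible


def high_fidelity_solver(design):
    """Stand-in for an expensive CAE run (hypothetical metric, lower is better)."""
    x, y = design
    return (x - 0.3) ** 2 + (y - 0.7) ** 2


def fit_knn_surrogate(archive, k=3):
    """Build a predictor that averages the k nearest archived simulation results."""
    def predict(design):
        ranked = sorted(
            archive,
            key=lambda rec: sum((a - b) ** 2 for a, b in zip(rec[0], design)),
        )
        nearest = ranked[:k]
        return sum(value for _, value in nearest) / len(nearest)
    return predict


def random_design():
    """Two normalized design parameters in [0, 1] (hypothetical)."""
    return (random.random(), random.random())


# 1. Archive of prior simulation campaigns: (design, result) pairs.
archive = [(d, high_fidelity_solver(d)) for d in (random_design() for _ in range(200))]

# 2. Cheap surrogate built from the archive.
surrogate = fit_knn_surrogate(archive)

# 3. Low-fidelity pass: screen many candidates with the surrogate only.
candidates = [random_design() for _ in range(2000)]
shortlist = sorted(candidates, key=surrogate)[:10]

# 4. High-fidelity pass: spend solver time only on the shortlist.
verified = [(d, high_fidelity_solver(d)) for d in shortlist]
best_design, best_value = min(verified, key=lambda rec: rec[1])

print(f"solver runs after screening: {len(verified)} for {len(candidates)} candidates")
print(f"best verified design: {best_design}, metric: {best_value:.4f}")
```

The design choice to note is the split of effort: the surrogate ranks all 2,000 candidates, while the solver is called only for the ten that survive screening.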

This shift changes the engineer’s role from manually simulating to orchestrating data generation, model training, and validation, thereby creating an adaptive system that improves with the addition of new data.

GenAI for Design Exploration

While predictive models estimate performance, GenAI proposes entirely new designs.

These models, often based on variational autoencoders (VAEs), generative adversarial networks (GANs), or transformer architectures, operate in latent spaces of geometry, topology, or material distribution. They can synthesize new 3D forms optimized for specific objectives, such as weight reduction, aerodynamic efficiency, or natural frequency constraints.

Generative design typically involves:

- Defining boundary conditions and performance criteria.

- Encoding feasible geometries into a latent space.

- Using generative models to explore that space under optimization guidance (e.g., reinforcement learning or gradient-based search).

- Evaluating generated designs through predictive or high-fidelity models.

The advantage is breadth: thousands of viable designs can be explored autonomously, revealing configurations beyond human intuition.

The model acts not as a search algorithm but as a creative partner, proposing unconventional topologies (often biomimetic or non-intuitive) that still satisfy the constraints.
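The four steps of generative design listed above can be sketched as a small loop. Everything specific here is an assumption made for illustration: the `decode` function is a hand-written stand-in for a trained VAE or GAN decoder, the beam cross-section and the stiffness target `I_MIN` are hypothetical, the closed-form inertia replaces a predictive or high-fidelity model, and a simple elitist random search is swapped in for reinforcement learning or gradient-based guidance.

```python
import math
import random

random.seed(1)

# Step 1: boundary conditions and performance criteria (hypothetical values).
I_MIN = 200_000.0  # required second moment of area in mm^4 (stiffness proxy)


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def decode(z):
    """Step 2 stand-in: map a 2-D latent vector to a rectangular section (b, h) in mm.

    A trained generative decoder would go here; this map is an assumption.
    """
    width = 10.0 + 40.0 * sigmoid(z[0])   # between 10 and 50 mm
    height = 10.0 + 90.0 * sigmoid(z[1])  # between 10 and 100 mm
    return width, height


def inertia(design):
    """Step 4 stand-in: closed-form b*h^3/12 instead of a surrogate or FEA run."""
    b, h = design
    return b * h ** 3 / 12.0


def area(design):
    """Cross-section area in mm^2, used as a proxy for weight."""
    b, h = design
    return b * h


def score(z):
    """Objective: minimize area; designs below the stiffness target are rejected."""
    design = decode(z)
    return area(design) if inertia(design) >= I_MIN else math.inf


# Step 3: explore the latent space, keeping a trial only if it improves the score.
best_z = (0.0, 0.0)
initial_area = score(best_z)
best_score = initial_area
for _ in range(500):
    trial = tuple(zi + random.gauss(0.0, 0.3) for zi in best_z)
    trial_score = score(trial)
    if trial_score < best_score:
        best_z, best_score = trial, trial_score

best_design = decode(best_z)
print(f"initial area: {initial_area:.0f} mm^2, final area: {best_score:.0f} mm^2")
print(f"final section: b={best_design[0]:.1f} mm, h={best_design[1]:.1f} mm")
```

Because infeasible trials score infinity and only improvements are accepted, the final design always meets the stiffness target while being no heavier than the starting point.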

GenAI Examples in Design

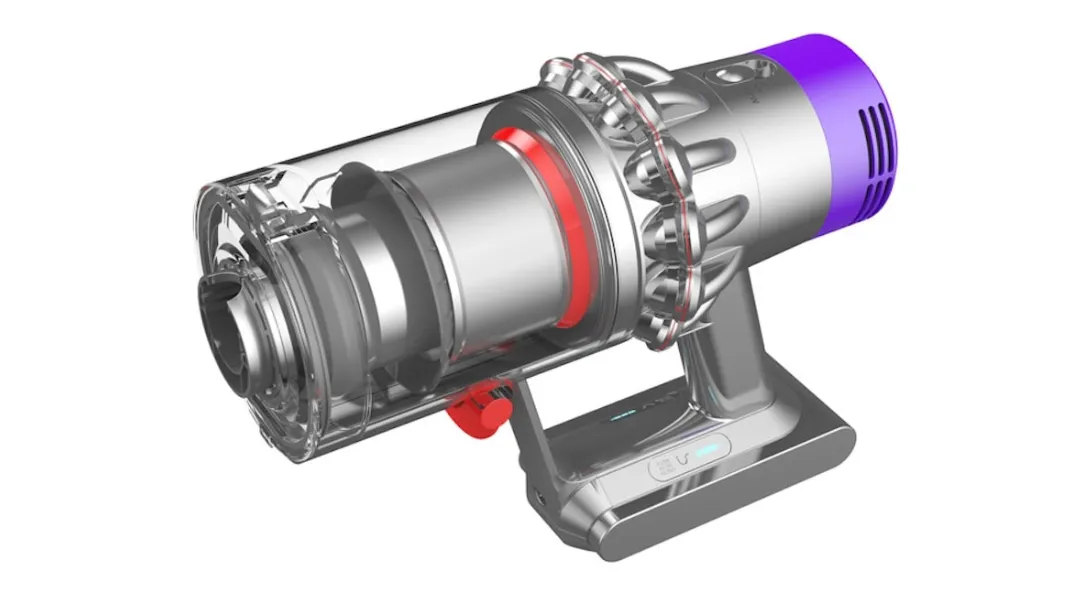

Numerous industries, from aerospace to automotive, leverage AI to enhance product development:

- In aerospace structural design, generative models produce lightweight truss structures tailored to specific load paths, which are later refined through topology optimization.

- In turbomachinery, generative models propose novel blade shapes that strike a balance between aerodynamic and thermal performance.

- In automotive engineering, they assist in designing crashworthy frames that meet stiffness and energy absorption targets simultaneously.

Read more on use cases in generative design.

Integration with PLM and Real-Time Feedback

Once predictive and generative systems are embedded within the PLM ecosystem, product development becomes continuous and data-driven. Design, simulation, and validation form a feedback loop rather than a linear sequence:

- Digital twins continuously collect operational data from sensors.

- Predictive models retrain using this in-service data, updating expected performance envelopes.

- Generative models exploit updated knowledge to suggest incremental design improvements for future iterations.

This feedback architecture transforms PLM from a document repository into a knowledge-learning system. Each iteration enriches the model, reducing design cycle times and enabling concurrent engineering across disciplines.

Obstacles and Critical Considerations

AI-driven design is not without limits. Key technical and epistemic challenges include:

- Training data cost. High-quality simulation data are expensive to generate, especially for 3D transient or multiphysics phenomena.

- Generalization across physics. A model trained on one flow regime or structural configuration may fail to perform well on another.

- Interpretability. Unlike deterministic solvers, AI models can lack traceability of error sources, raising certification challenges in aerospace and safety-critical domains.

- Human oversight. Human researchers must validate AI-generated designs for manufacturability, materials compatibility, and compliance with standards.

Yet, the convergence of physics-based and data-driven models (sometimes referred to as hybrid or physics-informed Machine Learning) is addressing these concerns. By directly embedding physical laws into neural architectures (e.g., through loss functions that enforce conservation equations), AI models achieve both speed and physical plausibility.
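A physics-informed loss of this kind can be illustrated with a deliberately small example. The sketch below assumes a one-dimensional steady heat-conduction problem without sources, so the governing equation is d²T/dx² = 0; the sensor values, grid size, and `weight` are hypothetical. In a real physics-informed network the residual would typically be computed by automatic differentiation of the network output, whereas here a finite-difference stencil on a fixed grid stands in for it.

```python
import math


def data_loss(predicted, sensors):
    """Mean squared mismatch at the grid indices where measurements exist."""
    return sum((predicted[i] - t) ** 2 for i, t in sensors.items()) / len(sensors)


def physics_loss(predicted, dx):
    """Mean squared residual of d2T/dx2 = 0, using central finite differences."""
    residuals = [
        (predicted[i - 1] - 2.0 * predicted[i] + predicted[i + 1]) / dx ** 2
        for i in range(1, len(predicted) - 1)
    ]
    return sum(r ** 2 for r in residuals) / len(residuals)


def total_loss(predicted, sensors, dx, weight=1e-4):
    """Composite objective: fit the data and respect the governing equation."""
    return data_loss(predicted, sensors) + weight * physics_loss(predicted, dx)


n = 21
dx = 1.0 / (n - 1)
xs = [i / (n - 1) for i in range(n)]
sensors = {0: 100.0, n - 1: 20.0}  # hypothetical end-point temperatures

# Two candidate temperature fields that both match the sensors.
linear = [100.0 - 80.0 * x for x in xs]
wavy = [100.0 - 80.0 * x + 5.0 * math.sin(2.0 * math.pi * x) for x in xs]

for name, field in (("linear", linear), ("wavy", wavy)):
    print(name, data_loss(field, sensors), physics_loss(field, dx), total_loss(field, sensors, dx))
```

Both candidates fit the two measurements, so a purely data-driven loss cannot tell them apart; only the physics term penalizes the oscillating field that violates the conservation equation.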

Conceptual Shift in Engineering Design

Ultimately, AI introduces a conceptual inversion: in the classical paradigm, simulations follow designs; in the AI-augmented paradigm, designs emerge from simulations that have learned to anticipate results. Human researchers no longer navigate a pre-defined design space but co-create it with algorithms capable of inferring functional patterns across multiple physics and scales.

Engineering shifts from deterministic analysis to guided exploration within learned manifolds where geometry, material, and performance coevolve. The CAE latency bottleneck (simulation turnaround of hours to days) gives way to a regime in which predictive fidelity and creativity depend more on the quality of the questions asked than on computational power.

How to Implement AI in R&D Teams

Research and development need structure before scale, especially when implementing AI. Implementation works best when guided by strong data foundations, transparent governance, and multidisciplinary teams trained in their respective fields.

Data, Tooling, and Governance

Research and Development data is often scattered across instruments, experiments, and legacy systems. The first step is to organize it. Clean, labeled, and accessible data enables reliable models. Tooling should integrate simulation, data analytics, and GenAI platforms within a unified workflow.

Governance defines how data is shared, validated, and secured. Research models must meet reproducibility and compliance requirements. Teams should establish version control for datasets and models, apply metadata standards, and ensure transparency in outputs from AI tools. Good governance transforms experimentation into a repeatable process, rather than a series of isolated trials.
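One lightweight way to make dataset and model versioning concrete is to fingerprint the training data and store the digest alongside the model. The sketch below is an assumption-laden illustration, not a prescribed tool: the record fields, the model name, and the `register_model` helper are hypothetical, and a dedicated data-versioning system would normally replace this in practice.

```python
import hashlib
import json


def fingerprint(records):
    """Return a SHA-256 digest of the records in a canonical JSON form."""
    canonical = json.dumps(records, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def register_model(name, training_records, hyperparameters):
    """Create a lineage entry tying a model to the exact data it was trained on."""
    return {
        "model": name,
        "dataset_sha256": fingerprint(training_records),
        "n_records": len(training_records),
        "hyperparameters": hyperparameters,
    }


# Hypothetical experiment records; field names are illustrative only.
records_v1 = [
    {"sample_id": "A-001", "temperature_c": 450, "yield_strength_mpa": 312.5},
    {"sample_id": "A-002", "temperature_c": 500, "yield_strength_mpa": 298.1},
]
records_v2 = records_v1 + [
    {"sample_id": "A-003", "temperature_c": 550, "yield_strength_mpa": 281.7},
]

entry_v1 = register_model("strength-surrogate", records_v1, {"k": 3})
entry_v2 = register_model("strength-surrogate", records_v2, {"k": 3})
print(json.dumps(entry_v1, indent=2))
print("dataset changed:", entry_v1["dataset_sha256"] != entry_v2["dataset_sha256"])
```

Sorting keys before hashing means the digest depends on the content of the records rather than on field order, so any later result can be traced back to the exact dataset version that produced it.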

People, Skills, and Change Management

AI in R&D changes roles but does not eliminate expertise. The transition needs active support from management:

- Upskilling programs should focus on data literacy, model interpretation, and prompt design.

- Cross-functional teams work best. Domain experts define hypotheses, data scientists build models, and project managers align these efforts with business goals.

- Early pilot projects demonstrate quick wins and create internal advocates.

- Leadership commitment ensures long-term adoption rather than short-term experimentation.

Read more on collaborative design with AI.

Strategy and Measurement

Strategy aligns AI efforts with business impact. Clear metrics transform experimentation into measurable performance.

How to Measure ROI

ROI in AI R&D depends on time saved, discovery rate, and model accuracy. Instead of counting physical prototypes, teams measure the number of validated ideas per month or the time reduction from hypothesis to proof. A well-governed AI project typically reduces iteration time by 20–40%.
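The two metrics named above are simple ratios, as the following sketch shows. All figures are hypothetical and chosen only to make the arithmetic easy to follow; the function names are likewise illustrative.

```python
def time_reduction(baseline_weeks, ai_weeks):
    """Fractional reduction in time from hypothesis to proof."""
    return (baseline_weeks - ai_weeks) / baseline_weeks


def validated_per_month(validated_ideas, months):
    """Average number of validated ideas per month."""
    return validated_ideas / months


# Hypothetical figures for one team over two six-month periods.
baseline_cycle_weeks = 10.0
ai_cycle_weeks = 7.0
reduction = time_reduction(baseline_cycle_weeks, ai_cycle_weeks)

rate_before = validated_per_month(6, 6)
rate_after = validated_per_month(15, 6)

print(f"iteration time reduction: {reduction:.0%}")
print(f"validated ideas per month: {rate_before:.1f} before, {rate_after:.1f} after")
```

With these assumed inputs, a cycle that shrinks from ten weeks to seven is a 30% reduction, which sits inside the 20–40% range quoted above.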

Common Pitfalls and the 30% Rule in AI

AI rarely replaces complete workflows. The “30% rule” suggests that early AI applications automate or enhance roughly a third of R&D activities:

- data preparation

- hypothesis screening

- routine simulation

Beyond that point, returns flatten unless processes are re-engineered. The 30% rule therefore describes what happens when AI is retrofitted into existing workflows.

However, an “AI-first” design supports near-total automation in areas such as screening, testing, and data generation. AI-first workflows let AI operate at scale, with humans focusing on framing and validation. For well-defined tasks, a 100% automation target is increasingly common.

Market Trends and Investment Notes

The AI market is estimated to be approximately $757 billion in 2025 and is projected to reach around $3,680 billion by 2034. Market expansion supports increased application in R&D sectors. The deep learning segment contributed the largest AI market share of 36.9% in 2023 (figures according to GlobeNewswire, November 7, 2024, quoting Precedence Research).
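The growth rate implied by these two figures can be checked with the standard compound annual growth rate formula. The sketch below uses only the numbers quoted above; the nine-year horizon (2025 to 2034) is read off the stated dates, and the source's own reported growth rate, if any, may be computed on a different basis.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Market size in billions of US dollars, as quoted in the text.
implied_growth = cagr(757.0, 3680.0, 2034 - 2025)
print(f"implied annual growth: {implied_growth:.1%}")
```

The result is a little over 19% per year, which gives a sense of the pace behind the headline numbers.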

In turn, the application of AI tools in Research and Development is becoming a new competitive axis for economic growth. Capital is following this shift, with specialized funds targeting infrastructure, models, and domain-specific AI.

Top AI Stocks to Monitor Now

Investors follow firms enabling industrial and scientific AI adoption:

- Nvidia (GPUs),

- ASML (semiconductor lithography),

- Microsoft and Amazon (AI cloud platforms),

- Specialized players who integrate AI into design and simulation.

These companies are at the forefront of the next wave of R&D automation.

See how to integrate Engineering AI with NVIDIA Omniverse Blueprint.

What Do Stakeholders Ask?

Executives and investors ask: how soon can AI deliver measurable research acceleration? Regulators ask how results are validated. Customers ask about transparency and intellectual property. Teams that anticipate these questions build trust early and gain a market advantage.

Future Trends - Conclusion

AI is transforming R&D from an experimental to an analytical discipline.

The convergence of simulation, data, and generative models will define the next decade of discovery: future R&D workflows will combine virtual design, real-time experimentation, and autonomous optimization.

- Predictive models will pre-screen thousands of chemical or structural variants before the prototype is even created.

- Laboratory automation will close the loop between simulation and fabrication, enabling experiments to continuously feed models.

- Digital twins of materials, drugs, and devices will evolve into learning systems with immense potential. Instead of describing what happens, those AI-driven systems will propose what to test next. Researchers will shift from manual trial design to hypothesis orchestration.

- Multimodal foundation models will integrate text, image, and numerical data in a single reasoning framework to accelerate innovation. Models will summarize literature, interpret microscopy images, and predict mechanical performance within a single pipeline. AI copilots for scientists will become as common as CAD tools are now for engineers.

- The boundary between design, simulation, and experimentation will become increasingly blurred. Companies with structured data, solid governance, and trained personnel will lead the way. Those who treat AI as a generic automation layer will lag.

- The next wave is about reasoning, i.e., embedding scientific intuition into digital systems that learn and adapt. AI R&D will not replace curiosity, but it will make it scale.

FAQ

What are the best practices for managing the AI R&D process?

Successful AI R&D relies on structure and iteration. Data needs curation, versioning, and traceability. Governance should clarify ownership of datasets, models, and outcomes. Teams start with small pilots to prove value before scaling. Continuous validation ensures models remain accurate as data evolves. The best R&D combines domain knowledge, explainability, and precise documentation for reproducibility.

What are the key AI use cases in healthcare R&D?

In healthcare, AI supports drug discovery, medical imaging, and predictive diagnostics. Models analyze biological data, generate molecule candidates, and detect anomalies in scans faster than traditional review.

What are the key AI use cases in finance?

In finance, AI drives risk modeling, fraud detection, and automated reporting. Predictive analytics improves credit scoring and portfolio management. Finance uses AI to handle vast, high-dimensional datasets where patterns exceed human detection capabilities, which can provide a significant competitive advantage in real-time decision systems such as algorithmic trading.

How can companies measure the ROI of an AI project in R&D?

ROI can be measured through time reduction, model accuracy, and discovery yield. A strong indicator is the number of validated insights or prototypes generated per project cycle. Other metrics include faster simulation runs, lower physical testing costs, or shorter time-to-market. ROI improves when AI outputs are integrated into workflows, rather than isolated as experiments.

What is the ideal organizational structure for an AI R&D team?

A strong R&D team blends scientific, technical, and managerial skills, with data engineers, domain experts, and AI specialists working in quick, iterative cycles. A project lead aligns tech with business, ensuring governance, compliance, and security. Small, independent teams with clear roles foster innovation through agility and accountability.

How can generative AI accelerate innovation in R&D?

GenAI accelerates idea creation, design, and experimentation, and supports data-driven decision-making. It produces candidate molecules, materials, or geometries based on specific goals, reducing the number of manual trials. It also generates synthetic data to fill gaps, which lowers the need for costly tests.

What are the main risks of adopting AI in R&D?

The main risks include data quality issues, overfitting, interpretability concerns, technical debt, and overestimating automation benefits. Poor datasets mislead models and produce unreliable results. Lack of explainability delays regulatory approval and team adoption. Deploying models without monitoring or version control increases technical debt.

Appendix - What is the Basic Difference Between AI and Human Reasoning?

AI computes patterns from data, bound by statistical inference.

Humans deduce from principles and flexibly integrate reasoning modes.

AI Reasoning

- Inductive: Infers general rules from specific data, e.g., from observed cases P(a₁) ∧ Q(a₁), …, P(aₙ) ∧ Q(aₙ) it conjectures ∀x(P(x) → Q(x)).

- Relies on statistical patterns in datasets, often via ML (e.g., neural networks).

- Predicts outcomes based on probabilities, not explicit logical rules.

- Limited by training data and lacks contextual flexibility.

Human Reasoning

- Deductive: Derives specific conclusions from general premises, e.g., ∀x(P(x) → Q(x)), P(a) ⊢ Q(a).

- Example: “All swans are white; this is a swan; therefore, it’s white.”

- Combines induction and deduction, adapting across domains with intuition.

- Reasons beyond data, using principles and creative insight.
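The deductive schema ∀x(P(x) → Q(x)), P(a) ⊢ Q(a) quoted in the list above can be stated and checked mechanically. The following Lean 4 snippet is a small illustration; the names `P`, `Q`, and `a` simply mirror the symbols in the formula.

```lean
-- From "every x with P also has Q" and "a has P", conclude "a has Q".
example {α : Type} (P Q : α → Prop) (a : α)
    (h : ∀ x, P x → Q x) (hp : P a) : Q a :=
  h a hp
```

The proof term applies the general rule `h` to the specific individual `a` and its premise `hp`, which is exactly the step from general premise to specific conclusion.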

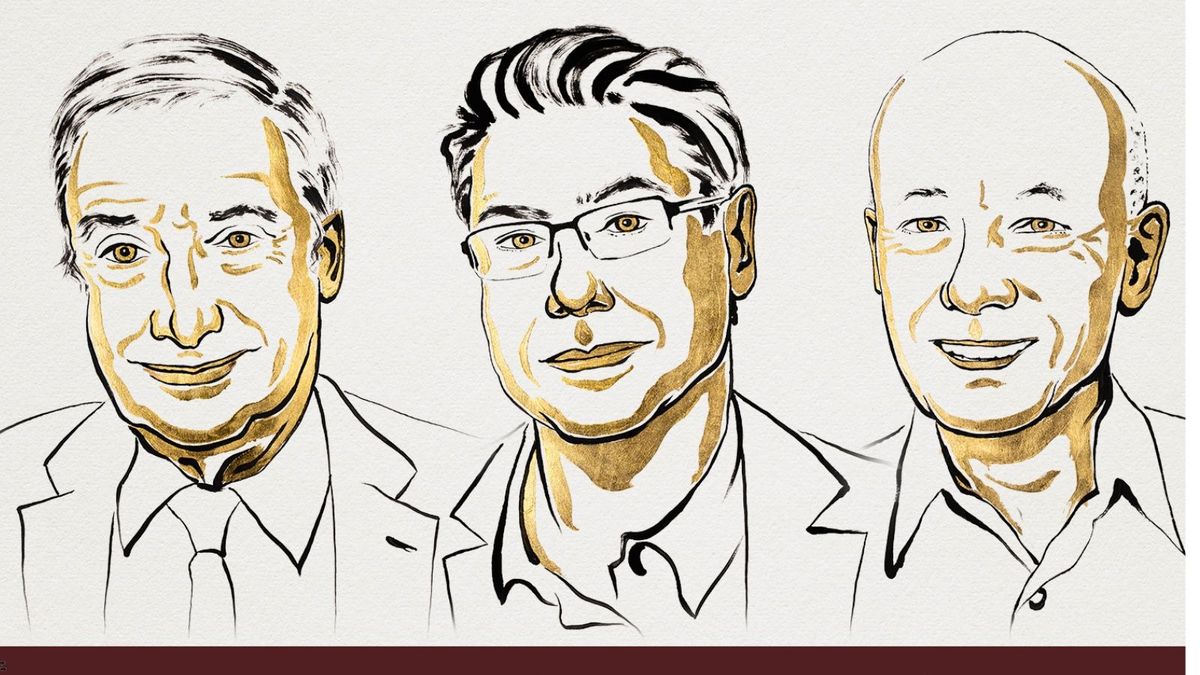

Appendix - 2025 Nobel Prize in Economic Sciences: Innovation, Growth & Creative Destruction

In 2025, the Nobel Prize in Economic Sciences was awarded to Joel Mokyr, Philippe Aghion, and Peter Howitt for their work on how technological innovation drives economic growth.

- Mokyr received half the prize for showing that sustained growth requires both practical and propositional knowledge, and that the two must reinforce each other.

- Aghion and Howitt shared the other half for their theory of creative destruction, formalized in 1992, which explains how companies invest in better methods and products, displacing older firms and fostering innovation and growth.

Mokyr emphasizes the importance of societal openness, institutional support, and overcoming resistance, addressing challenges such as governance, regulation, investment, and sharing benefits. The creative destruction framework illustrates that innovation replaces existing methods, thereby risking disruption. AI in R&D accelerates discovery and obsolescence, increasing the need for effective policies and strategies.

Quoted Reports from the Introduction

(1) McKinsey & Company, The Next Innovation Revolution Powered by AI (2024).

(2) BCG, AI Value Realization Survey (2025).

(3) PwC, AI Predictions Report (2024).

(4) Deloitte, Measuring the Return from Pharmaceutical Innovation (2024).

Further Bibliography (Textbooks & Publications)

Goodfellow, I., Bengio, Y., & Courville, A. (2016). Deep Learning. MIT Press. This foundational text covers deep learning models, including neural network architectures (e.g., CNNs, RNNs) and their mathematical underpinnings, as discussed in the article’s sections on ML and Deep Learning in R&D (e.g., 2.4–2.6).

Russell, S., & Norvig, P. (2020). Artificial Intelligence: A Modern Approach (4th ed.). Pearson. Encyclopedic resource on AI, including ML, computer vision, and AI algorithms, pertinent to the article’s discussion of AI’s integration into research processes (e.g., 1.3, 2.8).

Topol, E. J. (2019). Deep Medicine: How Artificial Intelligence Can Make Healthcare Human Again. Basic Books. Focuses on AI’s role in healthcare, aligning with the article’s sections on the biopharmaceutical industry and predictive analytics (e.g., sections 3 and 4).

Ashby, M. F., & Johnson, K. (2014). Materials and Design: The Art and Science of Material Selection in Product Design (3rd ed.). Butterworth-Heinemann.

Chollet, F. (2021). Deep Learning with Python (2nd ed.). Manning Publications.

Davenport, T. H., & Ronanki, R. (2018). Artificial Intelligence for the Real World: Don’t Start with Moon Shots. Harvard Business Review Press.

Sutton, R. S., & Barto, A. G. (2018). Reinforcement Learning: An Introduction (2nd ed.). MIT Press.

Ulrich, K. T., & Eppinger, S. D. (2020). Product Design and Development (7th ed.). McGraw-Hill Education.

Daugherty, P. R., & Wilson, H. J. (2018). Human + Machine: Reimagining Work in the Age of AI. Harvard Business Review Press. It examines the synergy between human expertise and AI, particularly in the context of human–AI collaboration in R&D and the role of human researchers (e.g., Chapters 4.4 and 8.2).