AI in Civil Engineering: Applications & Advancements

Artificial intelligence is fundamentally altering civil engineering practice. AI applications augment physics-based simulation tools, such as Finite Element Analysis (FEA), allowing civil engineers to develop predictive models and extract insights from decades of historical project data that would otherwise remain hidden in archives.

The real advancement for civil engineering firms is not simply “automation”; it is AI’s ability to predict the KPIs that matter to civil engineers. This predictive capability comes from Machine Learning and Deep Learning models trained on historical data, including failure cases. Such models can, for instance, identify structural vulnerabilities months before traditional inspection cycles would catch them. On critical civil engineering projects, this head start gives project managers time to prevent failures and save lives.

Below, we explore the full spectrum of AI applications in structural engineering and smart city development, covering where AI stands today, how it drives design and decision-making, and what comes next for data-driven infrastructure:

- Current State of AI Implementation

- Core Application Domains

- Technical Foundations

- Key Applications in Practice

- Computational Fluid Dynamics Applications

- How is AI Helping Project Management?

- Smart Cities Development

- Technology Alignment

- Ethical Considerations

- Future Directions

Current State of AI Implementation

An assessment of Artificial Intelligence deployment across the civil engineering sector reveals significant variability:

- Design Phase: Generative design now routinely explores solution spaces containing thousands of viable configurations. The MX3D bridge in Amsterdam exemplifies this approach: AI-driven topology optimization reduced material usage by 30% while maintaining structural performance under dynamic loading. Crucially, sensor networks embedded during fabrication create a digital twin that continuously validates design assumptions against real-world behavior.

- Regulatory Reality: Despite technological capability, building codes keep the civil engineer’s judgment as the final authority. Artificial Intelligence generates candidates, while licensed professionals verify compliance. This human-in-the-loop requirement isn’t a temporary constraint; it reflects the profession’s accountability structure.

- Performance Envelope: Current Artificial Intelligence excels at pattern recognition within its training distribution but struggles with novel scenarios. A neural network trained on temperate-climate structures will underperform when applied to arctic conditions unless the training dataset explicitly includes similar environments.

Core Application Domains

Modern learning methods are becoming part of daily workflows, with a reach that now spans from material behavior to the management of entire assets. Buildings, bridges, and networks are no longer static records but evolving sources of information. This chapter highlights where these approaches deliver measurable improvements, from risk assessment and pattern recognition in inspections to predictive modeling across design, execution, and operation.

Evolution from Heuristics to Learning Systems

Early “AI” in civil engineering consisted of rule-based expert systems that codified the decision-making processes of senior civil engineers. These systems failed when confronted with edge cases outside their programmed logic.

Modern approaches transition from expert-defined logic to outcome-based modeling.

For instance, a neural network learns which crack patterns precede spalling not from coded heuristics, but from thousands of labeled inspection images. This data-driven approach captures subtleties that resist explicit formulation.

In the 1990s, AI was limited to isolated optimization tasks; today’s integrated workflows process continuous data streams from IoT sensors, satellite imagery, and management platforms. The result is a shift from reactive (after-the-fact) to predictive maintenance.

Data Science

This discipline provides the methodological backbone for predictive models and AI applications in our field. It synthesizes statistical inference, computational methods, and domain expertise to transform raw measurements into informed decisions for civil engineers, as summarized in the table below.

Machine Learning: Pattern Recognition at Scale

Machine learning uncovers statistical relationships without requiring explicit programming of the rules. For civil engineers, this is particularly valuable when working with systems that involve numerous interacting variables, exhibit nonlinear responses that are not proportional or predictable in simple terms, and cannot be fully captured through exact analytical formulas.

- Practical Implementation

- An ML model trained on vibration response from healthy bridges learns the nominal frequency spectrum. When deployed on new structures, deviations from this learned baseline flag potential damage. The engineer doesn’t program “if frequency drops by X%, suspect cracking”: the model simply infers this relationship from measured responses.

- Requirements

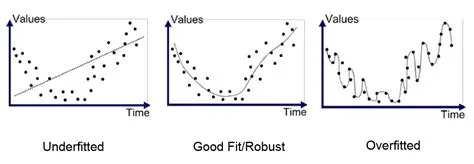

- Practical ML demands substantial, high-quality datasets. Training a crack detection model requires thousands of labeled images spanning different material mixes, ages, and loading conditions. With insufficient data, models memorize the training examples rather than learning generalizable features; this problem is called overfitting (see figure).

- Human Judgment Remains Critical

- Machine Learning models predict; they don’t explain the mechanism. When a model flags unusual foundation settlement, the engineer must still diagnose whether the cause is consolidation, poor drainage, or structural overload. The model accelerates detection, but it doesn’t replace geotechnical analysis.

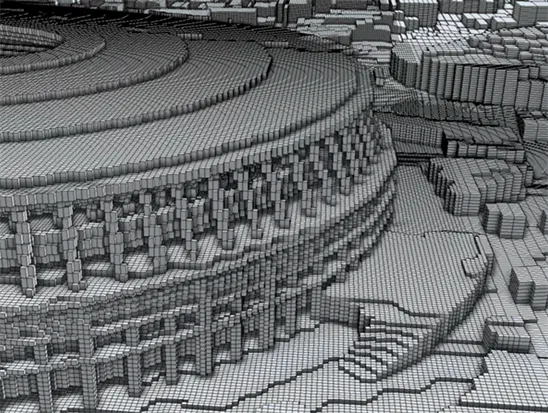
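The baseline-deviation idea from the Practical Implementation item can be sketched in a few lines. This is a minimal illustration, not a production structural health monitoring method: it tracks only the dominant frequency of each record (via an FFT), learns its mean and spread from records assumed to be healthy, and flags a new record whose z-score exceeds a threshold. The 3-sigma threshold, the synthetic 2 Hz signals, and all function names are assumptions for illustration; real deployments also have to compensate for temperature and other environmental effects on natural frequencies.

```python
import numpy as np

def dominant_frequency(signal, fs):
    """Frequency (Hz) of the largest non-DC peak in the amplitude spectrum."""
    x = np.asarray(signal, dtype=float)
    spectrum = np.abs(np.fft.rfft(x - x.mean()))
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    spectrum[0] = 0.0  # ignore the DC component
    return freqs[np.argmax(spectrum)]

def fit_baseline(healthy_records, fs):
    """Mean and standard deviation of the dominant frequency over healthy records."""
    f = np.array([dominant_frequency(r, fs) for r in healthy_records])
    return f.mean(), max(f.std(ddof=1), 1e-6)  # floor avoids division by zero

def is_anomalous(record, fs, baseline, z_threshold=3.0):
    """Flag a record whose dominant frequency deviates from the learned baseline."""
    mean, std = baseline
    z = (dominant_frequency(record, fs) - mean) / std
    return bool(abs(z) > z_threshold), float(z)

# Usage with synthetic data (hypothetical values): healthy spans vibrate near 2.0 Hz
rng = np.random.default_rng(0)
fs = 100.0                          # sampling rate, Hz
t = np.arange(int(200 * fs)) / fs   # 200 s records -> 0.005 Hz frequency resolution

def synthetic_record(f_hz):
    return np.sin(2 * np.pi * f_hz * t) + 0.2 * rng.standard_normal(t.size)

baseline = fit_baseline([synthetic_record(f) for f in rng.normal(2.0, 0.02, 20)], fs)
print(is_anomalous(synthetic_record(1.80), fs, baseline))  # frequency drop
print(is_anomalous(synthetic_record(2.00), fs, baseline))
```

Note that nothing in the code encodes "if frequency drops by X%": the threshold is expressed in units of the spread observed on healthy structures.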

Deep Learning: Neural Networks for Complex Patterns

Deep learning employs artificial neural networks with multiple processing layers to learn hierarchical representations. Each layer extracts increasingly abstract features: early layers might detect edges in images, middle layers recognize textures, and deeper layers identify crack patterns.
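To make the “early layers detect edges” idea concrete, the sketch below applies a single hand-crafted 3×3 Sobel kernel to a tiny synthetic image containing a dark vertical crack. In a CNN, first-layer filters are learned from data rather than specified by hand, but they often end up resembling oriented edge detectors like this one; the image and kernel here are illustrative assumptions. Like most deep learning frameworks, the function computes a cross-correlation (no kernel flip), even though the layer is called “convolutional”.

```python
import numpy as np

def correlate2d_valid(image, kernel):
    """Slide the kernel over the image (no padding, stride 1), as a conv layer does."""
    kh, kw = kernel.shape
    h, w = image.shape
    out = np.empty((h - kh + 1, w - kw + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# Horizontal-gradient (Sobel) kernel: responds to vertical edges
SOBEL_X = np.array([[-1.0, 0.0, 1.0],
                    [-2.0, 0.0, 2.0],
                    [-1.0, 0.0, 1.0]])

# Synthetic 8x8 grayscale patch: uniform bright concrete, dark crack in column 4
patch = np.ones((8, 8))
patch[:, 4] = 0.0

feature_map = np.abs(correlate2d_valid(patch, SOBEL_X))
print(feature_map[0])  # -> [0. 0. 4. 0. 4. 0.]
```

The feature map is zero over uniform concrete and peaks on both sides of the crack; deeper layers would combine many such maps into texture and crack-pattern detectors.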

- Architectural Considerations: Convolutional neural networks (CNNs) excel at processing spatial information, such as images and finite element meshes. Recurrent neural networks (RNNs) and transformers handle sequential information from structural monitoring time series. Graph neural networks show promise for analyzing structural topologies where connections between elements matter as much as the elements themselves.

- Computational Requirements: Training deep networks demands significant GPU resources. Training a high-accuracy damage detection model may require days on high-end hardware. Inference (applying the trained model) is far cheaper, often running on edge devices at building sites.

- Application Scope: Deep learning is most effective where pattern recognition from raw inputs is required and mechanistic models are impractical. Examples include automated visual inspection, load forecasting from historical traffic patterns, and surrogate modeling of computationally expensive simulations.

- Limitations: Deep networks function as “black boxes,” making it difficult to understand why a model makes a specific prediction. For critical decisions, this opacity creates regulatory and liability challenges. Techniques like attention mechanisms and gradient-based attribution methods provide partial explanations but don’t fully resolve the interpretability problem.

Computer Vision and Immersive Technologies

Computer Vision (CV) algorithms transform visual representations into quantitative information:

- Defect detection: Trained CNNs achieve 95%+ accuracy identifying concrete spalling, steel corrosion, and pavement cracking from drone imagery, matching or exceeding human inspector performance

- Progress monitoring: Time-lapse analyses automatically track construction progress against schedules, generating S-curves without manual entry

- Safety compliance: Real-time video analyses detect PPE violations (missing hard hats, improper harness use) and trigger alerts

- Photogrammetric reconstruction: Structure-from-Motion (SfM) techniques generate dense 3D point clouds from UAV imagery, enabling volumetric calculations and deformation analyses

Technical Reality: CV systems struggle with varying lighting conditions, occlusion, and surface contamination. A model that performs well on clean concrete may fail when surfaces are wet or shaded. Robust deployment requires extensive validation under field conditions.

Virtual Reality (VR)

VR enables immersive interaction with digital models:

- Design review: Stakeholders walk through BIM models at 1:1 scale, identifying spatial conflicts invisible in 2D drawings

- Buildability: Project sequences are simulated virtually, revealing logistical constraints before equipment mobilization

- Training: Operators practice complex procedures (crane operation, confined space work) in risk-free virtual environments

VR hardware remains expensive and cumbersome for daily use. Most firms deploy VR selectively for high-value activities rather than routine workflows. The technology augments but doesn’t replace traditional visualization methods.

Key Applications in Practice

Modern analytical tools now span every phase, from material behavior to asset management, and connect physical observation with computational modeling. As a result, decisions rest on continuous evidence, including real-time data, rather than on guesswork and assumptions.

The following sections illustrate how these methods enhance forecasting, automate verification, and facilitate safer, more resilient designs and operations.

Predictive Modeling Foundation

Predictive modeling encompasses any computational method that forecasts future system behavior. In civil engineering, this ranges from physics-based FEA simulation to pure ML models to hybrid approaches combining both FEA and ML.

Quality Control Automation: CV-based inspection systems scan every weld on steel structures, flagging defects with superhuman consistency. Unlike human inspectors, software doesn’t suffer fatigue-related increases in error rate over the course of a shift.

Communication Enhancement: Natural language processing (NLP) tools extract key information from daily reports, automatically updating dashboards. Large language models assist in drafting technical specifications, though human review remains mandatory.

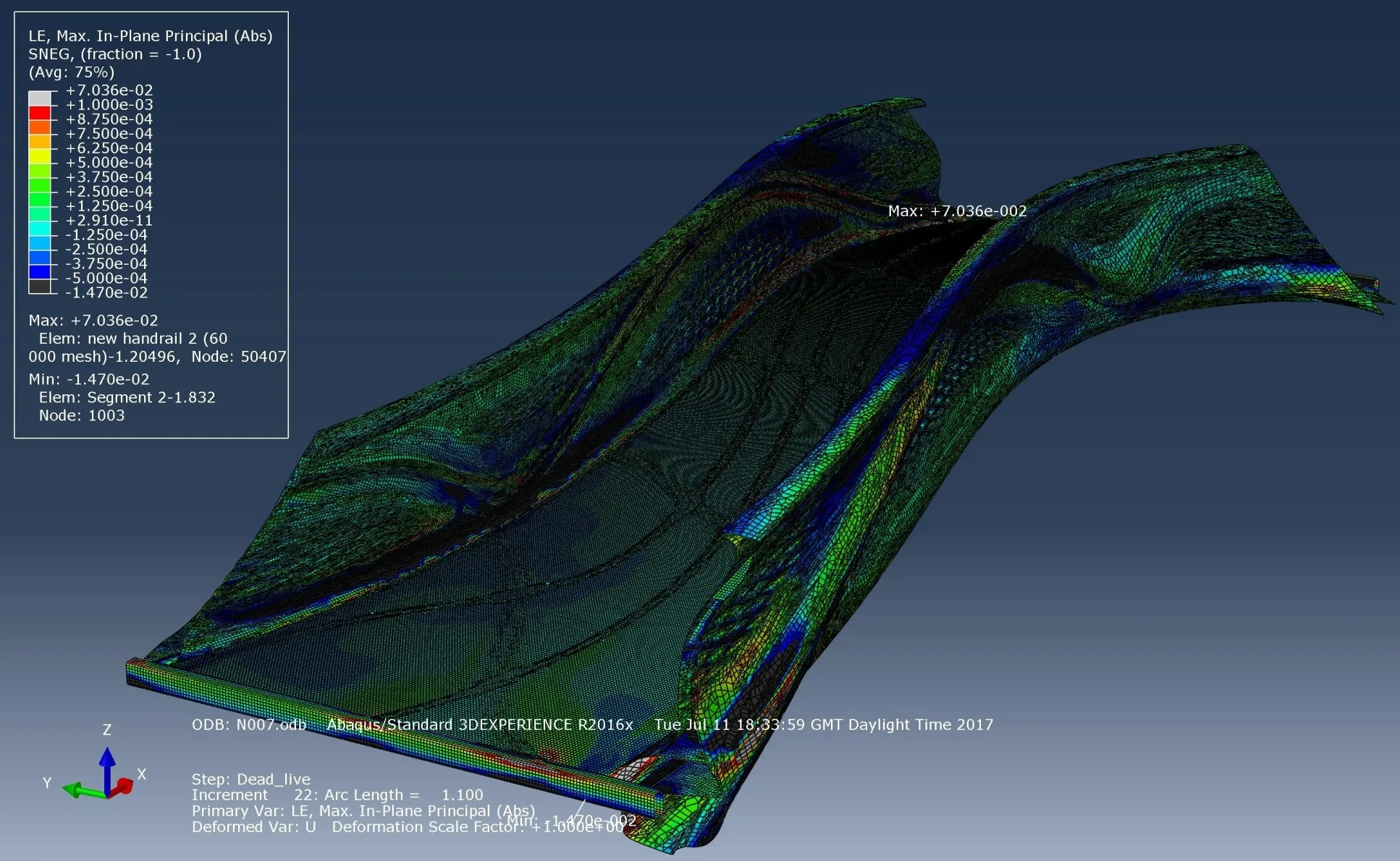

FEA: Physics-Based Prediction

FEA works by dividing a continuous structure into numerous small, interconnected pieces called elements. Within each element, the laws of mechanics relate stress, strain, and deformation. Solving these relationships analytically for an entire real-world structure is impractical, so computers assemble the behavior of all elements into a large system of equations and solve it numerically, predicting how the structure will respond under various loads. The method was developed by researchers from the 1950s and 1960s onward and became widely adopted by designers during the 1980s and 1990s. It is grounded in physics and yields results that can be directly interpreted using the principles of construction materials and mechanics.
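The assemble-and-solve procedure described above can be illustrated with the simplest possible case: a prismatic bar fixed at one end and loaded axially at the other, discretized into two-node linear elements. This is a teaching sketch with hypothetical dimensions and load, not a general FE code. Because the exact solution is known (tip displacement PL/EA, uniform stress P/A), it also shows how FE results are checked against mechanics.

```python
import numpy as np

def bar_fea(length, E, A, P, n_elements):
    """Axial bar fixed at x = 0 with a point load P at the free end.
    Returns nodal displacements (m) and element stresses (Pa)."""
    n_nodes = n_elements + 1
    le = length / n_elements
    # Element stiffness matrix of a two-node axial (truss) element
    k_e = (E * A / le) * np.array([[1.0, -1.0], [-1.0, 1.0]])
    # Assemble the global stiffness matrix element by element
    K = np.zeros((n_nodes, n_nodes))
    for e in range(n_elements):
        K[e:e + 2, e:e + 2] += k_e
    f = np.zeros(n_nodes)
    f[-1] = P
    # Apply the support (u0 = 0) by removing the first row/column, then solve
    u = np.zeros(n_nodes)
    u[1:] = np.linalg.solve(K[1:, 1:], f[1:])
    stress = E * np.diff(u) / le   # sigma = E * strain in each element
    return u, stress

# Hypothetical steel bar: L = 3 m, E = 210 GPa, A = 20 cm^2, P = 100 kN
L, E, A, P = 3.0, 210e9, 0.002, 100e3
u, stress = bar_fea(L, E, A, P, n_elements=6)
print(f"tip displacement: {u[-1] * 1000:.3f} mm (exact {P * L / (E * A) * 1000:.3f} mm)")
print(f"element stress: {stress[0] / 1e6:.1f} MPa (exact {P / A / 1e6:.1f} MPa)")
```

For this load case linear elements reproduce the exact answer; for bending, plasticity, or contact, results converge only as the mesh is refined, which is why mesh studies and validation matter.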

Core Capabilities:

- Stress distribution under static and dynamic loading

- Modal analyses for natural frequencies and mode shapes

- Buckling stability

- Thermal and hygroscopic effects

- Material nonlinearity (plasticity, creep, cracking)

- Contact and friction between components

Material Behavior Modeling:

- FEA simulates complex constitutive behavior, for example cracking and crushing of cementitious materials, steel yielding and strain hardening, soil consolidation, and liquefaction. Accurate material models require extensive laboratory characterization and testing. Models validated against experimental results become reliable predictors for full-scale structures.

Seismic Performance:

- FEA enables nonlinear time-history analyses in which structures are subjected to recorded ground motion accelerograms. Engineers identify which components enter plastic deformation, where collapse mechanisms form, and how structural modifications improve seismic resilience. Finite difference (FD) methods can also be applied.
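A time-history analysis steps the equation of motion through the accelerogram. The sketch below uses the Newmark average-acceleration method (γ = 1/2, β = 1/4) on a single-degree-of-freedom oscillator, m ü + c u̇ + k u = −m üg. It is deliberately linear elastic: a nonlinear analysis, as described above, would replace k·u with a hysteretic restoring force and iterate within each step. The 5% damping default and the sine-pulse input are assumptions standing in for a recorded ground motion.

```python
import numpy as np

def newmark_sdof(ag, dt, period, zeta=0.05, gamma=0.5, beta=0.25):
    """Linear elastic SDOF (unit mass) response to ground acceleration ag (m/s^2).
    Returns the relative displacement history u (m)."""
    m = 1.0
    wn = 2.0 * np.pi / period
    k = m * wn**2
    c = 2.0 * zeta * m * wn
    p = -m * np.asarray(ag, dtype=float)          # effective earthquake force
    n = p.size
    u, v, a = np.zeros(n), np.zeros(n), np.zeros(n)
    a[0] = (p[0] - c * v[0] - k * u[0]) / m
    k_hat = k + gamma / (beta * dt) * c + m / (beta * dt**2)
    for i in range(n - 1):
        p_hat = (p[i + 1]
                 + m * (u[i] / (beta * dt**2) + v[i] / (beta * dt) + (1 / (2 * beta) - 1) * a[i])
                 + c * (gamma * u[i] / (beta * dt) + (gamma / beta - 1) * v[i]
                        + dt * (gamma / (2 * beta) - 1) * a[i]))
        u[i + 1] = p_hat / k_hat
        a[i + 1] = ((u[i + 1] - u[i]) / (beta * dt**2) - v[i] / (beta * dt)
                    - (1 / (2 * beta) - 1) * a[i])
        v[i + 1] = v[i] + dt * ((1 - gamma) * a[i] + gamma * a[i + 1])
    return u

# Hypothetical input: 2 s sine pulse (2 m/s^2 amplitude, 0.5 s period), then free vibration
dt = 0.01
t = np.arange(1000) * dt
ag = np.where(t < 2.0, 2.0 * np.sin(2 * np.pi * t / 0.5), 0.0)
u = newmark_sdof(ag, dt, period=0.5)
print(f"peak relative displacement: {np.max(np.abs(u)) * 1000:.1f} mm")
```

Repeating the analysis over a range of periods gives a response spectrum; a multi-degree-of-freedom FE model uses the same time-stepping scheme with matrices in place of m, c, and k.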

Geotechnical Applications

Geotechnical engineering is adopting machine learning and IoT-based sensing to analyze soil behavior, monitor ground movement, and anticipate instability. ML models trained on site investigation data, such as borehole and penetration test results, predict subsurface properties and deformation trends, while sensor networks provide continuous field feedback. The combination enables earlier detection of anomalies and more reliable, data-driven risk prevention than traditional periodic surveys.

- ML-Enhanced Site Characterization: Neural networks trained on CPT (Cone Penetration Test) and borehole logs predict soil stratification across sites with sparse direct measurements. When trained on regional geology, these models can interpolate between boreholes more accurately than traditional kriging.

- Real-Time Monitoring: IoT-connected inclinometers and piezometers stream readings to cloud platforms where anomaly detection flags unusual movements or pore pressure changes. For slopes and excavations, this early warning system enables intervention before instability progresses to a point of failure.

- Risk Assessment: ML models trained on historical landslide inventories identify high-risk terrain by correlating slope angle, soil type, precipitation, and land use patterns. These predictive maps inform land-use planning and prioritize investments in stabilization.
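The anomaly-detection step in the Real-Time Monitoring item can be as simple as comparing each new reading with the statistics of a trailing window. The sketch below flags piezometer readings whose rolling z-score exceeds a threshold; the 24-sample window, the threshold of 4, and the synthetic hourly pore-pressure series are assumptions for illustration.

```python
import numpy as np

def rolling_zscore_flags(readings, window=24, z_threshold=4.0):
    """Flag readings that deviate strongly from the trailing-window mean."""
    x = np.asarray(readings, dtype=float)
    flags = np.zeros(x.size, dtype=bool)
    for i in range(window, x.size):
        ref = x[i - window:i]
        std = max(ref.std(ddof=1), 1e-9)   # guard against a perfectly flat window
        flags[i] = abs(x[i] - ref.mean()) / std > z_threshold
    return flags

# Synthetic hourly pore pressure (kPa): daily cycle, then a sudden 8 kPa rise at hour 100
hours = np.arange(168)
pore_pressure = 50.0 + 0.5 * np.sin(2 * np.pi * hours / 24)
pore_pressure[100:] += 8.0

flags = rolling_zscore_flags(pore_pressure)
print("first alert at hour:", int(np.argmax(flags)))
```

A persistent shift is absorbed into the baseline after one window, so a real system would latch alerts and combine this relative test with absolute alarm levels set by the geotechnical engineer.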

AI-Augmented Planning

Schedule Optimization: Artificial Intelligence planning methods analyze historical project data to generate realistic schedules accounting for weather patterns, equipment availability, and crew productivity. These models identify the critical path and suggest task sequencing that minimizes project duration.
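Once durations have been estimated (the part where learning from historical projects helps), identifying the critical path is a deterministic calculation: the classical Critical Path Method (CPM). The sketch below runs a forward pass for earliest dates and a backward pass for latest dates, then reports total float; activities with zero float form the critical path. The activity names, durations, and dependencies are hypothetical.

```python
from collections import deque

def critical_path(durations, predecessors):
    """Classical CPM: returns project duration, critical activities, total float."""
    successors = {a: [] for a in durations}
    indegree = {a: len(predecessors.get(a, [])) for a in durations}
    for act, preds in predecessors.items():
        for p in preds:
            successors[p].append(act)
    # Topological order (Kahn's algorithm) so every predecessor is processed first
    order, queue = [], deque(a for a in durations if indegree[a] == 0)
    while queue:
        a = queue.popleft()
        order.append(a)
        for s in successors[a]:
            indegree[s] -= 1
            if indegree[s] == 0:
                queue.append(s)
    if len(order) != len(durations):
        raise ValueError("dependency cycle detected")
    # Forward pass: earliest start / earliest finish
    es, ef = {}, {}
    for a in order:
        es[a] = max((ef[p] for p in predecessors.get(a, [])), default=0)
        ef[a] = es[a] + durations[a]
    project_duration = max(ef.values())
    # Backward pass: latest finish / latest start
    ls, lf = {}, {}
    for a in reversed(order):
        lf[a] = min((ls[s] for s in successors[a]), default=project_duration)
        ls[a] = lf[a] - durations[a]
    total_float = {a: ls[a] - es[a] for a in order}
    critical = [a for a in order if total_float[a] == 0]
    return project_duration, critical, total_float

# Hypothetical activities (durations in working days)
durations = {"excavate": 5, "foundations": 10, "utilities": 4, "frame": 20, "backfill": 3}
predecessors = {"foundations": ["excavate"], "utilities": ["excavate"],
                "frame": ["foundations"], "backfill": ["foundations", "utilities"]}
project, critical, total_float = critical_path(durations, predecessors)
print(project, critical, total_float)
```

An AI-assisted planner would feed predicted (possibly probabilistic) durations into this calculation and rerun it whenever the forecasts change.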

Key capabilities:

- Historical learning: Models trained on past projects predict activity durations more accurately than purely heuristic approaches

- Weather coupling: Platforms like Procore incorporate weather forecasts to automatically adjust schedules for temperature-sensitive activities (e.g., asphalt paving)

- Resource leveling: AI tools optimize equipment and labor allocation to avoid bottlenecks while minimizing idle time

- Dynamic rescheduling: When delays occur, Artificial Intelligence rapidly generates updated schedules and identifies mitigation strategies

Collaboration platforms: Civil engineering project management platforms deploy intelligent task routing and automated status updates. However, the value proposition centers on reducing administrative overhead rather than fundamentally changing project delivery methods.

Performance Monitoring: Continuous comparison of planned versus actual progress enables the early identification of trends that may lead to delays, thereby improving the allocation of resources. Predictive analytics indicate whether corrective action is necessary or if the schedule float is sufficient to absorb the variance.
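The float check in Performance Monitoring can be illustrated with earned value quantities. This simplified sketch computes the schedule performance index SPI = EV / PV, naively assumes the remaining work continues at the same SPI, and compares the forecast slip with the available total float. The figures are hypothetical, and real predictive tools would use richer forecasts than a constant SPI.

```python
def schedule_check(planned_value, earned_value, remaining_days, total_float_days):
    """Return SPI, forecast slip (days), and whether the float absorbs the slip."""
    spi = earned_value / planned_value
    forecast_remaining = remaining_days / spi   # assumes the current pace persists
    slip = forecast_remaining - remaining_days
    return spi, slip, slip <= total_float_days

# Hypothetical status: 400k planned to date, 360k earned, 45 working days left, 3 days float
spi, slip, absorbed = schedule_check(400_000, 360_000, 45, 3)
print(f"SPI = {spi:.2f}, forecast slip = {slip:.1f} days, float sufficient: {absorbed}")
```

Here the forecast slip of about five days exceeds the three days of float, so the check points to corrective action rather than waiting.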

Risk Identification and Mitigation

AI-based risk management shifts maintenance from periodic assessment to continuous monitoring:

Historical Data Mining: Machine Learning algorithms analyze past databases to identify factors correlated with cost overruns, schedule delays, and safety incidents. Patterns invisible to human review emerge from statistical analyses of thousands of cases.

Predictive Maintenance: Deterioration models forecast when structural components will require intervention:

- Condition assessment: CV analyses of inspection images track crack growth, corrosion extent, and concrete delamination over time

- Remaining life estimation: Physics-informed models combine structural analyses with observed damage to predict the time to critical condition

- Maintenance optimization: Models schedule interventions to minimize life-cycle costs while maintaining target reliability levels
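As an example of the physics-informed remaining-life estimation in the list above, a fatigue crack measured during inspection can be propagated with the Paris law, da/dN = C (ΔK)^m with ΔK = Y Δσ √(πa), integrated from the observed crack length to a critical length. All material constants, the geometry factor Y, the stress range, and the annual cycle count below are hypothetical placeholders; in practice they come from standards, testing, and monitoring, and Y usually varies with crack size.

```python
import numpy as np

def cycles_to_critical(a0, a_crit, delta_sigma, C, m, Y=1.12, n_points=10_000):
    """Integrate dN = da / (C * dK**m) from a0 to a_crit with the trapezoidal rule.
    Units: a in m, delta_sigma in MPa, C consistent with dK in MPa*sqrt(m)."""
    a = np.geomspace(a0, a_crit, n_points)      # finer steps where growth is slow
    dK = Y * delta_sigma * np.sqrt(np.pi * a)   # stress intensity factor range
    dN_da = 1.0 / (C * dK**m)
    return float(np.sum(0.5 * (dN_da[1:] + dN_da[:-1]) * np.diff(a)))

# Hypothetical inputs: 1 mm observed crack, 20 mm critical size, 80 MPa stress range
N = cycles_to_critical(a0=0.001, a_crit=0.020, delta_sigma=80.0, C=1e-11, m=3.0)
cycles_per_year = 500_000                       # hypothetical traffic-induced cycles
print(f"{N:.3g} cycles, about {N / cycles_per_year:.1f} years to critical size")
```

With constant Y the integral has a closed form, which makes a convenient check; the numerical version is shown because it extends directly to a crack-size-dependent Y(a) or to crack lengths updated from each new inspection.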

Practical Example: In high-rise buildings, ML models predict maintenance needs for HVAC systems and elevators by analyzing field measurements (temperature, vibration, current draw). Replacing components before they fail reduces downtime and emergency repair costs.

Proactive Safety: For bridges, ML-based risk models recommend enhanced protective measures (e.g., cathodic protection against reinforcement corrosion) when they indicate elevated failure probability. ML thus shifts maintenance from reactive repair to proactive risk reduction.

Large-Scale Infrastructures: For linear infrastructure such as railways and highways, Artificial Intelligence integrates geological records, geotechnical surveys, and progress monitoring to flag risks, including ground settlement, tunnel instability, or slope failure, before they impact operations.

Sustainability: Predictive modeling assesses the impacts of material selection on embodied emissions, energy consumption, and environmental footprint. Prediction enables informed trade-offs between performance, cost, and sustainability objectives.

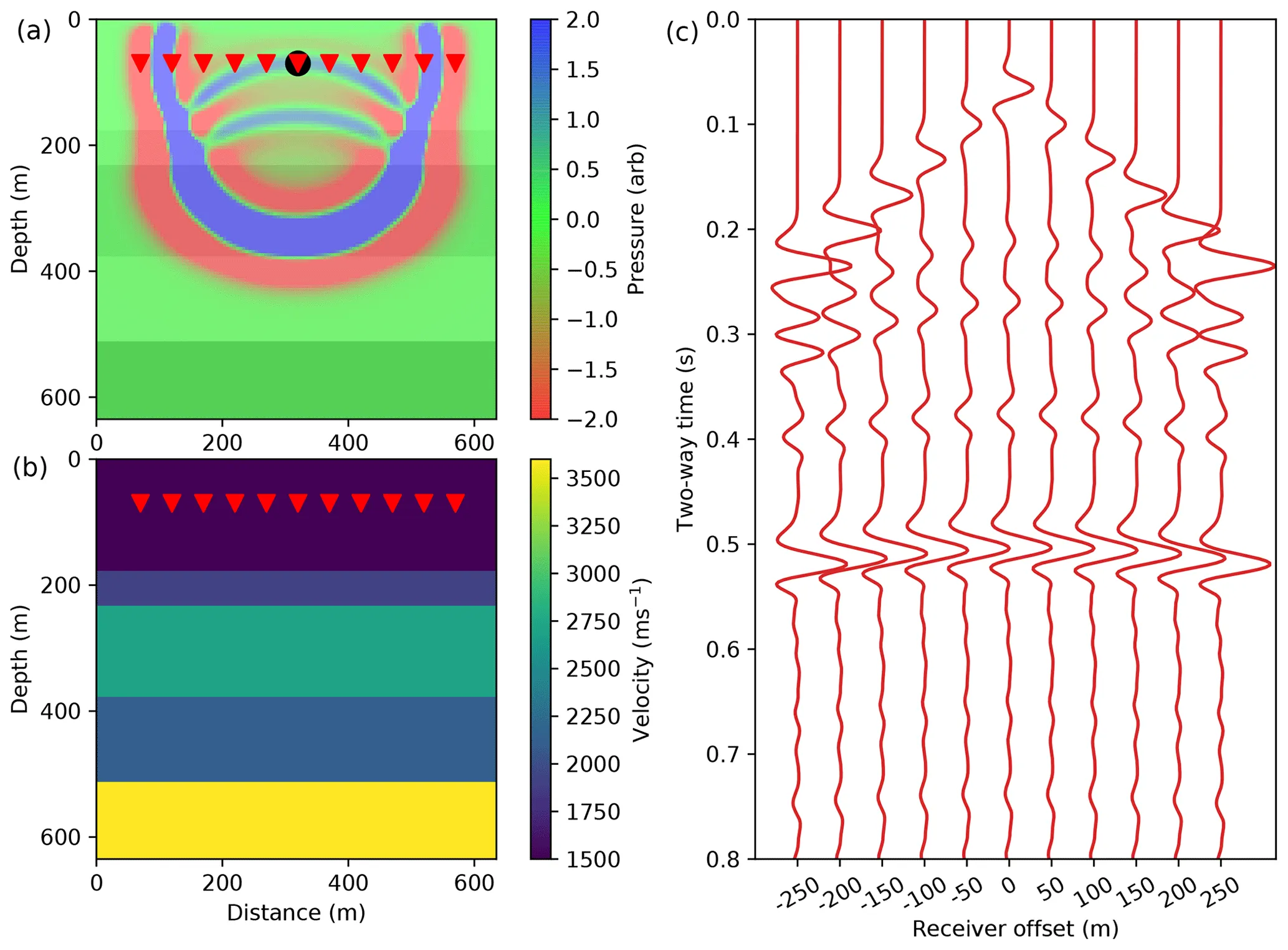

Computational Fluid Dynamics Applications

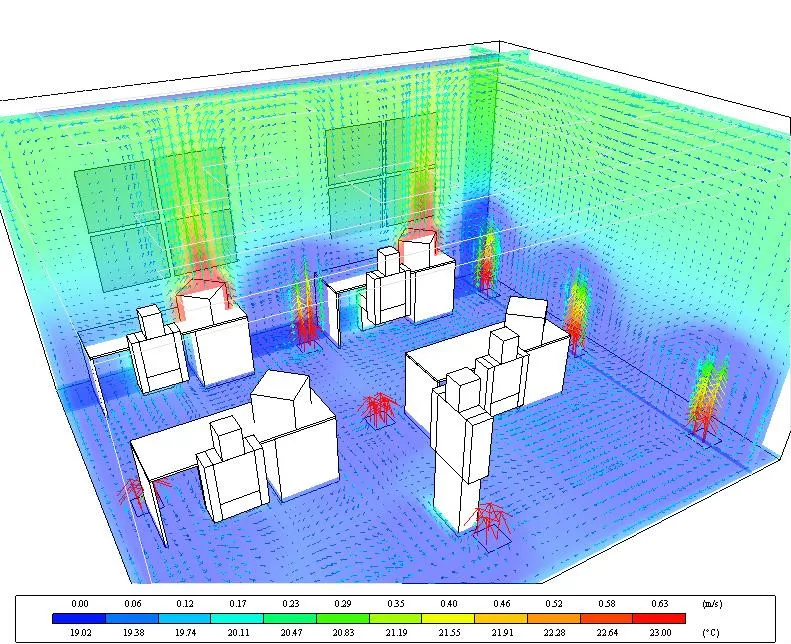

Computational Fluid Dynamics (CFD) solves the Navier-Stokes equations governing fluid flow using numerical methods. In civil engineering, CFD provides insight into airflow, water movement, and pollutant transport that would be prohibitively expensive to measure experimentally at full scale.

HVAC and Building Performance

CFD simulations optimize building ventilation strategies by predicting airflow patterns, temperature distributions, and contaminant dispersion. Engineers evaluate design alternatives virtually, selecting configurations that maximize occupant comfort while minimizing energy consumption.

Atrium Analyses: Large atria create complex thermal stratification and natural ventilation flows. CFD identifies optimal inlet/outlet locations and sizing to achieve desired air change rates without excessive mechanical ventilation.
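For a first-order check of natural-ventilation results, a common simplified stack-effect relation estimates buoyancy-driven flow through an effective opening area A* as Q = Cd · A* · √(2 g H ΔT / T), with H the vertical distance between inlet and outlet and T an absolute reference temperature (formulations differ on whether indoor or mean temperature is used; the mean is used here). The air change rate is then ACH = 3600 Q / V. The discharge coefficient of 0.6 and the atrium dimensions are assumptions; this kind of hand calculation is a sanity check on CFD, not a substitute for it.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2

def stack_flow(area_m2, height_m, t_in_c, t_out_c, cd=0.6):
    """Buoyancy-driven flow (m^3/s) through an effective opening area."""
    delta_t = t_in_c - t_out_c
    if delta_t <= 0:
        return 0.0                       # no upward stack flow in this simple model
    t_ref_k = 0.5 * (t_in_c + t_out_c) + 273.15
    return cd * area_m2 * math.sqrt(2 * G * height_m * delta_t / t_ref_k)

def air_changes_per_hour(flow_m3s, volume_m3):
    return 3600.0 * flow_m3s / volume_m3

def area_for_target_ach(target_ach, volume_m3, height_m, t_in_c, t_out_c, cd=0.6):
    """Effective opening area needed to reach a target air change rate."""
    flow_per_m2 = stack_flow(1.0, height_m, t_in_c, t_out_c, cd)
    if flow_per_m2 == 0.0:
        raise ValueError("no stack driving force: indoor air is not warmer than outdoor air")
    return target_ach * volume_m3 / 3600.0 / flow_per_m2

# Hypothetical atrium: 12,000 m^3, 15 m between low inlets and high outlets, 24 °C in, 14 °C out
area = area_for_target_ach(4.0, 12_000, 15.0, 24.0, 14.0)
print(f"effective opening area for 4 ACH: {area:.1f} m^2")
```

CFD then refines what this formula cannot capture: where the openings go, how the air stratifies, and whether occupied zones actually receive the fresh air.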


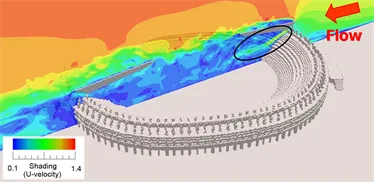

Hydraulic Structures

CFD enables detailed investigation of water flow through dams, spillways, weirs, and channels.

Civil engineers simulate with CFD:

- Discharge capacity: Verification that structures safely pass design floods

- Energy dissipation: Optimization of stilling basins to prevent downstream erosion

- Sediment transport: Prediction of deposition patterns that may reduce reservoir capacity

- Scour assessment: Identification of pier locations in bridges where flow acceleration may cause foundation undermining
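CFD results for hydraulic structures are routinely cross-checked against classical hand calculations. The sketch below uses two textbook relations: the discharge over a sharp-crested rectangular weir, Q = Cd · (2/3) · √(2g) · L · H^(3/2), and the Bélanger equation for the sequent depth of a hydraulic jump in a rectangular stilling basin, y2 = (y1/2)(√(1 + 8 Fr1²) − 1), with head loss (y2 − y1)³ / (4 y1 y2). The discharge coefficient and the dimensions are assumptions; real spillway crests (e.g., ogee profiles) use their own calibrated coefficients.

```python
import math

G = 9.81  # m/s^2

def weir_discharge(crest_length_m, head_m, cd=0.62):
    """Sharp-crested rectangular weir discharge, m^3/s (cd is an assumed value)."""
    return cd * (2.0 / 3.0) * math.sqrt(2 * G) * crest_length_m * head_m ** 1.5

def hydraulic_jump(q_per_width, y1):
    """Sequent depth and head loss of a jump in a rectangular channel."""
    fr1 = (q_per_width / y1) / math.sqrt(G * y1)       # upstream Froude number
    y2 = 0.5 * y1 * (math.sqrt(1 + 8 * fr1 ** 2) - 1)  # Belanger equation
    head_loss = (y2 - y1) ** 3 / (4 * y1 * y2)         # energy dissipated in the jump
    return fr1, y2, head_loss

# Hypothetical case: 20 m crest, 1.5 m head, 0.3 m supercritical depth at the basin entry
Q = weir_discharge(20.0, 1.5)
fr1, y2, loss = hydraulic_jump(Q / 20.0, 0.3)
print(f"Q = {Q:.1f} m^3/s, Fr1 = {fr1:.2f}, y2 = {y2:.2f} m, head loss = {loss:.2f} m")
```

If a CFD model of the stilling basin predicts a tailwater depth or energy loss far from these values, the turbulence model, mesh, or boundary conditions deserve a closer look.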

Practical Implementation: CFD models calibrated against physical hydraulic models or prototype measurements provide reliable predictions for design modifications. However, turbulence modeling remains challenging: different turbulence closure models can yield significantly different predictions for complex geometries.

Flood Modeling

CFD-based flood inundation models simulate overland flow during extreme rainfall events. These models:

- Identify areas vulnerable to flooding under different storm scenarios

- Evaluate the effectiveness of proposed flood protection measures (levees, floodwalls, detention basins)

- Facilitate emergency response planning by predicting flood arrival times and inundation depths

Deep Learning Acceleration: High-fidelity CFD is computationally expensive. A single simulation may require hours or days. Neural network surrogate models trained on CFD results provide real-time predictions, enabling rapid scenario examination during flood events. Neural Concept’s platform demonstrates this approach, utilizing graph neural networks to predict flow fields from CAD models.
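The surrogate idea can be sketched without a deep learning framework: sample a limited number of expensive runs, fit a cheap model to them, and query the cheap model instead. To keep the example self-contained, a least-squares polynomial replaces the neural network, and a hypothetical closed-form function stands in for the CFD solver (its “peak depth” output and the input ranges are invented for illustration). The workflow of sampling, fitting, and validating on held-out runs is the same with a neural network.

```python
import numpy as np

def expensive_simulation(rain_mm_h, duration_h):
    """Stand-in for a costly flood simulation (hypothetical response, metres)."""
    return 0.002 * rain_mm_h * duration_h ** 0.8

def features(X):
    """Polynomial features of (rainfall intensity, storm duration)."""
    r, d = X[:, 0], X[:, 1]
    return np.column_stack([np.ones_like(r), r, d, r * d, r * d**2, d**2])

rng = np.random.default_rng(42)

def sample_runs(n):
    X = np.column_stack([rng.uniform(10, 100, n), rng.uniform(1, 24, n)])
    y = np.array([expensive_simulation(r, d) for r, d in X])
    return X, y

X_train, y_train = sample_runs(60)        # the "expensive" training runs
coef, *_ = np.linalg.lstsq(features(X_train), y_train, rcond=None)

def surrogate(X):
    return features(X) @ coef             # near-instant prediction

X_test, y_test = sample_runs(200)         # held-out runs for validation
residual = y_test - surrogate(X_test)
r2 = 1 - np.sum(residual**2) / np.sum((y_test - y_test.mean())**2)
print(f"held-out R^2 = {r2:.4f}")
```

A surrogate is only trustworthy inside the range of its training runs; a storm outside that envelope calls for a fresh high-fidelity simulation.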

Digital Elevation Model Processing: The flexibility of deep learning architectures makes them well-suited for processing irregular DEMs. Traditional interpolation algorithms can struggle with complex terrain, whereas neural networks learn appropriate representations directly from training data.

Preservation of Cultural Artefacts

The article “CFD modeling for the conservation of the Gilded Vault Hall in the Domus Aurea” (reference https://doi.org/10.1016/j.culher.2003.08.001), to which the author of the present article also contributed, simulated the microclimate inside the Hall with the Gilded Vault in the Domus Aurea, Rome. The researchers used a CFD finite volume method to solve the governing equations and predict the detailed distribution of temperature, humidity, and air movement throughout the Hall. The simulation results informed practical recommendations for conserving both the Hall and the Domus Aurea monument as a whole.

How is AI Helping Project Management?

For project managers overseeing construction projects, AI’s capability to process complex data represents a game-changer in how tasks are executed. It analyzes patterns across materials performance, project timelines, and construction methods, and it streamlines workflows that previously consumed weeks of manual operations.

This capability extends from foundation design optimization (where software evaluates soil-structure interaction scenarios in hours rather than days) to proactive maintenance scheduling that identifies material fatigue before it compromises structural integrity.

The results are measurable: civil engineering firms save coordination time, reduce overruns, and raise the reliability of delivered assets.

Smart Cities Development

Smart city development exemplifies this transformation on a large scale. Civil engineering projects increasingly integrate AI from conception through operation, processing complex data streams from thousands of sensors that monitor everything from structural health to environmental conditions. Where traditional approaches required engineers to address problems reactively as they emerged, AI-enabled systems allow them to anticipate issues before they escalate.

Energy Management

Smart grid platforms analyze consumption patterns, generation capacity, and price signals to optimize the distribution of electricity. Barcelona’s CityOS integrates energy, water, and waste management data, enabling operators to identify inefficiencies and implement corrective measures.

Impact: Real-time optimization reduces peak demand, allows for more renewable energy integration, and lowers costs for consumers. However, benefits heavily rely on sensor deployment density and data quality.

Traffic Management

AI traffic controls adjust signal timing based on real-time vehicle detection and predicted congestion patterns. Singapore’s Smart Nation initiative demonstrates citywide deployment where adaptive algorithms reduce travel times and emissions compared to fixed-timing signals.

Technical Approach: Traffic prediction models use historical patterns, special event schedules, and weather forecasts to anticipate demand. Control software then optimizes signal timing, ramp metering, and variable speed limits to maximize network throughput.
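A classical building block behind signal-timing optimization is Webster’s formula for the delay-minimizing cycle length of a fixed-time signal, C0 = (1.5 L + 5) / (1 − Y), where L is the total lost time per cycle (s) and Y is the sum of the critical flow ratios q/s; effective green time is then split in proportion to each phase’s ratio. An adaptive controller could re-evaluate such a calculation with predicted rather than historical flows. The flows, saturation flows, and lost time below are hypothetical, and real adaptive systems use considerably more elaborate optimization.

```python
def webster_timing(critical_flows, saturation_flows, lost_time_s):
    """Webster optimum cycle length (s) and effective green splits (s)."""
    ratios = [q / s for q, s in zip(critical_flows, saturation_flows)]   # y_i = q_i / s_i
    Y = sum(ratios)
    if Y >= 1.0:
        raise ValueError("oversaturated: critical flow ratios sum to 1 or more")
    cycle = (1.5 * lost_time_s + 5.0) / (1.0 - Y)
    effective_green = cycle - lost_time_s
    greens = [effective_green * y / Y for y in ratios]   # split in proportion to y_i
    return cycle, greens

# Hypothetical two-phase junction: critical flows and saturation flows in veh/h, 10 s lost time
cycle, greens = webster_timing([600, 450], [1800, 1800], lost_time_s=10.0)
print(f"cycle = {cycle:.1f} s, effective greens = {[round(g, 1) for g in greens]} s")
```

As predicted demand approaches saturation (Y near 1), the optimal cycle grows rapidly, which is one reason forecasting congestion ahead of time is valuable.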

Predictive Maintenance

Municipal asset management deploys AI to prioritize maintenance across road networks, bridges, and utilities. Models estimate condition degradation rates, aiding in resource allocation and enabling transition from time-based to condition-based maintenance strategies.

Economic Impact: Proactive intervention before failures occur reduces emergency repair costs and service disruptions. However, implementation requires comprehensive asset inventories and a historical condition dataset (prerequisites that many municipalities lack!).
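One widely used way to estimate condition degradation rates from historical inspection data is a Markov chain over discrete condition states: transition probabilities are estimated by counting how often assets moved between states from one inspection to the next, and the resulting matrix projects the future condition distribution. The four-state scale, the observed transitions, and the one-year inspection interval below are hypothetical; the sketch also assumes that states never observed as a starting point stay where they are.

```python
import numpy as np

def estimate_transition_matrix(pairs, n_states):
    """Estimate annual transition probabilities from (state_now, state_next_year) pairs."""
    counts = np.zeros((n_states, n_states))
    for s0, s1 in pairs:
        counts[s0, s1] += 1
    rows = counts.sum(axis=1, keepdims=True)
    # States never observed as a starting point are assumed to stay put
    return np.where(rows > 0, counts / np.where(rows == 0, 1, rows), np.eye(n_states))

def forecast(initial_distribution, P, years):
    """Probability of being in each state for years 0..years."""
    dist = np.asarray(initial_distribution, dtype=float)
    history = [dist]
    for _ in range(years):
        dist = dist @ P
        history.append(dist)
    return np.array(history)

# Hypothetical inspection history: state 0 = good ... state 3 = poor
observed = ([(0, 0)] * 90 + [(0, 1)] * 10 + [(1, 1)] * 85 + [(1, 2)] * 15
            + [(2, 2)] * 80 + [(2, 3)] * 20)
P = estimate_transition_matrix(observed, n_states=4)
history = forecast([1, 0, 0, 0], P, years=30)
reached = history[:, 3] >= 0.5
first_year = int(np.argmax(reached)) if reached.any() else None
print("P =\n", P.round(2))
print("year when a new asset is more likely than not to be in poor condition:", first_year)
```

The quality of such a model depends directly on the historical condition dataset, which is exactly the prerequisite many municipalities lack.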

Data-Driven Urban Planning

AI platforms analyze demographic trends, economic indicators, climatic and ecological factors, and transportation patterns to inform urban development decisions. Songdo, South Korea, utilizes simulation and AI tools to evaluate the impacts of proposed developments on traffic, air quality, and energy demand before approval.

Sustainability Alignment: Ensuring that new developments align with climate goals and enhance livability requires explicitly incorporating these objectives into planning algorithms. Without such constraints, optimization may deliver economic efficiency but produce socially or environmentally problematic outcomes.

Technology Alignment

Infrastructure combines digital modeling, sensing, and computation to support evidence-based actions. Success depends on carefully curated, domain-specific training records that reflect standards, historical outcomes, simulations, and known failures.

BIM and GIS

Building Information Modeling (BIM) and Geographic Information Systems (GIS) provide the digital backbone for AI applications. BIM contains detailed geometric and attribute information for individual structures, while GIS situates these elements within broader spatial networks.

AI Tool Coupling: ML models embedded within BIM platforms analyze design options, perform automated code compliance checks, and generate quantities. When connected to GIS, these models incorporate site-specific constraints, such as soil conditions, utility conflicts, and environmental restrictions, into the design optimization process.

IoT-Enabled Real-Time Monitoring

Internet of Things (IoT) sensor networks continuously measure structural response, ambient conditions, and operational parameters. Edge computing devices perform local processing, transmitting only anomalies or aggregated statistics to central platforms, reducing bandwidth requirements and enabling low-latency response.

Architecture: Cloud platforms provide scalable storage and computational power for training ML models on archival records. Trained models deploy to edge devices for real-time inference. This distributed architecture strikes a balance between centralized learning and decentralized execution.
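The edge-side filtering described above (transmitting only anomalies or aggregated statistics) can be sketched as a small summarization step run on each window of raw readings. The alert limit, the strain values, and the payload fields are hypothetical; a real gateway would add timestamps, sensor IDs, buffering for connectivity loss, and a transport such as MQTT.

```python
import json
import statistics

def summarize_window(samples, alert_limit):
    """Reduce a window of raw readings to a compact payload for the cloud."""
    anomalies = [{"index": i, "value": x} for i, x in enumerate(samples) if abs(x) > alert_limit]
    return {
        "count": len(samples),
        "mean": round(statistics.fmean(samples), 2),
        "min": min(samples),
        "max": max(samples),
        "anomalies": anomalies,   # raw values are forwarded only for exceedances
    }

# Hypothetical strain window (microstrain) from one gauge, with one spike; alert above 300
window = [118.0 + (i % 7) for i in range(1000)]
window[500] = 350.2
payload = summarize_window(window, alert_limit=300.0)
print(f"{len(json.dumps(window))} bytes raw vs {len(json.dumps(payload))} bytes summarized")
print(payload["anomalies"])
```

The raw window can still be kept locally for a while, so the cloud can request it if an anomaly needs closer inspection.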

Cloud and Edge Computing

Cloud and Edge Computing represent two complementary approaches for processing and managing data. Cloud systems offer vast centralized resources. Edge computing brings computation closer to where data is generated. The term “edge” refers to the boundary of the network where data is first produced.

- Cloud advantages: Unlimited storage, high-performance computing for model training, centralized data management, and easy collaboration across distributed teams.

- Edge advantages: Low latency for time-critical decisions, operation during connectivity loss, reduced transmission costs, and enhanced security by limiting cloud uploads.

- Hybrid approach: Most deployments combine both, with edge devices handling immediate response requirements while syncing to cloud platforms for long-term analysis and model updates.

Generative Design Workflows

Generative design explores design spaces defined by

- constraints (material properties, load requirements, geometric limits) and

- objectives (minimize weight, maximize stiffness, minimize cost).

The output is a set of Pareto-optimal solutions showing the trade-offs between competing objectives.
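Extracting the Pareto-optimal set from a batch of generated candidates is a simple dominance check: a candidate is discarded if another one is at least as good on every objective and strictly better on at least one. The candidate designs and their metrics below are hypothetical, and stiffness is negated so that all objectives are minimized.

```python
def dominates(a, b, objectives):
    """True if a is no worse than b on every objective and better on at least one."""
    fa = [f(a) for f in objectives]
    fb = [f(b) for f in objectives]
    return all(x <= y for x, y in zip(fa, fb)) and any(x < y for x, y in zip(fa, fb))

def pareto_front(candidates, objectives):
    return [c for c in candidates
            if not any(dominates(o, c, objectives) for o in candidates if o is not c)]

# Hypothetical generated candidates; all objectives are minimized
objectives = [lambda c: c["weight_t"], lambda c: c["cost_k"], lambda c: -c["stiffness"]]
candidates = [
    {"id": "A", "weight_t": 12.0, "cost_k": 300, "stiffness": 50},
    {"id": "B", "weight_t": 10.0, "cost_k": 340, "stiffness": 48},
    {"id": "C", "weight_t": 13.0, "cost_k": 310, "stiffness": 45},   # dominated by A
    {"id": "D", "weight_t": 9.0, "cost_k": 420, "stiffness": 40},
]
front = pareto_front(candidates, objectives)
print([c["id"] for c in front])  # -> ['A', 'B', 'D']
```

The filter only removes clearly inferior options; choosing among A, B, and D is the engineer’s trade-off, informed by constructability and code requirements that the objectives do not capture.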

- Engineers’ Role

- Software generates candidates; civil engineers evaluate manufacturability, constructability, and compliance with codes not captured in the optimization formulation. This human-software collaboration produces better outcomes than either could achieve independently.

Large Language Models in Documentation

LLMs like GPT-5 accelerate technical writing by generating draft specifications, reports, and correspondence. However, human review is mandatory: LLMs occasionally hallucinate technical details that sound plausible but are incorrect.

- Appropriate use cases: Formatting and structuring existing technical content, generating initial drafts for editing, and extracting key information from long documents.

- Inappropriate use cases: Making final technical judgments, performing calculations, or generating content without verification.

AI in Brainstorming and Innovation

AI excels at rapidly generating numerous ideas when prompted with constraints and objectives. For engineers working with large datasets, AI tools can identify non-obvious correlations and suggest hypotheses for investigation.

Many generated ideas will be impractical, infeasible, or previously attempted and abandoned. Critical evaluation remains essential.

Training Data for AI

To be helpful in the sector, training datasets must include:

- Methods and standards (codes, specifications, best practices)

- Project histories with outcomes (performance, cost, schedule)

- Physics-based simulation results validating predictions by models

- Failure case studies, so that models learn from mistakes

Current predictive systems trained on general internet sources lack this domain-specific knowledge. Developing industry-focused models requires collaboration to create comprehensive training datasets while respecting proprietary information.

Ethical Considerations

Models need representative records to avoid bias. Their results must be verified to prevent false outputs. Human oversight remains essential.

Security measures and privacy controls should be built in from the start. Quality checks ensure that updates don’t degrade performance.

Decisions must stay explainable, and energy use should be tracked to keep deployment accountable at every stage.

Bias and Dataset Representativeness

ML models trained on unrepresentative datasets produce biased predictions.

- Potential Risks: If the training datasets consist primarily of urban infrastructures, model performance will degrade on rural projects. If the dataset originates from a single geographic region, models may not generalize to other climatic or seismic zones.

- Mitigation: Ensure training datasets span the full range of conditions where models will be deployed. Document known limitations and validate performance on held-out test sets representative of deployment conditions.
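The validation step in the Mitigation item can be made routine by reporting metrics per subgroup rather than only in aggregate. The sketch below computes accuracy separately for each deployment context and flags groups that trail the best one by more than a tolerance; the labels, the urban/rural grouping, and the 5-point tolerance are hypothetical.

```python
from collections import defaultdict

def accuracy_by_group(y_true, y_pred, groups):
    """Accuracy computed separately for each subgroup."""
    hits, totals = defaultdict(int), defaultdict(int)
    for t, p, g in zip(y_true, y_pred, groups):
        totals[g] += 1
        hits[g] += int(t == p)
    return {g: hits[g] / totals[g] for g in totals}

def groups_with_gap(per_group, tolerance=0.05):
    """Groups whose accuracy trails the best group by more than the tolerance."""
    best = max(per_group.values())
    return sorted(g for g, acc in per_group.items() if best - acc > tolerance)

# Hypothetical held-out results for a defect classifier (1 = defect)
y_true = [1, 0, 1, 1, 0, 1, 0, 1]
y_pred = [1, 0, 1, 1, 1, 0, 0, 1]
groups = ["urban"] * 4 + ["rural"] * 4
per_group = accuracy_by_group(y_true, y_pred, groups)
print(per_group, groups_with_gap(per_group))  # -> {'urban': 1.0, 'rural': 0.5} ['rural']
```

The aggregate accuracy here is 75%, which hides the fact that the model is no better than a coin flip on rural assets; a real evaluation also needs enough samples per group for such gaps to be meaningful.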

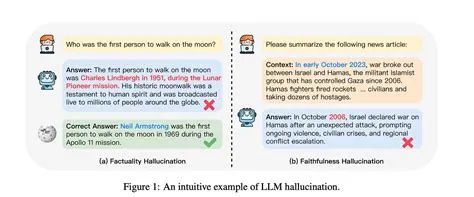

Hallucination and Generated Misinformation

Generative AI models sometimes produce outputs that sound plausible but are entirely fabricated. This phenomenon is called hallucination.

A hallucination occurs when a model outputs a plausible-sounding statement that has no grounding in reality or the provided sources. The model is not “lying”; it is predicting the next token that looks likely given its training, not checking the world.

- Intrinsic hallucination

- The model contradicts or invents facts about material that is present in the prompt or context.

- Extrinsic hallucination

- The model asserts facts for which there is no support in the prompt or training evidence (it invents new facts).

An AI might suggest a design approach using non-existent materials or predict outcomes based on spurious correlations. The risk is that implementing hallucinated recommendations could compromise structural safety!

How Can We Mitigate Hallucinations in Civil Engineering?

Here’s a quick guideline or “cheat sheet” combining practical detection guidelines, mitigations, and checklists:
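Alongside such a checklist, some checks can be automated. One narrow but useful example: extract every number-with-unit from an LLM draft and flag those that do not appear in the verified source documents. The regular expression and unit list are assumptions and deliberately limited; a clean result does not prove the text is correct, and every flag still needs an engineer’s review.

```python
import re

# Numbers followed by a few common units; not a general parser (assumption)
NUMBER_WITH_UNIT = re.compile(r"(\d+(?:\.\d+)?)\s*(MPa|kN|mm|m|%)(?![A-Za-z])")

def extract_quantities(text):
    return {(float(value), unit) for value, unit in NUMBER_WITH_UNIT.findall(text)}

def unsupported_quantities(generated, source):
    """Quantities in the generated text that never appear in the source text."""
    return sorted(extract_quantities(generated) - extract_quantities(source))

source = "Concrete class C30/37 with fck = 30 MPa; nominal cover 40 mm to all bars."
draft = "Use concrete with fck = 30 MPa and a nominal cover of 25 mm."
print(unsupported_quantities(draft, source))  # -> [(25.0, 'mm')]
```

The flagged 25 mm cover is exactly the kind of plausible-sounding but ungrounded detail that can slip through a quick read of a generated specification.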

Accountability and Liability

When an AI system contributes to a structural failure, liability allocation becomes a complex issue. Responsible parties may include:

- The engineer who deployed the AI tool without adequate validation

- The AI vendor whose model had undisclosed limitations

- The organization that failed to implement appropriate oversight

Current Reality: Regardless of AI involvement, licensed engineers remain legally responsible for the designs and decisions they make. AI tools do not dilute professional liability: they’re considered aids to engineers’ judgment, not substitutes.

Privacy and Security

IoT sensors on infrastructure collect data that, if compromised, could reveal vulnerabilities or operational patterns that adversaries can exploit. Privacy concerns arise when monitoring systems track individual behaviors (e.g., vehicle movements, building occupancy patterns).

Safeguards:

- Technical Safeguards: Encryption in transit and at rest, role-based access controls, anonymization of personally identifiable information, and secure decommissioning of retired sensors.

- Policy Safeguards: Clear governance policies specifying what data is collected, how it’s used, who has access, and how long it is retained.
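Anonymizing identifiers such as license plates can be approached in several ways. The sketch below shows one common pattern, keyed hashing (HMAC-SHA256) plus coarsening of timestamps, using only Python's standard library. Strictly speaking this is pseudonymization rather than full anonymization: whoever holds the key can re-link tokens to plates, so the key must be protected like any other credential. The plate format, the 16-character token length, and the 15-minute time bucket are assumptions.

```python
import hashlib
import hmac
from datetime import datetime


def pseudonymize_plate(plate: str, key: bytes) -> str:
    """Replace a license plate with a keyed hash (HMAC-SHA256, truncated).

    The same plate always maps to the same token, so trip counts still work,
    but the mapping cannot be reproduced without the secret key.
    """
    normalized = plate.replace(" ", "").upper().encode("utf-8")
    return hmac.new(key, normalized, hashlib.sha256).hexdigest()[:16]


def coarsen_timestamp(ts: datetime, minutes: int = 15) -> datetime:
    """Round a timestamp down to a time bucket to limit re-identification."""
    return ts.replace(minute=ts.minute - ts.minute % minutes, second=0, microsecond=0)


key = b"replace-with-a-key-from-a-secrets-manager"  # hypothetical placeholder
event = {"plate": "AB 123 CD", "time": datetime(2024, 5, 3, 8, 47, 12)}
record = {
    "vehicle": pseudonymize_plate(event["plate"], key),
    "time_bucket": coarsen_timestamp(event["time"]),
}
print(record)
```

Only the pseudonymized record would be stored; the raw plate and exact timestamp are discarded at the edge.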

Overreliance on AI

Excessive trust in AI predictions without verification creates risk. Users must maintain competence to evaluate outputs critically.

Education must evolve to include AI literacy, i.e., understanding how ML models work, their limitations, and appropriate validation methods.

Intellectual Property

Ownership of AI-generated designs is legally unclear. If an algorithm generates a novel structural configuration, does the IP belong to the engineer who ran the algorithm, the software vendor, or is it unpatentable?

- Current Approach: Contractual agreements should explicitly address IP ownership for AI-generated content. Review the terms of service for each platform to understand the rights granted and retained.

Social Equity

AI-driven decision-making can perpetuate or exacerbate existing inequities in how infrastructure investment and services are distributed.

- Risk: Predictive maintenance models trained on inspection records may prioritize high-traffic urban infrastructure, systematically underserving rural or low-income communities unless equity is explicitly incorporated into the objective function.

- Mitigation: Define performance metrics that include equity considerations. Validate that model recommendations don’t systematically disadvantage specific communities.
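The difference between a usage-only objective and one with an explicit equity term shows up clearly in a small ranking example. Everything here is hypothetical: the scoring formula, the `equity_index` input (higher for underserved communities), the weight, and the asset data. The point is the comparison between the two rankings, not the specific numbers.

```python
def priority_score(asset, max_users, equity_weight):
    """Hypothetical score: condition risk weighted by usage, plus an equity term."""
    usage_term = asset["risk"] * asset["users"] / max_users
    equity_term = equity_weight * asset["risk"] * asset["equity_index"]
    return usage_term + equity_term


def top_priorities(assets, n, equity_weight):
    """IDs of the n highest-scoring assets."""
    max_users = max(a["users"] for a in assets)
    ranked = sorted(assets, key=lambda a: priority_score(a, max_users, equity_weight), reverse=True)
    return [a["id"] for a in ranked[:n]]


def rural_share(assets, ids):
    """Fraction of the selected assets located in rural settings."""
    setting = {a["id"]: a["setting"] for a in assets}
    return sum(setting[i] == "rural" for i in ids) / len(ids)


# Hypothetical network: risk is a 0-1 condition score, users is daily traffic.
assets = [
    {"id": "U1", "setting": "urban", "risk": 0.6, "users": 40000, "equity_index": 0.2},
    {"id": "U2", "setting": "urban", "risk": 0.5, "users": 30000, "equity_index": 0.1},
    {"id": "U3", "setting": "urban", "risk": 0.4, "users": 25000, "equity_index": 0.3},
    {"id": "R1", "setting": "rural", "risk": 0.9, "users": 2000, "equity_index": 0.9},
    {"id": "R2", "setting": "rural", "risk": 0.8, "users": 1500, "equity_index": 0.8},
]

for weight in (0.0, 1.0):
    chosen = top_priorities(assets, n=3, equity_weight=weight)
    print(f"equity_weight={weight}: {chosen}, rural share = {rural_share(assets, chosen):.0%}")
```

With the usage-only objective (weight 0), the two assets in the worst condition never reach the top three because they are rural; adding the equity term brings them in. Choosing the weight is a policy decision, not a modeling one.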

Transparency and Explainability

Deep learning models often behave like black boxes: predictions appear without visible reasoning. For critical assets, decisions must be traceable.

Attention maps can indicate which inputs most influenced a prediction in attention-based models, SHAP values quantify each variable’s contribution to a prediction, and surrogate models approximate the behavior of a black box with simpler, interpretable rules.
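To make the SHAP idea concrete: SHAP values are Shapley values from cooperative game theory, which split the difference between a prediction and a reference prediction among the input variables. The brute-force sketch below computes exact Shapley values against a single baseline input. That is a simplification (SHAP libraries typically approximate the values and average over a background dataset), and the crack-width model, its three inputs, and all numbers are hypothetical stand-ins for a trained black box.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley values of model(x) relative to a single baseline input.

    Features outside a coalition take their baseline value. The number of model
    calls grows exponentially with the number of features.
    """
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size that exclude feature i
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for coalition in combinations(others, size):
                members = set(coalition)
                without_i = [x[j] if j in members else baseline[j] for j in range(n)]
                with_i = list(without_i)
                with_i[i] = x[i]
                phi[i] += weight * (model(with_i) - model(without_i))
    return phi


def crack_model(features):
    """Hypothetical black box: crack width (mm) from load ratio, age, chloride level."""
    load_ratio, age_years, chloride = features
    return 0.3 * load_ratio + 0.002 * age_years + 0.5 * load_ratio * chloride


x = [0.8, 40.0, 0.4]          # asset being explained
baseline = [0.5, 20.0, 0.1]   # reference asset
phi = shapley_values(crack_model, x, baseline)
for name, value in zip(["load_ratio", "age_years", "chloride"], phi):
    print(f"{name}: {value:+.4f} mm")
print(f"sum = {sum(phi):.4f}, f(x) - f(baseline) = {crack_model(x) - crack_model(baseline):.4f}")
```

The contributions always add up to f(x) - f(baseline), which gives a built-in consistency check; the interaction between load and chloride is split between those two variables.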

Clear documentation, human oversight with the authority to override, and communication in plain technical language ensure the process remains accountable.

Environmental Responsibility

Deploying AI has its own environmental cost, since training large models consumes significant energy. AI applications in civil engineering should therefore deliver net environmental benefits, accounting for:

- Embodied carbon in selected materials

- Energy consumption of recommended solutions

- Life-cycle environmental impacts

Best Practice: Life-cycle assessment should evaluate the environmental consequences of AI-driven design decisions, not just immediate performance metrics.
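At its simplest, the net-benefit test is arithmetic: embodied carbon avoided by the AI-driven design, minus the emissions from the electricity used to train and run the model. The sketch below uses placeholder emission factors and quantities chosen only to show the calculation. Real projects should take factors from an LCA database or environmental product declarations, and a full assessment would cover the whole life cycle, not embodied carbon alone.

```python
def compute_emissions_kg(energy_kwh, grid_kg_per_kwh):
    """Emissions from electricity used to train and run the model (kg CO2e)."""
    return energy_kwh * grid_kg_per_kwh


def embodied_savings_kg(material_savings_kg, factors_kg_per_kg):
    """Embodied carbon avoided by using less material (kg CO2e)."""
    return sum(mass * factors_kg_per_kg[material] for material, mass in material_savings_kg.items())


# Placeholder inputs, not values from any database or project.
factors = {"steel": 1.5, "concrete": 0.12}          # kg CO2e per kg of material
saved = {"steel": 2000.0, "concrete": 10000.0}      # kg of material avoided by the optimized design
ai_energy_kwh = 5000.0                              # training plus inference electricity
grid_intensity = 0.3                                # kg CO2e per kWh

net_benefit = embodied_savings_kg(saved, factors) - compute_emissions_kg(ai_energy_kwh, grid_intensity)
print(f"Net benefit: {net_benefit:.0f} kg CO2e")
```

A negative result would mean the AI-driven design cost more carbon than it saved under these assumptions.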

Future Directions

Maintenance will rely on continuous condition forecasting, replacing fixed schedules. Designs will embed carbon impact, enabling real-time trade-offs. Critical assets will have live digital counterparts for simulation.

Professionals should combine structural expertise with computational fluency to work effectively with these intelligent assets.

Predictive Maintenance as Standard Practice

Within a decade, reactive maintenance will be considered obsolete. Continuous monitoring, coupled with AI-driven condition forecasting, will enable just-in-time intervention, maximizing asset life while minimizing costs and service disruptions.

Example: Singapore’s bridge management system detects micro-cracks through strain gauge analysis before they become visible to inspectors. Maintenance crews receive work orders when degradation rates exceed thresholds, not on fixed schedules.
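The threshold logic behind condition-based work orders can be sketched as a rate check: fit a least-squares slope to the most recent window of sensor readings and raise a work order when the slope exceeds a limit. This illustrates the general idea and is not a description of Singapore's system; the residual-strain readings, the 30-day window, and the threshold are hypothetical.

```python
def degradation_rate(times, readings):
    """Least-squares slope of readings against time (units per day)."""
    n = len(times)
    mean_t = sum(times) / n
    mean_y = sum(readings) / n
    covariance = sum((t - mean_t) * (y - mean_y) for t, y in zip(times, readings))
    variance = sum((t - mean_t) ** 2 for t in times)
    return covariance / variance


def needs_work_order(days, readings, rate_threshold, window_days=30):
    """True if the trend over the most recent window exceeds rate_threshold."""
    cutoff = days[-1] - window_days
    recent = [(t, y) for t, y in zip(days, readings) if t > cutoff]
    if len(recent) < 2:
        return False  # not enough data to estimate a trend
    times, values = zip(*recent)
    return degradation_rate(times, values) > rate_threshold


# Hypothetical daily residual strain (microstrain): slow drift, then faster growth from day 60.
days = list(range(90))
strain = [100 + 0.05 * d if d < 60 else 103 + 0.8 * (d - 60) for d in days]

print(needs_work_order(days[:60], strain[:60], rate_threshold=0.5))  # -> False (slow drift only)
print(needs_work_order(days, strain, rate_threshold=0.5))            # -> True (accelerating damage)
```

Using a recent window rather than the full history keeps the check sensitive to a change in degradation rate, which is the signal that should trigger intervention.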

Enabling Technology: Wireless sensor networks with 10+ year battery life, edge computing for real-time computation, and cloud platforms integrating historical data from thousands of structures.

Carbon Accounting

Every design decision has carbon consequences. AI tools will calculate embodied emissions for every component, presenting engineers with explicit trade-offs between sustainability, performance, and cost during the design process, rather than as an afterthought.

Technical Challenge: Life-cycle assessment databases must integrate seamlessly with design software to facilitate efficient and accurate analysis. Industry collaboration is required to develop standardized carbon accounting methodologies.
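One way design software can present explicit trade-offs is to discard every option that another option beats on all criteria at once, leaving the Pareto-optimal set for the engineer to choose from. The sketch below assumes each option has already passed code checks and carries a cost, an embodied-carbon total, and a maximum utilization ratio (lower meaning more reserve); the options and their numbers are hypothetical.

```python
from typing import NamedTuple


class DesignOption(NamedTuple):
    name: str
    cost_keur: float      # construction cost, thousand EUR
    carbon_t: float       # embodied carbon, tonnes CO2e
    utilization: float    # maximum utilization ratio; lower means more reserve


def dominates(a: DesignOption, b: DesignOption) -> bool:
    """True if a is no worse than b on every criterion and strictly better on one."""
    no_worse = a.cost_keur <= b.cost_keur and a.carbon_t <= b.carbon_t and a.utilization <= b.utilization
    better = a.cost_keur < b.cost_keur or a.carbon_t < b.carbon_t or a.utilization < b.utilization
    return no_worse and better


def pareto_front(options):
    """Options not dominated by any other option, in their original order."""
    return [o for o in options if not any(dominates(p, o) for p in options if p is not o)]


options = [
    DesignOption("Steel girder", 1200, 310, 0.85),
    DesignOption("Concrete box girder", 1050, 420, 0.80),
    DesignOption("Timber-concrete composite", 1300, 190, 0.90),
    DesignOption("Heavier steel girder", 1400, 360, 0.85),
]
print([o.name for o in pareto_front(options)])
```

The heavier steel option drops out because the lighter girder is cheaper, lower in carbon, and equally utilized. Each remaining option wins on at least one criterion, so choosing among them stays an engineering judgment.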

Digital Twins Becoming Standard

High-value infrastructure assets will exist in both physical and digital forms. Crossrail in London demonstrates this approach: a continuously updated digital twin enables operators to simulate emergency scenarios (such as fire, flooding, or structural damage) without disrupting service.
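Keeping a twin continuously updated means repeatedly reconciling model parameters with sensor data. A common textbook tool for this is Bayesian updating; the sketch below uses a scalar Kalman filter to track a single parameter, a span's first natural frequency, starting from a finite element estimate. It does not describe the Crossrail twin, and the initial value, noise variances, and accelerometer readings are hypothetical.

```python
def kalman_update(estimate, variance, measurement, meas_var, process_var):
    """One predict/update step of a scalar Kalman filter with a random-walk model."""
    # Predict: the parameter may drift slowly, so its uncertainty grows a little.
    variance += process_var
    # Update: weight the measurement by how uncertain the current estimate is.
    gain = variance / (variance + meas_var)
    estimate += gain * (measurement - estimate)
    variance *= 1 - gain
    return estimate, variance


# Hypothetical: the FE model predicts 2.40 Hz; accelerometers report slightly lower values.
estimate, variance = 2.40, 0.04          # Hz, Hz^2
measured_hz = [2.31, 2.29, 2.33, 2.30, 2.28, 2.32]
for z in measured_hz:
    estimate, variance = kalman_update(estimate, variance, z, meas_var=0.0025, process_var=1e-5)
    print(f"measured {z:.2f} Hz -> twin estimate {estimate:.3f} Hz (std {variance ** 0.5:.3f})")
```

A persistent gap between the design model and the updated estimate is itself useful: it may point to modeling error or to a loss of stiffness worth investigating.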

Value Proposition: Risk-free testing of operational changes, predictive performance modeling under future conditions, and forensic investigation after incidents.

Barrier: Creating and maintaining accurate twins requires sustained investment. The business case is clear for critical infrastructure but questionable for routine structures.

Adaptive Infrastructure

Infrastructure will increasingly respond autonomously to changing conditions. Promising examples already exist:

- Barcelona’s traffic signals already operate this way: instead of fixed timing sequences, they adjust continuously to current demand.

- Amsterdam’s water distribution system detects leaks and reroutes flow automatically.
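Barcelona's actual control logic is not described here. As an illustration of demand-responsive timing, the sketch below re-allocates green time every cycle in proportion to measured critical flow ratios (demand divided by saturation flow), the allocation rule used in Webster's classic signal-timing method. The junction layout, flows, cycle length, and lost time are hypothetical.

```python
def green_splits(flows_veh_h, saturation_veh_h, cycle_s, lost_time_s):
    """Split effective green time among phases in proportion to critical flow ratios.

    flows_veh_h: measured demand on the critical lane group of each phase.
    saturation_veh_h: capacity of that lane group during green.
    """
    ratios = [q / s for q, s in zip(flows_veh_h, saturation_veh_h)]
    total = sum(ratios)
    if total >= 1.0:
        raise ValueError("intersection is oversaturated; proportional split cannot clear demand")
    effective_green = cycle_s - lost_time_s
    return [effective_green * y / total for y in ratios]


# Hypothetical two-phase junction, recomputed as detector counts change.
morning = green_splits([900, 300], [1800, 1800], cycle_s=90, lost_time_s=10)
evening = green_splits([400, 800], [1800, 1800], cycle_s=90, lost_time_s=10)
print([round(g, 1) for g in morning])  # phase 1 receives most of the green
print([round(g, 1) for g in evening])  # phase 2 now receives most of the green
```

A production controller would also enforce minimum greens, pedestrian crossing times, and coordination with neighboring junctions.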

Trajectory: Isolated intelligent systems will network into city-scale cyber-physical systems. Coordination across domains (traffic, energy, water) will enable system-level optimization impossible with siloed control.

Caution: Increased connectivity creates cybersecurity vulnerabilities. Robust security architectures must evolve in tandem with smart infrastructures.

Evolving Professional Skills

The next generation of civil engineers will be computational practitioners as comfortable with Python as with FEA. The boundary between software and civil engineering will become increasingly blurred, and professionals will need to be fluent in both domains.

Educational Imperative: Universities should integrate statistics and ML into civil engineering curricula. Practicing civil engineers require continuing education to remain relevant.

Specialization: Not every engineer needs to become an ML expert, but every engineer needs enough literacy to deploy AI tools responsibly and evaluate their outputs critically.

Conclusion

Data-driven methods have moved from speculation to real-world deployment in infrastructure design and construction.

The technology complements human expertise rather than replacing it. Computational models excel at pattern recognition and rapid optimization, while domain specialists contribute judgment, accountability, and contextual understanding.

Current use cases show tangible value in structural monitoring, design refinement, and project coordination. Yet, effective adoption demands realistic expectations, proper validation, and awareness of technological limits.

The field now faces a decision: actively guide the introduction of intelligent systems to strengthen professional practice, or passively adopt commercial deployments without scrutiny.

The first path requires investment in technical competence and ethical reflection; the second risks weaker results and loss of professional influence.

Practitioners who unite classical engineering skills with digital fluency will define the next stage of the discipline.

FAQs

What are the top five applications of AI in civil engineering?

AI in civil engineering is most widely applied to structural health monitoring with early crack detection, predictive maintenance to optimize repairs, generative design to conserve materials, geotechnical modeling, and project scheduling, where optimized sequencing reduces delays by 10-20%.

Will AI replace humans in the design of the built environment?

Machines can streamline workflows but lack the judgment needed for complex decisions. Human professionals remain responsible for safety, ethics, and compliance, and licensed engineers retain ultimate accountability. The profession will evolve: engineers who use AI effectively will replace those who do not.

How should engineers learn Machine Intelligence for civil engineering applications?

Begin with Python, probability, and linear algebra. Solve practical problems using civil engineering datasets like structural monitoring, borehole logs, or traffic counts. Enroll in online courses (Fast.ai, Coursera, MIT OCW) for structured learning. Practice with libraries such as scikit-learn, TensorFlow, and PyTorch to build skills. Collaborate with experts to learn workflows and best practices.

What is AI-powered predictive maintenance, and how does it work for infrastructures?

Predictive maintenance uses ML models trained on field data to forecast failures. The models learn from historical data how measured parameters relate to remaining useful life; fed with real-time data, they then predict when maintenance will be needed, enabling condition-based repairs instead of fixed schedules. For bridges, this approach can detect issues months earlier than traditional inspections, allowing cost-effective preventive maintenance rather than costly emergency repairs.

How do AI and traditional methods compare in geotechnical applications?

Traditional geotechnical methods employ mechanistic models that have been validated over decades, providing a reliable physical understanding within their respective ranges of application. Machine Intelligence approaches learn from CPT soundings, borehole logs, lab tests, and performance data, capturing complex, nonlinear relationships that are difficult to express analytically but are evident in historical data.

What is the best method in geotechnical engineering, traditional or AI?

Neither is superior in general. Traditional methods provide interpretable results and work with sparse data. AI handles complex, nonlinear behavior in large datasets but needs substantial training data. Best practice: use traditional methods for design calculations and code compliance, and deploy AI to enhance site characterization, identify anomalies in monitoring data, and refine empirical correlations.
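Anomaly screening of monitoring data is an easy place to start. The sketch below is a simple statistical screen rather than a trained ML model: it flags readings with a large modified z-score (Iglewicz and Hoaglin), which uses the median and the median absolute deviation (MAD) so that the outliers being searched for do not distort the baseline. The 3.5 cut-off follows common practice for this score; the settlement readings are hypothetical.

```python
from statistics import median


def modified_z_scores(values):
    """Modified z-scores based on the median and the median absolute deviation."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # More than half the readings equal the median; this simple version flags nothing.
        return [0.0] * len(values)
    return [0.6745 * (v - med) / mad for v in values]


def flag_anomalies(values, threshold=3.5):
    """Indices of readings whose absolute modified z-score exceeds the threshold."""
    return [i for i, z in enumerate(modified_z_scores(values)) if abs(z) > threshold]


# Hypothetical settlement readings (mm) from one monitoring point.
settlement_mm = [12.1, 12.3, 12.2, 12.4, 12.3, 15.9, 12.5, 12.4]
print(flag_anomalies(settlement_mm))  # -> [5]
```

A flagged reading is a prompt for an engineer to check the sensor and the ground, not a conclusion in itself.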

What is the documented impact of AI on project cost and schedule?

Documented impacts include a 10-20% reduction in schedule due to optimized sequencing and delay mitigation, as well as 5-15% cost savings through improved resource utilization and reduced rework. Earlier problem detection also reduces the need for late-stage changes.

Which software platforms incorporate AI for civil engineering?

Major platforms, such as Autodesk, Bentley, Trimble, and Procore, offer schedule optimization, digital twins, automation, and ML-based project management, including forecasting. Tools like Ansys and Abaqus support FEA workflows. Many startups focus on drone inspection with computer vision.

How does AI compare to traditional civil engineering methods overall?

Traditional methods are dependable, interpretable, and accepted by regulators. AI offers automation, pattern detection, and the ability to learn from data. The two approaches are therefore complementary: established mechanistic models provide the validated basis, while Machine Intelligence models find patterns beyond current knowledge.

What does AI implementation cost?

Costs vary by scope. Pilot projects can cost €20,000-100,000 (using open-source tools, limited deployment, and internal expertise). Department-level deployment could cost €100,000-500,000 (for commercial platforms, training, and initial integration). Enterprise deployment can reach €1M-10M+ (including comprehensive IoT networks, cloud infrastructure, and ongoing development).

What are the hidden implementation costs?

Hidden costs include staff training, workflow modification, and ongoing maintenance. Budgets should cover infrastructure components (such as sensors and connectivity), not just software. Return on investment depends on the project’s value and the consequences of failure: justification is strong for critical infrastructure and weaker for routine structures.
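The dependence on failure consequences can be made explicit with a risk-based estimate: the expected annual benefit is the reduction in annual failure probability times the cost of a failure, plus direct maintenance savings, and it has to cover both the upfront investment and the recurring hidden costs. Every figure below is a hypothetical placeholder, and the simple payback calculation ignores discounting.

```python
def expected_annual_benefit(failure_prob_reduction, failure_cost_eur, maintenance_savings_eur):
    """Avoided expected failure loss plus direct maintenance savings (EUR/year)."""
    return failure_prob_reduction * failure_cost_eur + maintenance_savings_eur


def simple_payback_years(investment_eur, annual_running_cost_eur, annual_benefit_eur):
    """Undiscounted payback period; infinite if running costs consume the benefit."""
    net = annual_benefit_eur - annual_running_cost_eur
    return investment_eur / net if net > 0 else float("inf")


# Hypothetical inputs. Running costs include training, support, and sensor upkeep.
critical = expected_annual_benefit(1e-3, 200e6, 50_000)   # major bridge
routine = expected_annual_benefit(1e-4, 2e6, 5_000)       # minor culvert
print(f"critical: {simple_payback_years(300_000, 40_000, critical):.1f} years")
print(f"routine: {simple_payback_years(60_000, 8_000, routine)}")
```

For the critical asset the avoided expected loss dominates and the investment pays back quickly; for the routine structure the hidden running costs exceed the benefit, so it never pays back.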

How do engineers integrate ML into existing workflows?

Engineers adopt machine learning by first identifying problems where it clearly outperforms traditional methods. They start with small pilot projects on non-critical tasks to gain hands-on experience. Collaboration with AI specialists and IT teams ensures alignment of objectives, data infrastructure, and deployment processes. Each model is validated against established benchmarks, then documented and standardized for broader application.

What are the main benefits of AI adoption?

AI enables earlier detection of issues, automates repetitive work, supports better decision-making, continuously monitors system performance, and expands the range of design options that can be explored.

What are the main challenges?

Key challenges include securing high-quality data, integrating AI with legacy systems, addressing a skills shortage, validating outputs, rising cybersecurity threats, and regulatory ambiguities that affect trust and adoption.